How to Automate Change Request Processing with AI

If you've ever submitted a change request and watched it disappear into a black hole of CAB meetings, email chains, and approval queues for two weeks, you already understand the problem. The change management process at most organizations is absurdly manual, painfully slow, and—ironically—introduces more risk than it mitigates because people start circumventing it entirely.

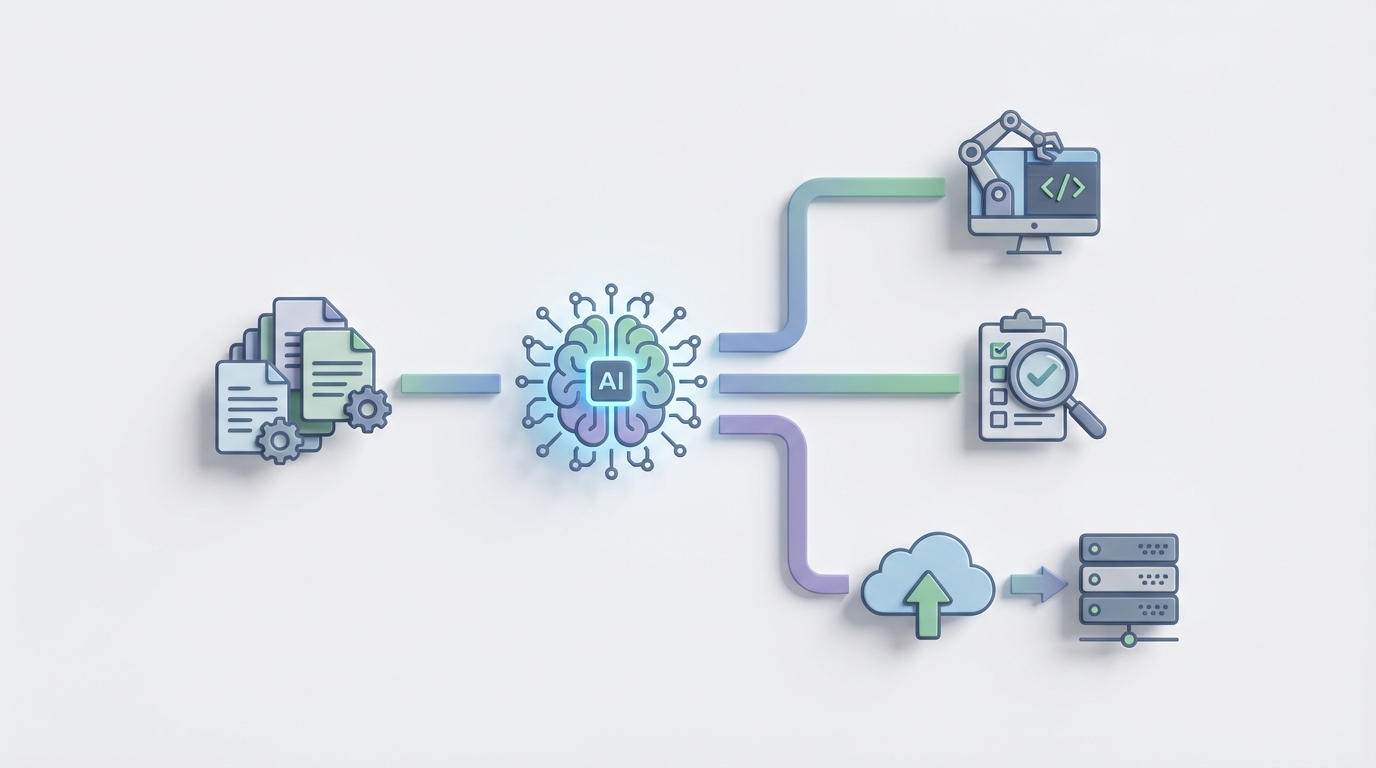

Here's the thing: most of the work in change request processing is pattern matching, data lookup, and routing. That's exactly what AI agents are good at. Not the judgment calls. Not the "should we migrate our entire payment stack to a new provider" decisions. The repetitive, high-volume triage and assessment work that eats 18+ hours per normal change request.

This guide walks through how to automate the bulk of change request processing using an AI agent built on OpenClaw. No hype, no "AI will replace your entire IT team" nonsense. Just a practical breakdown of what you can automate today, what you shouldn't, and how to build it.

The Manual Workflow Today (And Why It Takes So Long)

Let's map out what actually happens when someone submits a change request in a typical ITIL-aligned organization. I'm talking about a "Normal Change"—not the pre-approved standard changes or the fire-drill emergency ones.

Step 1: Request Submission (15–30 minutes)

Someone fills out a form in ServiceNow, Jira Service Management, or whatever ITSM platform you're running. They describe what they want to change, which systems are affected, the justification, and the rollback plan. The quality of this submission varies wildly. Some people write novels. Others write "updating the server."

Step 2: Initial Screening and Logging (30–60 minutes)

A service desk coordinator or change coordinator picks it up, checks if the form is actually complete, assigns a category, sets a priority, and routes it to the right team. This is pure triage work.

Step 3: Impact and Risk Assessment (2–6 hours)

This is where things get slow. A technical team has to evaluate: What services does this affect? What are the dependencies? What's the blast radius if it fails? They're pulling up the CMDB (if it's accurate, and that's a big if), cross-referencing spreadsheets, pinging other teams on Slack. The risk assessment is often subjective: one person's "medium risk" is another's "high risk."

Step 4: Change Advisory Board Review (1–3 days of calendar time)

The weekly CAB meeting. Everyone brings their changes, the board discusses, asks questions, sometimes defers to the next meeting because the right stakeholder isn't in the room. For organizations processing hundreds of changes per month, this is a massive bottleneck. The actual discussion per change might be 5–15 minutes, but the waiting-for-the-next-meeting delay kills velocity.

Step 5: Approval Chain (1–5 days)

After CAB, the change still needs formal sign-offs from managers, architects, security, compliance, and business owners. Sequential approvals. One person on vacation, and the whole thing stalls.

Step 6: Scheduling and Implementation (variable)

Coordinate a maintenance window, allocate resources, execute the change, run post-implementation testing, document the results.

Step 7: Post-Implementation Review and Closure (1–2 hours)

Write up whether it worked, capture lessons learned, close the ticket.

Total end-to-end time for a Normal Change: 5–16 days. Total manual effort: 18–25 hours per change.

Multiply that by hundreds or thousands of changes per month, and you're looking at a staggering amount of human time spent on process, not on actual engineering work.

What Makes This Painful

The time cost alone is brutal, but the downstream effects are worse:

Approval delays are the #1 complaint. Gartner data shows manual change processes contribute to 60–80% of unplanned outages. The review itself isn't what breaks things; the damage comes from people bypassing the process when it's too slow. Shadow IT thrives when the official process takes two weeks for a config change.

Risk assessment is inconsistent. Without data-driven scoring, you get subjective evaluations that vary by who's doing the assessment and what mood they're in. Two identical changes might get classified differently by different people on different days.

High-volume, low-risk changes clog the pipeline. The vast majority of changes are routine. Updating a firewall rule. Deploying a patched version of an app. Scaling a cloud resource. These don't need a CAB meeting. But they go through the same pipeline as a database migration because nobody has built the filtering logic to separate them.

Compliance and audit burden. Every change needs documentation, evidence of approval, risk assessment artifacts. Collecting this manually for auditors is a recurring nightmare.

Change failure rates at immature organizations sit at 15–25%. Not because the changes themselves are risky, but because the process is so slow and cumbersome that shortcuts get taken, documentation gets skipped, and testing gets compressed.

What AI Can Handle Right Now

Let's be specific about what an AI agent can realistically do well in this workflow versus where you're kidding yourself.

High-confidence automation targets:

- Classification and routing. Reading a change description and categorizing it (standard/normal/emergency), identifying the affected configuration items from CMDB, and routing to the correct team. This is straightforward NLP work. An OpenClaw agent can parse the submission, match against your CMDB taxonomy, and route with high accuracy.

- Risk and impact scoring. Train on your historical change data: which changes succeeded, which failed, and what their characteristics were. An AI model can predict risk scores and failure probability far more consistently than a human doing it from gut feel. ServiceNow's Predictive Intelligence does a version of this, but building it on OpenClaw gives you more control and customization.

- Auto-approval for standard/low-risk changes. If the risk score is below a threshold and the change matches a known pattern, approve it automatically and notify stakeholders. No CAB needed. This alone can eliminate 30–50% of your CAB workload.

- Dependency mapping and blast radius analysis. An agent that queries your CMDB, cloud provider APIs, and monitoring tools to build a real-time dependency graph and show what's actually affected. Way better than someone manually tracing connections in a spreadsheet.

- Natural language summarization. Generate CAB briefing documents, executive summaries, and implementation plan drafts from the change request data. Save the CAB members from reading raw tickets.

- Post-implementation review generation. Compare monitoring data from before and after the change window, flag anomalies, and auto-generate PIR reports.
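To make the last item concrete, here's a minimal sketch of a before/after comparison. Everything in it is an assumption for illustration: the function name, the 20% tolerance, and the sample latency numbers are made up, and a real agent would pull the series from your monitoring tool rather than hard-coded lists.

```python
from statistics import mean


def flag_regression(before: list[float], after: list[float],
                    tolerance: float = 0.20) -> dict:
    """Compare a metric's mean before and after a change window.

    Assumes higher values are worse (latency, error rate). Flags a
    regression when the post-change mean exceeds the pre-change mean
    by more than `tolerance` (hypothetical default of 20%).
    """
    baseline = mean(before)
    current = mean(after)
    if baseline == 0:
        # Avoid dividing by zero: any increase from zero counts as unbounded
        change = 0.0 if current == 0 else float("inf")
    else:
        change = (current - baseline) / baseline
    return {
        "baseline": baseline,
        "current": current,
        "relative_change": change,
        "regression": change > tolerance,
    }


# Hypothetical p95 latency samples (ms) on either side of a change window
report = flag_regression([100, 110, 90], [150, 160, 140])
print(report["regression"], round(report["relative_change"], 2))
```

A mean comparison is deliberately crude. It's enough to draft a PIR that a human then confirms, not enough to close the ticket on its own.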

Where human judgment is still essential:

- Business value and priority trade-offs (revenue impact, customer experience decisions)

- Novel, first-time changes with no historical precedent

- Legal, ethical, or contractual implications

- Final accountability—regulators want a named human

- Complex organizational politics that don't show up in data

How to Build This with OpenClaw: Step by Step

Here's how to build a change request processing agent on OpenClaw that handles triage, risk assessment, and routing—the highest-ROI automation targets.

Step 1: Define Your Agent's Scope

Start narrow. Don't try to automate the entire lifecycle on day one. The highest-value starting point is an agent that handles steps 2 and 3 from our workflow above: initial screening/classification and risk/impact assessment.

In OpenClaw, you'll create an agent with a clear system prompt that defines its role:

You are a Change Request Triage Agent. Your responsibilities:

1. Validate change request completeness

2. Classify the change type (Standard, Normal, Emergency)

3. Identify affected Configuration Items from the CMDB

4. Calculate a risk score based on historical patterns

5. Route to the appropriate approval workflow

You do NOT approve high-risk changes. You flag them for human review.
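The first responsibility in that prompt, completeness validation, can be backed by a cheap deterministic pre-check before the agent reads anything. The sketch below is one way to do it, not an OpenClaw feature: the field names and the five-word minimum are assumptions chosen to catch submissions like "updating the server."

```python
# Hypothetical field names; map these to your ITSM platform's schema
REQUIRED_FIELDS = ("description", "justification", "affected_systems", "rollback_plan")
MIN_DESCRIPTION_WORDS = 5  # assumed threshold, tune to taste


def validate_request(cr: dict) -> list[str]:
    """Return specific follow-up questions; an empty list means complete."""
    questions = []
    for field in REQUIRED_FIELDS:
        if not cr.get(field):  # missing, None, empty string, or empty list
            questions.append(f"Please provide the {field.replace('_', ' ')}.")
    description = cr.get("description") or ""
    if description and len(description.split()) < MIN_DESCRIPTION_WORDS:
        questions.append("Please describe the change in more detail.")
    return questions


incomplete = {
    "description": "updating the server",
    "justification": "security patch",
    "affected_systems": ["web-01"],
}
for question in validate_request(incomplete):
    print(question)
```

Returning questions rather than a pass/fail flag matters: the requester gets told exactly what's missing instead of a generic rejection.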

Step 2: Connect Your Data Sources

Your agent is only as good as the data it can access. On OpenClaw, you'll configure integrations with:

- Your ITSM platform (ServiceNow, Jira SM, etc.) via API to pull change request details and push back classifications/scores

- Your CMDB for configuration item lookups and dependency data

- Historical change records as training context—past changes with their outcomes, risk levels, and failure/success data

- Monitoring tools (Datadog, Splunk, Dynatrace) for current system health context

OpenClaw's integration layer lets you define these as tool functions the agent can call:

```python
# cmdb_api, analytics_api, and monitoring_api stand in for your own integration
# clients; compute_weighted_risk and categorize_risk are helpers you supply.

# Tool function: Look up Configuration Item in CMDB
def lookup_ci(ci_name: str) -> dict:
    """Query CMDB for configuration item details,
    dependencies, and recent change history."""
    response = cmdb_api.search(
        query=ci_name,
        include_relationships=True,
        include_change_history=True,
        history_window_days=90,
    )
    return {
        "ci_id": response.id,
        "ci_type": response.type,
        "environment": response.environment,
        "tier": response.business_criticality,
        "upstream_dependencies": response.parents,
        "downstream_dependencies": response.children,
        "recent_changes": response.change_history,
        "recent_incidents": response.incident_history,
    }


# Tool function: Calculate risk score
def calculate_risk_score(change_data: dict) -> dict:
    """Score change risk based on historical patterns,
    affected CI criticality, and current system health."""
    ci_tier = change_data["ci_tier"]
    change_type = change_data["change_category"]
    environment = change_data["environment"]

    # Pull historical failure rate for similar changes
    historical = analytics_api.get_failure_rate(
        ci_type=change_data["ci_type"],
        change_category=change_type,
        environment=environment,
        window_days=365,
    )

    # Check current system health
    health = monitoring_api.get_health(ci_id=change_data["ci_id"])

    # `risk` is assumed to expose a numeric `score` (0-100) plus the
    # factors that contributed to it
    risk = compute_weighted_risk(
        ci_criticality=ci_tier,
        historical_failure_rate=historical.rate,
        active_incidents=health.open_incidents,
        deployment_frequency=historical.frequency,
        # An empty rollback plan counts as missing
        rollback_plan_present=bool(change_data.get("rollback_plan")),
    )

    # Keep the recommendation in step with the three routing bands
    if risk.score < 25:
        recommendation = "auto_approve"
    elif risk.score < 60:
        recommendation = "lightweight_review"
    else:
        recommendation = "cab_review"

    return {
        "score": risk.score,  # 0-100
        "level": categorize_risk(risk.score),  # Low/Medium/High/Critical
        "factors": risk.contributing_factors,
        "recommendation": recommendation,
    }
```
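The `compute_weighted_risk` and `categorize_risk` helpers called above aren't defined in this guide. Here's one hypothetical shape they could take, as a starting point for your own scoring. The weights, the tier normalization, and the 85-point cutoff for "Critical" are all invented for illustration, and the sketch leaves out `deployment_frequency` for brevity, so treat it as an illustration rather than a drop-in.

```python
from dataclasses import dataclass, field

# Hypothetical weights; they sum to 1.0 so the score stays within 0-100
WEIGHTS = {
    "ci_criticality": 0.35,
    "historical_failure_rate": 0.35,
    "active_incidents": 0.20,
    "no_rollback_plan": 0.10,
}


@dataclass
class RiskResult:
    score: float
    contributing_factors: dict = field(default_factory=dict)


def compute_weighted_risk(ci_criticality: int, historical_failure_rate: float,
                          active_incidents: int,
                          rollback_plan_present: bool) -> RiskResult:
    """Combine inputs normalized to 0.0-1.0 into a 0-100 score."""
    inputs = {
        # Assumes tiers 1-4 where Tier 1 is most critical: 1 -> 1.0, 4 -> 0.0
        "ci_criticality": max(0.0, min(1.0, (4 - ci_criticality) / 3)),
        "historical_failure_rate": max(0.0, min(1.0, historical_failure_rate)),
        # Saturate at three open incidents
        "active_incidents": min(max(active_incidents, 0), 3) / 3,
        "no_rollback_plan": 0.0 if rollback_plan_present else 1.0,
    }
    # Points contributed by each factor, kept for the audit trail
    factors = {name: 100 * WEIGHTS[name] * value for name, value in inputs.items()}
    return RiskResult(score=round(sum(factors.values()), 1),
                      contributing_factors=factors)


def categorize_risk(score: float) -> str:
    """Map a 0-100 score onto Low/Medium/High/Critical."""
    if score < 25:
        return "Low"
    if score < 60:
        return "Medium"
    return "High" if score < 85 else "Critical"


# Tier 1 CI, similar changes failed 2 of 8 times, no open incidents
result = compute_weighted_risk(1, 2 / 8, 0, rollback_plan_present=True)
print(result.score, categorize_risk(result.score))
```

A transparent weighted sum like this is easy to explain to auditors. Once you have enough history, you can swap it for a trained model and keep the same return shape.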

Step 3: Build the Triage Workflow

With your agent defined and data sources connected, build the processing workflow in OpenClaw:

- Trigger: New change request created in ITSM platform (webhook or polling)

- Validate: Agent checks for completeness—does it have a description, justification, affected systems, rollback plan? If not, it sends it back to the requester with specific questions about what's missing.

- Classify: Agent reads the description, matches against CMDB, categorizes the change type and priority.

- Assess: Agent calculates risk score using historical data and current system health.

- Route:

  - Risk score < 25 (Low) → Auto-approve, notify stakeholders, schedule implementation

  - Risk score 25–59 (Medium) → Route to team lead for lightweight review (skip CAB)

  - Risk score ≥ 60 (High/Critical) → Full CAB review with AI-generated briefing document

```python
import openclaw

# OpenClaw workflow definition
workflow = openclaw.Workflow(
    name="change_request_triage",
    trigger=openclaw.WebhookTrigger(
        source="servicenow",
        event="change_request.created",
    ),
    steps=[
        openclaw.Step(
            name="validate_completeness",
            agent="cr_triage_agent",
            action="validate",
            on_failure="request_more_info",
        ),
        openclaw.Step(
            name="classify_and_assess",
            agent="cr_triage_agent",
            action="classify_and_score",
            tools=["lookup_ci", "calculate_risk_score"],
        ),
        # Conditions are mutually exclusive and cover the full 0-100 range
        openclaw.ConditionalStep(
            name="route_by_risk",
            conditions={
                "risk_score < 25": "auto_approve_flow",
                "risk_score >= 25 AND risk_score < 60": "lightweight_review",
                "risk_score >= 60": "full_cab_review",
            },
        ),
    ],
)
```
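Whatever engine runs the workflow, the routing rule itself boils down to a small pure function. Keeping a plain-Python copy like the sketch below (the function name is an assumption) lets you unit-test the thresholds without a live ITSM connection, especially at the boundaries where off-by-one mistakes tend to hide.

```python
def route_by_risk(risk_score: float) -> str:
    """Return the workflow name for a 0-100 risk score.

    Bands are half-open so every score maps to exactly one route:
    [0, 25) auto-approve, [25, 60) lightweight review, [60, 100] full CAB.
    """
    if not 0 <= risk_score <= 100:
        raise ValueError(f"risk_score out of range: {risk_score}")
    if risk_score < 25:
        return "auto_approve_flow"
    if risk_score < 60:
        return "lightweight_review"
    return "full_cab_review"


# Exercise the boundary values explicitly
for score in (0, 24.9, 25, 59.9, 60, 100):
    print(score, "->", route_by_risk(score))
```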

Step 4: Build the Auto-Approval Path

For low-risk changes that match known patterns, the agent approves automatically, updates the ticket, and generates the compliance documentation:

```python
auto_approve_flow = openclaw.SubWorkflow(
    name="auto_approve_flow",
    steps=[
        openclaw.Step(
            name="generate_approval_record",
            agent="cr_triage_agent",
            action="create_approval_artifact",
            output={
                "approval_type": "automated",
                "risk_score": "{classify_and_assess.risk_score}",
                "risk_factors": "{classify_and_assess.factors}",
                "policy_basis": "Standard Change Policy v3.2",
                "audit_trail": True,
            },
        ),
        openclaw.Step(
            name="update_itsm",
            action="servicenow.update_change",
            data={
                "state": "Scheduled",
                "approval": "Approved",
                "approval_notes": "{generate_approval_record.summary}",
            },
        ),
        openclaw.Step(
            name="notify_stakeholders",
            action="send_notification",
            channels=["slack", "email"],
            message="CR {cr_number} auto-approved (Risk: Low). Implementation window: {scheduled_window}",
        ),
    ],
)
```

Step 5: Generate CAB Briefings for High-Risk Changes

For changes that do need human review, the agent does all the prep work. Instead of CAB members reading raw tickets, they get a structured briefing:

## Change Request CR-2026-4847

**Summary**: Database schema migration for customer-profiles service

**Risk Score**: 72 (High)

**Key Risk Factors**:

- Affects Tier 1 business service (customer portal)

- Similar change failed 2/8 times in past 12 months

- 3 downstream services dependent on affected CI

- No maintenance window currently scheduled

- Rollback plan provided but estimated at 45 min

**Blast Radius**: customer-portal, billing-service, notification-engine

**Recommended Questions for CAB**:

1. Can this be performed in a canary deployment pattern?

2. Has the 45-minute rollback been tested?

3. Is there a read-only fallback for downstream services?

This alone saves hours of CAB prep time and makes the meetings dramatically more focused.
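The "Blast Radius" line in a briefing like that comes from walking the dependency graph. Here's a minimal sketch using a breadth-first traversal. The graph is hypothetical sample data loosely modeled on the briefing (including the `customer-profiles-db` name, which is made up), and a real agent would build it from CMDB relationships instead.

```python
from collections import deque


def blast_radius(start: str, downstream: dict[str, list[str]]) -> list[str]:
    """Breadth-first walk over everything that depends on `start`.

    `downstream` maps each CI to the CIs that depend on it directly.
    Returns all transitively affected CIs in discovery order,
    excluding `start` itself.
    """
    seen = {start}
    queue = deque([start])
    affected = []
    while queue:
        ci = queue.popleft()
        for dependent in downstream.get(ci, []):
            if dependent not in seen:  # also guards against cycles
                seen.add(dependent)
                affected.append(dependent)
                queue.append(dependent)
    return affected


# Hypothetical dependency data
graph = {
    "customer-profiles-db": ["customer-portal", "billing-service"],
    "billing-service": ["notification-engine"],
    "notification-engine": ["billing-service"],  # cycle, handled by `seen`
}
print(blast_radius("customer-profiles-db", graph))
# ['customer-portal', 'billing-service', 'notification-engine']
```

The `seen` set is the important part. Real CMDBs contain circular relationships, and a naive recursive walk will never finish on them.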

Step 6: Monitor, Tune, and Expand

Once your triage agent is running, watch the data. OpenClaw gives you observability into every agent decision:

- Track auto-approval accuracy (are auto-approved changes succeeding?)

- Monitor classification accuracy (are changes being categorized correctly?)

- Measure risk score calibration (do high-risk scores actually correlate with failures?)
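The calibration check in particular is easy to script. The sketch below groups past changes into the three routing bands and reports the observed failure rate in each. The function name and the sample history are assumptions, and real input would come from closed change records.

```python
from collections import defaultdict


def failure_rate_by_band(records: list[tuple[float, bool]]) -> dict[str, float]:
    """Group (risk_score, failed) pairs into routing bands and return
    the observed failure rate per band."""
    totals = defaultdict(int)
    failures = defaultdict(int)
    for score, failed in records:
        band = "low" if score < 25 else "medium" if score < 60 else "high"
        totals[band] += 1
        failures[band] += int(failed)
    return {band: failures[band] / totals[band] for band in totals}


# Hypothetical outcomes: (risk score at triage, did the change fail?)
history = [
    (10, False), (18, False), (22, False), (20, True),
    (40, False), (55, True),
    (70, True), (90, True), (65, False),
]
rates = failure_rate_by_band(history)
for band in ("low", "medium", "high"):
    print(f"{band}: {rates[band]:.0%} failed")
```

If the failure rate doesn't climb from low to high, the score isn't telling you much, and the thresholds (or the inputs behind them) need work before you widen auto-approval.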

After 30–60 days of data, you'll have enough to tune your risk thresholds and expand the agent's scope. Maybe you add post-implementation review generation next. Maybe you add natural language querying so anyone can ask "what changes are scheduled against the payment system this week?"

What Still Needs a Human

Let me be blunt about what you should not automate:

Don't auto-approve changes to Tier 1 production systems regardless of risk score—at least not initially. Build trust with the process first. An AI agent that auto-approves a database migration that takes down your payment system will set your automation program back years.

Don't remove human accountability for regulatory environments. If you're in finance, healthcare, or government, auditors want a named human who approved significant changes. The AI can do all the assessment work and generate the recommendation, but a human signs off.

Don't automate novel, unprecedented changes. The agent's risk scoring is based on historical patterns. If you're doing something you've never done before—migrating to a new cloud provider, replacing a core system—the historical data doesn't apply. Flag these for thorough human review.

Don't skip the organizational change management. Your CAB members and change coordinators need to understand what the agent does and trust it. Roll it out in "shadow mode" first—let it make recommendations alongside the existing process so people can see it's accurate before you let it route automatically.
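Several of these rules can be enforced as hard policy checks that run before the risk threshold is even consulted. Here's a sketch under stated assumptions: the function and parameter names are hypothetical, Tier 1 is represented as the integer `1`, and "precedent" means you have historical data for similar changes.

```python
def may_auto_approve(risk_score: float, ci_tier: int, environment: str,
                     has_precedent: bool, shadow_mode: bool) -> bool:
    """Apply hard policy rules first, then the risk threshold."""
    if shadow_mode:
        return False  # recommend only; humans still make every decision
    if ci_tier == 1 and environment == "production":
        return False  # Tier 1 production always gets human review
    if not has_precedent:
        return False  # no history for this kind of change, so don't trust the score
    return risk_score < 25


# A very low score still cannot bypass the Tier 1 rule
print(may_auto_approve(5, ci_tier=1, environment="production",
                       has_precedent=True, shadow_mode=False))  # False
print(may_auto_approve(5, ci_tier=3, environment="production",
                       has_precedent=True, shadow_mode=False))  # True
```

Keeping these rules outside the scoring model means nobody has to trust a weight to protect the payment system. The guard is a plain `if` that anyone on the CAB can read.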

Expected Results

Based on what organizations have achieved with AI-augmented change management (and what you can build on OpenClaw), here are realistic expectations:

| Metric | Before | After | Improvement |
|---|---|---|---|
| Normal Change lead time | 5–16 days | 1–3 days | 70–80% reduction |
| Manual effort per change | 18–25 hours | 4–6 hours | ~75% reduction |
| CAB workload | All normal changes | Only high-risk (30–40%) | 60–70% reduction |
| Change failure rate | 15–25% | 5–10% | ~60% reduction (consistent, data-driven assessment) |
| Compliance documentation time | 2–4 hours per change | Auto-generated | ~90% reduction |
| Shadow IT / process bypass | Common | Reduced (process is faster) | Hard to quantify but real |

A large bank using a similar approach (ServiceNow + Predictive Intelligence, which you can replicate and customize further on OpenClaw) cut their change lead time from 14 days to under 48 hours. A global retailer reduced manual assessment time by 70%. A telecom company auto-routes 40% of incoming changes without human touch.

These aren't theoretical. These are production results.

The Real ROI Math

Let's say your organization processes 500 normal changes per month. At 20 hours of manual effort each, that's 10,000 person-hours per month on change management. If the people doing this work cost $75/hour fully loaded, that's $750,000/month—$9 million per year.

Cut 75% of that manual effort and you're saving $6.75 million annually. Even if you're a tenth of that scale, the math works.
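If you want to plug in your own numbers, the arithmetic fits in a few lines. The function name is just for illustration, and the inputs are the figures from the example above.

```python
def annual_savings(changes_per_month: int, hours_per_change: float,
                   hourly_cost: float, effort_reduction: float) -> float:
    """Direct labor savings per year from reducing manual effort."""
    annual_cost = changes_per_month * hours_per_change * hourly_cost * 12
    return annual_cost * effort_reduction


# The example above: 500 changes/month, 20 hours each, $75/hour, 75% reduction
print(f"${annual_savings(500, 20, 75, 0.75):,.0f}")  # $6,750,000

# A tenth of that scale
print(f"${annual_savings(50, 20, 75, 0.75):,.0f}")   # $675,000
```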

And that's just the direct labor savings. The indirect value—faster deployments, fewer outages from process circumvention, better audit readiness, engineers spending time on engineering instead of paperwork—is arguably worth more.

Getting Started

You don't need to boil the ocean. Here's the minimum viable automation:

- Pick your highest-volume, lowest-risk change category. Infrastructure patching, config updates, routine deployments—whatever clogs your pipeline the most.

- Build the triage agent on OpenClaw with access to your CMDB and historical change data.

- Run in shadow mode for 30 days. Let the agent classify and score alongside your existing process. Compare its outputs to human decisions.

- Enable auto-routing for low-risk changes once accuracy is validated.

- Expand to CAB briefing generation for the changes that still need human review.

- Iterate. Add post-implementation review automation, expand to more change categories, tune risk thresholds.
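For the shadow-mode step, the comparison can start as a simple tally of where the agent and the humans agreed. The sketch below assumes you've logged each change as an `(agent_route, human_route)` pair. The route labels mirror the workflow names used earlier, and the sample data is invented.

```python
from collections import Counter


def shadow_mode_report(pairs: list[tuple[str, str]]) -> dict:
    """Summarize (agent_route, human_route) pairs collected in shadow mode."""
    agree = sum(1 for agent, human in pairs if agent == human)
    # The dangerous disagreement: agent would auto-approve, the human did not
    unsafe = sum(1 for agent, human in pairs
                 if agent == "auto_approve_flow" and human != "auto_approve_flow")
    return {
        "total": len(pairs),
        "agreement_rate": agree / len(pairs) if pairs else 0.0,
        "unsafe_auto_approvals": unsafe,
        "disagreements": Counter((a, h) for a, h in pairs if a != h),
    }


# Hypothetical shadow-mode log
log = [
    ("auto_approve_flow", "auto_approve_flow"),
    ("auto_approve_flow", "full_cab_review"),
    ("lightweight_review", "lightweight_review"),
    ("full_cab_review", "full_cab_review"),
]
report = shadow_mode_report(log)
print(f"{report['agreement_rate']:.0%} agreement, "
      f"{report['unsafe_auto_approvals']} unsafe auto-approval(s)")
```

Overall agreement is the headline number, but the unsafe count is the one to drive to zero before switching on auto-routing. An agent that's too cautious wastes a reviewer's time, while one that's too permissive causes outages.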

If you want to explore pre-built automation components for ITSM workflows, check what's available on Claw Mart—the marketplace has agent templates and integration connectors that can accelerate this build significantly.

For teams that would rather have experts handle the implementation, Clawsourcing connects you with specialists who build AI agents on OpenClaw for exactly these kinds of enterprise workflows. They've done this before, they know the ITSM integration patterns, and they can get you to production faster than starting from scratch.

The change management process has been manual for decades not because it has to be, but because the automation tools weren't smart enough to handle the judgment-adjacent work. They are now. The organizations that figure this out first get to ship faster while their competitors are still waiting for next week's CAB meeting.