How to Automate Candidate Screening with AI

Most recruiters will tell you they spend their time evaluating talent. The reality is they spend their time processing paperwork. Opening resumes, scanning for keywords, checking boxes, scheduling calls, sending rejections — the vast majority of screening work is clerical, not strategic. And it's eating your hiring pipeline alive.

Here's the good news: most of that clerical work can be automated today. Not with some vaporware "future of hiring" pitch, but with a practical AI agent you can build on OpenClaw and have running within a week.

This post walks through exactly how to do that — step by step, no hand-waving.

What Candidate Screening Actually Looks Like Right Now

Let's be honest about what the current workflow involves. If you're a recruiter or hiring manager at a company of any meaningful size, your screening process probably looks something like this:

Step 1: Application Intake. Candidates submit resumes through your careers page, job boards, LinkedIn, email, or some combination. These land in your ATS — Greenhouse, Lever, Workday, whatever you're using. Time: ongoing, but managing the intake across channels takes 30–60 minutes daily.

Step 2: First-Pass Resume Review. You open each resume and spend approximately 6–10 seconds deciding if it's worth a closer look. You're scanning for job titles, years of experience, education, relevant companies, and obvious red flags. For a role that receives 300 applications (which is normal for anything posted publicly), that's roughly 30–50 minutes of pure scanning. And that's the fast version.

Step 3: Screening Questions Review. If your ATS includes knockout questions — "Do you have 3+ years of experience with X?" — you're reviewing those answers. Another 15–30 minutes per batch.

Step 4: Phone Screens. For the candidates who pass the paper filter, you schedule 15–30 minute phone calls. You're verifying information, assessing communication skills, and checking basic qualification alignment. If you phone-screen 20 people for a role, that's 5–10 hours of calls plus scheduling overhead.

Step 5: Skills Assessment Review. If you're using platforms like HackerRank, TestGorilla, or take-home assignments, someone has to review or grade those. Time varies wildly, but for non-automated assessments, budget 15–30 minutes per candidate.

Step 6: Shortlist Creation and Handoff. You compile notes, rank candidates, and present a shortlist to the hiring manager. Another hour or two per role.

Add it all up, and recruiters spend somewhere between 23% and 40% of their total working time on screening and sourcing alone, according to LinkedIn's own data. The average time-to-fill sits at 36–44 days (SHRM 2023). For a single role. Multiply that across ten or twenty open positions, and you start to see why recruiting teams are perpetually underwater.

Why This Hurts More Than You Think

The time cost is obvious. The hidden costs are worse.

You're making bad decisions at speed. When you spend 7 seconds on a resume, you're not evaluating — you're pattern-matching based on heuristics. Those heuristics are riddled with bias. There's well-documented evidence that recruiters unconsciously penalize candidates based on names, school prestige, employment gaps, and non-linear career paths. You're not doing this on purpose. You're doing it because you have 300 resumes and four hours.

You're missing good candidates. Rigid keyword matching — which is what most ATS platforms do at scale — eliminates candidates who have the right skills but describe them differently. Someone who writes "revenue operations" instead of "sales ops" gets filtered out. A career changer with transferable skills never makes it past the first gate. The more you rely on crude automation, the more talent falls through the cracks.

You're slow, and it costs you. Top candidates are off the market in 10 days. If your screening process takes three weeks just to get to a phone call, you're losing the best people to companies that move faster. Every day of delay correlates with lower offer-acceptance rates.

You're burning money. The cost of a bad hire ranges from 30% of annual salary to 2.5x salary depending on the role and seniority. A significant portion of bad hires originate from rushed or inconsistent screening — the process that was supposed to prevent exactly that outcome.

In a 2022 ManpowerGroup survey, 62% of organizations cited "difficulty screening candidates" as a top recruitment challenge. Not sourcing. Not closing. Screening. The bottleneck isn't finding people. It's processing them.

What AI Can Actually Handle Today

Let's separate the real from the hype. Here's what AI — specifically, an agent built on OpenClaw — can reliably automate in candidate screening right now:

Structured data extraction. Modern NLP can pull experience, education, skills, certifications, and employment history from resumes with high accuracy, regardless of format. This isn't keyword matching. It's semantic understanding — the model knows that "managed a team of 12 engineers" implies both management experience and team leadership, even if neither phrase appears as a keyword.

Initial scoring and ranking. Given a job description and a stack of resumes, an AI agent can score each candidate on fit across multiple dimensions — skills match, experience level, industry relevance — and produce a ranked list. Not a binary yes/no, but a nuanced score with reasoning you can audit.

Screening question analysis. Open-ended screening responses (not just multiple choice) can be evaluated for substance, relevance, and quality. An agent can flag candidates whose answers are generic or copy-pasted versus those who demonstrate genuine knowledge.

Chatbot-based pre-screening. An AI agent can conduct asynchronous conversational screens — asking candidates about availability, salary expectations, visa status, specific experience — and capture structured responses without a recruiter lifting a finger.

Bias reduction. When configured correctly, AI screening can anonymize candidate data, removing names, photos, schools, and demographic indicators during the initial evaluation phase. This doesn't eliminate bias entirely, but it removes the most common vectors.
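To make the anonymization idea concrete, here is a minimal sketch that assumes the resume has already been parsed into a dictionary. The field names and the `anonymize` helper are hypothetical illustrations, not part of any OpenClaw API.

```python
import hashlib

# Hypothetical field names; adjust to whatever your parser emits.
REDACTED_FIELDS = {"name", "email", "phone", "photo_url", "date_of_birth"}


def anonymize(profile: dict) -> dict:
    """Return a copy of the profile with identifying fields removed.

    A stable pseudonymous ID is kept so the evaluation can be re-linked
    to the real candidate once the initial screen is done.
    """
    identity = f"{profile.get('name', '')}|{profile.get('email', '')}"
    candidate_id = hashlib.sha256(identity.encode("utf-8")).hexdigest()[:12]

    cleaned = {k: v for k, v in profile.items() if k not in REDACTED_FIELDS}
    # Keep the degree and field of study, drop the school name.
    cleaned["education"] = [
        {k: v for k, v in entry.items() if k != "school"}
        for entry in profile.get("education", [])
    ]
    cleaned["candidate_id"] = candidate_id
    return cleaned
```

Only the cleaned profile goes to the agent. The mapping from `candidate_id` back to the real person stays in your ATS.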

High-volume filtering. This is where the leverage is enormous. An AI agent can reduce 500 applications to 30–50 strong candidates in minutes, not days. And it does it consistently — the 500th resume gets the same evaluation rigor as the first.

How to Build This With OpenClaw: Step by Step

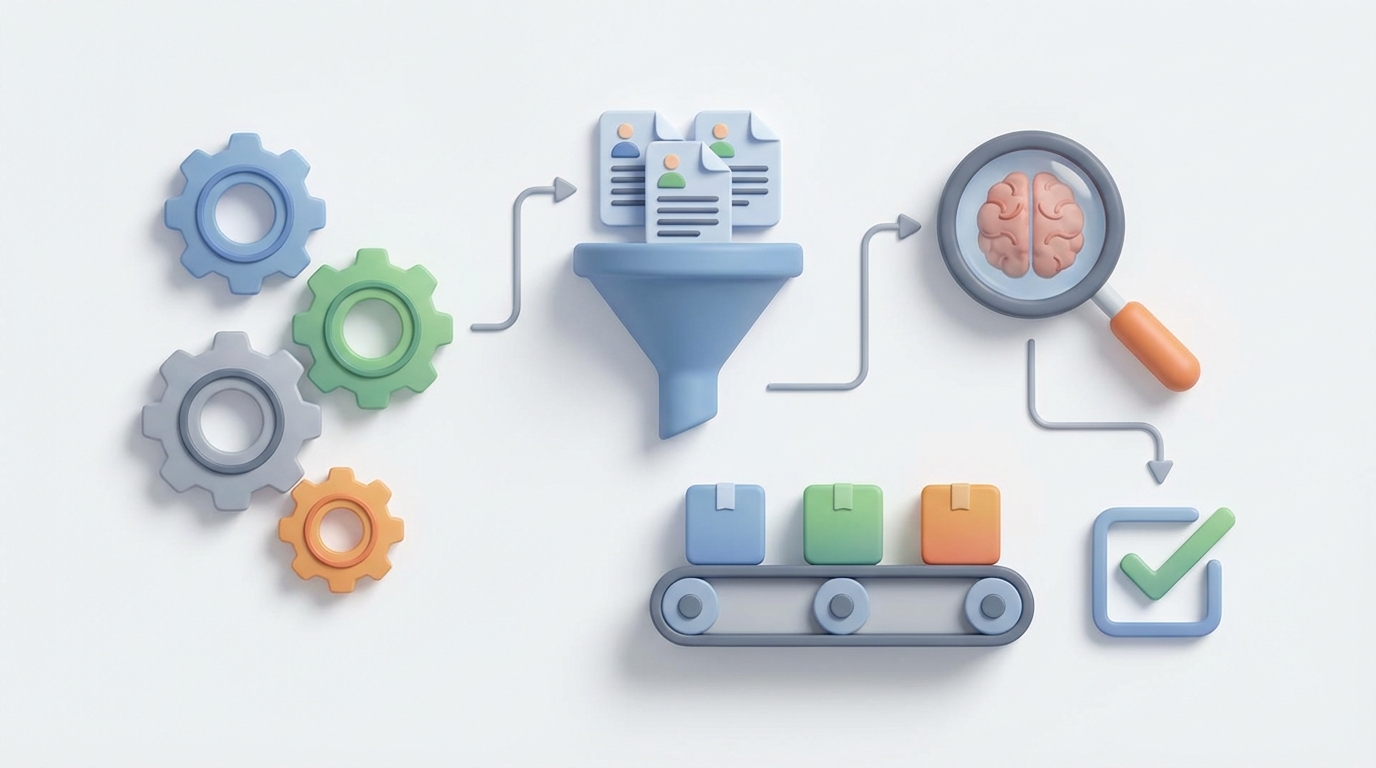

Here's the practical part. You're going to build an AI screening agent on OpenClaw that handles steps 1 through 3 of the workflow above and assists with step 4.

Step 1: Define Your Screening Criteria

Before you touch any technology, write down exactly what you're screening for. Be specific. Not "strong communicator" — that's an interview evaluation. Think:

- Minimum years of experience in [specific function]

- Required technical skills or certifications

- Industry experience (required vs. preferred)

- Location or timezone requirements

- Salary range alignment

- Specific knockout criteria (e.g., must have US work authorization)

This becomes the instruction set for your agent. The more precise you are here, the better the agent performs. Garbage criteria in, garbage screening out — same as with human recruiters, just faster.
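One way to force that precision is to write the criteria down as data before turning them into a prompt. The sketch below is an assumption about how you might structure them; the `ScreeningCriteria` class, its field names, and the example values are all illustrative.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class ScreeningCriteria:
    job_title: str
    min_years_experience: int
    required_skills: list[str] = field(default_factory=list)
    preferred_skills: list[str] = field(default_factory=list)
    allowed_timezones: list[str] = field(default_factory=list)
    salary_range: Optional[tuple[int, int]] = None
    knockouts: list[str] = field(default_factory=list)

    def validate(self) -> list[str]:
        """Return a list of problems that make the criteria unusable."""
        problems = []
        if not self.required_skills:
            problems.append("no required skills listed")
        if self.min_years_experience < 0:
            problems.append("minimum experience cannot be negative")
        if self.salary_range and self.salary_range[0] > self.salary_range[1]:
            problems.append("salary range is inverted")
        return problems


# Illustrative values only.
criteria = ScreeningCriteria(
    job_title="Revenue Operations Manager",
    min_years_experience=3,
    required_skills=["Salesforce administration", "pipeline forecasting"],
    knockouts=["US work authorization"],
)
```

Running `validate()` before you deploy catches the "garbage criteria" case early, while the list is still cheap to fix.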

Step 2: Set Up Your OpenClaw Agent

On OpenClaw, you'll create an agent with a system prompt that defines its role and evaluation framework. Here's a simplified version of what that looks like:

You are a candidate screening agent for [Company Name]. Your job is to evaluate
candidate applications against the following criteria for the role of [Job Title].

REQUIRED QUALIFICATIONS:
- [List your specific requirements]

PREFERRED QUALIFICATIONS:
- [List your preferred qualifications]

KNOCKOUT CRITERIA (automatic disqualification):
- [List absolute requirements]

For each candidate, provide:
1. A fit score from 1-10 with brief justification
2. Key strengths relevant to this role
3. Potential concerns or gaps
4. Recommended action: ADVANCE, MAYBE, or REJECT

Be specific in your reasoning. Do not penalize for employment gaps, non-traditional
education, or career changes unless they directly impact ability to perform the role.
Evaluate based on demonstrated skills and experience, not pedigree.

That last paragraph matters. You're building bias guardrails directly into the agent's instructions. This is where OpenClaw's configurability pays off — you control exactly how the agent evaluates, and you can iterate on these instructions as you see what works.
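If you screen for more than one role, you will probably want to generate this prompt rather than hand-edit it. The following is a rough sketch using plain string formatting; `build_system_prompt` and `bullets` are hypothetical helpers, and how you hand the resulting text to OpenClaw depends on your setup.

```python
def bullets(items: list[str]) -> str:
    """Format items as a dash-prefixed list, one per line."""
    return "\n".join(f"- {item}" for item in items) or "- (none specified)"


def build_system_prompt(
    company: str,
    job_title: str,
    required: list[str],
    preferred: list[str],
    knockouts: list[str],
) -> str:
    """Fill the screening prompt template with role-specific criteria."""
    return (
        f"You are a candidate screening agent for {company}. Your job is to "
        f"evaluate candidate applications against the following criteria for "
        f"the role of {job_title}.\n\n"
        f"REQUIRED QUALIFICATIONS:\n{bullets(required)}\n\n"
        f"PREFERRED QUALIFICATIONS:\n{bullets(preferred)}\n\n"
        f"KNOCKOUT CRITERIA (automatic disqualification):\n{bullets(knockouts)}\n\n"
        "For each candidate, provide:\n"
        "1. A fit score from 1-10 with brief justification\n"
        "2. Key strengths relevant to this role\n"
        "3. Potential concerns or gaps\n"
        "4. Recommended action: ADVANCE, MAYBE, or REJECT\n\n"
        "Be specific in your reasoning. Do not penalize for employment gaps, "
        "non-traditional education, or career changes unless they directly "
        "impact ability to perform the role. Evaluate based on demonstrated "
        "skills and experience, not pedigree."
    )
```

Keeping the bias guardrails in the fixed part of the template means every role inherits them by default.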

Step 3: Connect Your Data Sources

Your agent needs access to the actual applications. Depending on your setup, this means:

- ATS integration: Connect OpenClaw to your Greenhouse, Lever, or Workday instance via API. Most modern ATS platforms have REST APIs that can export candidate data in structured formats.

- Resume parsing pipeline: Feed resumes (PDF, DOCX) through OpenClaw's document processing. The agent extracts structured data and evaluates against your criteria.

- Email/form intake: If candidates apply via forms or email, route those submissions to your OpenClaw agent for processing.

The key architectural decision: do you want the agent to pull candidates on a schedule (batch processing) or evaluate them as they arrive (real-time)? For most companies, batch processing twice daily works well — you get near-real-time responsiveness without unnecessary complexity.
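For the batch option, the moving parts are small. The sketch below assumes a twice-daily schedule at 09:00 and 15:00 and uses two hypothetical callables, `fetch_since` (your ATS client) and `evaluate` (your screening agent). Neither is a real ATS or OpenClaw function.

```python
from datetime import datetime, timedelta

RUN_HOURS = (9, 15)  # assumed twice-daily schedule, local time


def next_run(now: datetime, run_hours=RUN_HOURS) -> datetime:
    """Return the first scheduled run strictly after `now`."""
    for day_offset in (0, 1):
        day = (now + timedelta(days=day_offset)).date()
        for hour in sorted(run_hours):
            candidate = datetime(day.year, day.month, day.day, hour)
            if candidate > now:
                return candidate
    raise ValueError("run_hours must not be empty")


def run_batch(fetch_since, evaluate, last_seen: datetime):
    """Evaluate every application received after `last_seen`.

    Returns the evaluations plus the newest timestamp seen, which becomes
    `last_seen` for the following batch.
    """
    applications = fetch_since(last_seen)
    results = [evaluate(app) for app in applications]
    newest = max((app["received_at"] for app in applications), default=last_seen)
    return results, newest
```

Tracking the newest timestamp rather than the wall-clock time of the run avoids skipping applications that arrive while a batch is in progress.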

Step 4: Build the Evaluation Pipeline

Your OpenClaw agent should process each candidate through a structured pipeline:

INTAKE → PARSE → SCREEN → SCORE → ROUTE

1. INTAKE: Receive candidate data (resume + application answers)
2. PARSE: Extract structured information (experience, skills, education, etc.)
3. SCREEN: Check against knockout criteria (immediate pass/fail)
4. SCORE: Evaluate fit across all criteria dimensions, generate reasoning
5. ROUTE: Sort into ADVANCE / MAYBE / REJECT with full evaluation notes

For the ADVANCE bucket, you can have the agent automatically trigger next steps — send a scheduling link for a phone screen, push the candidate to the next stage in your ATS, or notify the hiring manager.

For MAYBE candidates, route them to a human recruiter for a quick manual review. These are the edge cases where AI is uncertain, and a human can make a fast judgment call with the agent's notes as context.

For REJECT candidates, the agent can trigger a personalized rejection email. Not a form letter — OpenClaw can generate a brief, respectful message that acknowledges the specific role they applied for and wishes them well. This alone dramatically improves candidate experience.
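The five stages can be sketched as a single function. This is an illustration under stated assumptions: `parse` and `score_fn` stand in for the agent calls, and the score cut-offs (8 and 5) are placeholders I chose for the example, since in the prompt above the agent recommends the action itself.

```python
from dataclasses import dataclass
from typing import Callable

# Assumed cut-offs for turning a 1-10 score into a bucket; tune on your data.
ADVANCE_AT = 8
MAYBE_AT = 5


@dataclass
class Evaluation:
    candidate_id: str
    score: int
    reasoning: str
    action: str  # "ADVANCE", "MAYBE", or "REJECT"


def failed_knockouts(profile: dict, knockouts: dict[str, Callable[[dict], bool]]) -> list[str]:
    """SCREEN: return the names of knockout checks the candidate fails."""
    return [name for name, passes in knockouts.items() if not passes(profile)]


def route(score: int) -> str:
    """ROUTE: map a fit score to a bucket."""
    if score >= ADVANCE_AT:
        return "ADVANCE"
    if score >= MAYBE_AT:
        return "MAYBE"
    return "REJECT"


def run_pipeline(raw: dict, parse, score_fn, knockouts) -> Evaluation:
    """Run one candidate (INTAKE is the `raw` argument) through all stages."""
    profile = parse(raw)                            # PARSE
    failed = failed_knockouts(profile, knockouts)   # SCREEN
    if failed:
        reason = "Failed knockout criteria: " + ", ".join(failed)
        return Evaluation(profile["candidate_id"], 1, reason, "REJECT")
    score, reasoning = score_fn(profile)            # SCORE
    if not 1 <= score <= 10:
        raise ValueError(f"score out of range: {score}")
    return Evaluation(profile["candidate_id"], score, reasoning, route(score))  # ROUTE
```

Running the knockout checks before scoring keeps disqualified candidates from consuming agent calls, and the stored `reasoning` is what your recruiter reads when reviewing the MAYBE bucket.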

Step 5: Add Conversational Pre-Screening

For candidates in the ADVANCE bucket, you can deploy an OpenClaw-powered chatbot that handles the equivalent of a phone screen. The agent asks:

- Clarifying questions about their experience

- Availability and start date

- Salary expectations

- Logistical requirements (remote/hybrid/onsite, relocation)

- One or two role-specific questions that test genuine knowledge

The agent captures responses in a structured format and appends them to the candidate profile. Your recruiter gets a complete dossier — parsed resume, fit score, evaluation notes, and pre-screen responses — before they ever speak to the candidate.
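Here is a small sketch of what "structured format" could mean in practice. The question keys, the wording, and both helper functions are hypothetical; the point is that the chatbot works through a fixed set of keys and the dossier is assembled from the pieces you already have.

```python
from typing import Optional

# Hypothetical question set, keyed so answers can be stored as structured data.
PRE_SCREEN_QUESTIONS = {
    "start_date": "When could you start?",
    "salary_expectation": "What salary range are you targeting?",
    "work_arrangement": "Do you prefer remote, hybrid, or onsite work?",
    "relocation": "Would you need to relocate for this role?",
}


def next_question(answers: dict) -> Optional[str]:
    """Return the key of the first unanswered question, or None when done."""
    for key in PRE_SCREEN_QUESTIONS:
        if not str(answers.get(key, "")).strip():
            return key
    return None


def build_dossier(profile: dict, evaluation: dict, answers: dict) -> dict:
    """Combine the parsed resume, agent evaluation, and pre-screen answers."""
    return {
        "profile": profile,
        "fit_score": evaluation["score"],
        "evaluation_notes": evaluation["reasoning"],
        "pre_screen": {key: answers.get(key) for key in PRE_SCREEN_QUESTIONS},
        "pre_screen_complete": next_question(answers) is None,
    }
```

Because the conversation is asynchronous, `next_question` lets the agent pick up where the candidate left off instead of restarting the screen.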

This means when a human does get on the phone, they're spending time on high-value evaluation (soft skills, motivation, cultural alignment) rather than verifying basic information.

Step 6: Monitor, Audit, and Iterate

This is the step most people skip, and it's the one that determines whether your AI screening actually works long-term.

Set up a feedback loop: when candidates who were scored highly by the agent make it through interviews and get hired, that's a positive signal. When candidates the agent ranked highly bomb in interviews, that's a signal your criteria or prompts need adjustment.
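A simple number to watch is the share of agent-advanced candidates who go on to pass interviews. The sketch below assumes you can export outcome records with an `agent_action` and a `passed_interview` field; both names are illustrative.

```python
from typing import Optional


def advance_precision(outcomes: list[dict]) -> Optional[float]:
    """Share of agent-advanced candidates who went on to pass interviews.

    Records without a known interview result are ignored. Returns None when
    there is nothing to measure yet.
    """
    decided = [
        o for o in outcomes
        if o["agent_action"] == "ADVANCE" and o.get("passed_interview") is not None
    ]
    if not decided:
        return None
    return sum(1 for o in decided if o["passed_interview"]) / len(decided)
```

If this number drifts down after you change the prompt or criteria, that is your cue to review the agent's reasoning on the candidates who failed.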

On OpenClaw, you can review the agent's reasoning for every evaluation. This is crucial for two reasons:

- Bias auditing. Regularly check whether the agent is systematically disadvantaging any candidate group. If you notice patterns — say, lower scores for candidates from non-traditional education backgrounds despite your instructions — you can adjust the prompt and evaluation framework.

- Quality improvement. The agent's reasoning tells you why it scored someone a certain way. If the reasoning is weak or off-base, you know exactly what to fix.
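For the bias audit, one common starting point is to compare advance rates across groups and flag any group whose rate falls below four-fifths of the highest group's rate, a rule of thumb taken from US adverse-impact guidelines. The sketch assumes you hold voluntarily self-reported group labels separately from the data the agent sees; the record fields and the 0.8 default are assumptions for illustration.

```python
from collections import defaultdict


def advance_rates(records: list[dict]) -> dict:
    """Fraction of candidates in each group that the agent advanced."""
    totals = defaultdict(int)
    advanced = defaultdict(int)
    for record in records:
        totals[record["group"]] += 1
        if record["action"] == "ADVANCE":
            advanced[record["group"]] += 1
    return {group: advanced[group] / totals[group] for group in totals}


def impact_ratios(rates: dict) -> dict:
    """Each group's advance rate relative to the highest group's rate."""
    best = max(rates.values(), default=0.0)
    if best == 0:
        return {group: None for group in rates}
    return {group: rate / best for group, rate in rates.items()}


def flagged_groups(records: list[dict], threshold: float = 0.8) -> list[str]:
    """Groups whose impact ratio falls below the threshold."""
    ratios = impact_ratios(advance_rates(records))
    return sorted(g for g, r in ratios.items() if r is not None and r < threshold)
```

A flag is a prompt to read the agent's reasoning for that group's candidates, not a verdict on its own. With small samples the ratios will be noisy.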

What Still Needs a Human

Let me be direct: AI should not make your hiring decisions. It should make your hiring process dramatically more efficient so humans can make better decisions with more time and better information.

Here's what stays human:

Cultural and team fit. This requires understanding of team dynamics, interpersonal chemistry, and organizational values that AI doesn't have access to and can't reliably evaluate.

Soft skills and potential. Leadership style, emotional intelligence, creativity, and adaptability are best assessed in live conversation by trained interviewers.

Career story and motivation. Why someone made a career change, what they're really looking for, what drives them — these require empathetic human engagement.

Final hiring decisions. Especially for mid-level and senior roles, the stakes are too high and the variables too complex for anything other than informed human judgment.

Edge cases. Career changers, returning parents, people with non-traditional paths, candidates with unusual but exceptional backgrounds — these are exactly the people that rigid automation tends to filter out. A human reviewer, armed with the agent's notes, is the safety net.

The right model: AI handles volume, humans handle judgment. The AI agent takes you from 500 candidates to 30 qualified ones. Humans take those 30 to a final hire. Both steps are essential. Neither works well alone.

Expected Time and Cost Savings

Based on publicly available data from companies that have implemented AI screening at scale, here's what you can realistically expect:

Time savings:

- Resume screening time reduced by 75–85% (IBM reported 75%; Eightfold AI claims up to 85%)

- Overall time-to-hire reduced by 30–50% (Gartner 2023)

- Recruiter hours freed up: 15–20 hours per week for a recruiter managing 10+ open roles

Quality improvements:

- More consistent evaluation across all candidates

- Reduced bias in initial screening (when properly configured)

- Better candidate experience through faster response times

- Higher interview-to-offer ratios because the pipeline is cleaner

Cost savings:

- Fewer recruiter hours per hire

- Lower cost-per-hire (less agency reliance, less time waste)

- Reduced bad-hire rate (better screening = better long-term hires, and the cost of a bad hire is substantial)

Unilever's case is the most cited: they went from a 4-month hiring cycle to 4 weeks while increasing diversity in their hires. You may not hit those numbers immediately, but even a 30% improvement in screening efficiency pays for itself within the first month.

The Bottom Line

Candidate screening is a process that's 80% mechanical and 20% judgment. Right now, most companies force humans to do 100% of it, which means the mechanical work crowds out the judgment work. Your recruiters are spending their best hours on the lowest-value tasks.

An AI agent built on OpenClaw reverses that allocation. The agent handles the volume (parsing, scoring, ranking, pre-screening) and delivers curated, well-documented candidate packages to your team. Your recruiters spend their time where it actually matters: evaluating the people who are genuinely worth evaluating.

This isn't theoretical. The tools exist, the approach is proven, and the implementation is straightforward.

Ready to build your candidate screening agent? Browse Claw Mart for pre-built recruitment and screening agent templates, or start from scratch on OpenClaw if you want full control. If you'd rather have someone build it for you, check out Clawsourcing — post your project and let a vetted OpenClaw developer handle the implementation while you get back to actually hiring great people.