Automate Win-Loss Analysis: Build an AI Agent That Processes Closed Deals

Every quarter, somewhere in your company, a well-meaning RevOps person opens a spreadsheet, copies in a bunch of CRM fields from closed deals, sends a Slack message begging reps to fill out their loss reason notes, gets maybe half of them back, spends two weeks trying to find patterns, and then presents a slide deck to leadership that basically says "we lost deals because of price and timing." Leadership nods. Nothing changes. Repeat.

This is what passes for win-loss analysis at most companies. And it's a waste of everyone's time — not because the analysis doesn't matter (it matters enormously), but because the way we do it guarantees shallow, biased, late insights that rarely lead to action.

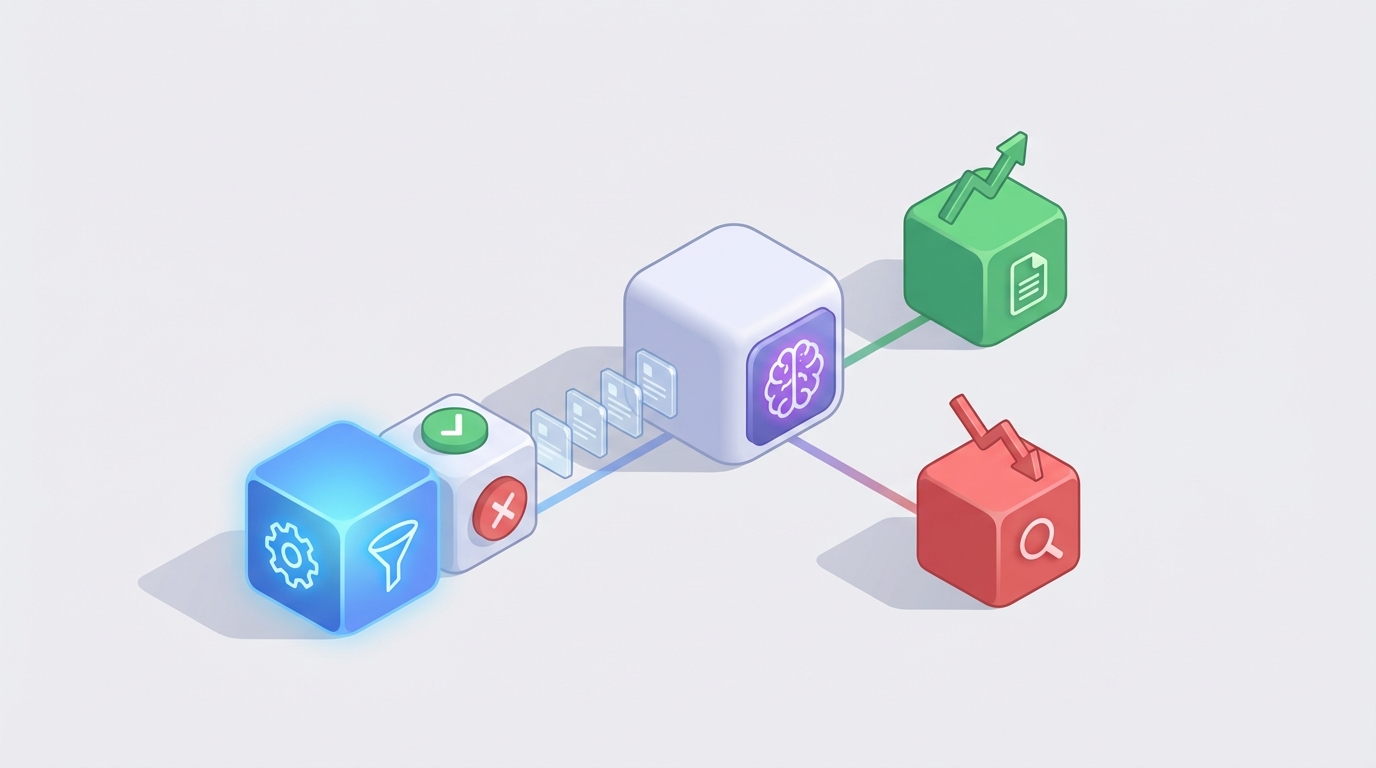

There's a better way. You can build an AI agent that automatically processes every closed deal, extracts real reasons from actual conversations and documents, categorizes them accurately, spots trends across hundreds of deals, and delivers insights while they're still fresh enough to act on. And you can do it on OpenClaw without needing a data science team.

Let me walk through exactly how.

The Manual Workflow Today (And Why It's Broken)

Let's be honest about what win-loss analysis actually looks like at most organizations. Here's the typical flow:

Step 1: Deal closes. A rep marks the opportunity as Closed-Won or Closed-Lost in Salesforce or HubSpot. This is the only reliable part of the process.

Step 2: Rep fills out reason fields. They pick from a dropdown ("Price," "Competitor," "Timing," "No Decision") and maybe write a sentence or two in a notes field. This takes 10–15 minutes if they do it at all. According to Gong's research, reps spend 40–75 minutes per lost deal on CRM administrative work, and completion rates still hover below 60%.

Step 3: Someone tries to collect more context. A sales manager or RevOps analyst schedules 1:1 debriefs with reps. Each takes 20–30 minutes. For strategic deals, someone might also pull call recordings, emails, or proposal docs. This is where most programs die — there just isn't enough time to do this for every deal.

Step 4: Customer interviews (maybe). The gold standard is interviewing the buyer directly, especially on losses. But response rates for loss interviews run 15–25%. Most companies skip this entirely or do it for fewer than 10% of deals.

Step 5: Data aggregation. Someone — usually the most junior person on the RevOps team — spends days copying data from CRM exports, interview notes, and spreadsheets into a single view. Cleaning, deduplication, and standardization eat up more time.

Step 6: Analysis and reporting. Quarterly (if you're lucky), someone looks for patterns, builds charts, and creates a deck. This process typically takes 4–8 weeks from start to finish.

Step 7: Action planning. Insights get presented. Product says "interesting." Marketing says "we'll look into it." Sales says "that's not what really happened." Six months have passed since the deals closed. The feedback loop is so slow it's barely a loop at all.

SiriusDecisions (now Forrester) found that companies with formal programs spend 6–12 hours per deal on deep analysis for strategic accounts. CSO Insights reports that only 37% of companies even have a formal win-loss program, and those that do typically analyze fewer than 30% of their losses.

So the best-case scenario is: you analyze a fraction of your deals, months after they close, using mostly self-reported data from the people with the most incentive to spin the narrative.

What Makes This So Painful

The costs here aren't abstract. They're specific and compounding.

The data quality problem is the worst one. When a rep says they lost on "price," what does that mean? Was the buyer genuinely budget-constrained? Did they see more value in a competitor's offering at a similar price point? Was the procurement team playing hardball while the champion actually wanted your product? Adobe discovered through proper analysis that "perceived implementation risk" was a much bigger loss factor than pricing in enterprise deals — but that nuance never showed up in dropdown menus.

HubSpot's 2023 Sales Report found that 68% of sales leaders say they don't have enough insight into why they lose deals. That's not a data collection problem. It's a data quality and analysis problem.

Bias kills accuracy. Reps overweight external factors and underweight things within their control. This is human nature, not laziness. But it means your raw data is systematically skewed.

The time lag makes insights stale. If you're presenting Q1 findings in late Q2, the competitive landscape may have already shifted. A product gap that cost you five deals in January might have been fixed in March, or it might have gotten worse — and you won't know until summer.

It doesn't scale. You might be able to do deep analysis on 20 strategic deals per quarter. But what about the other 200? The patterns hiding in your mid-market and SMB deals often carry the most valuable signal, simply because there are so many more of those deals to learn from.

The cost is real. A dedicated win-loss analyst costs $80K–$120K fully loaded. Third-party interview firms charge $2,000–$5,000 per completed interview. Even a modest program analyzing 50 deals per quarter with external interviews can run $100K–$200K annually.

Meanwhile, Forrester's 2026 research shows that companies with mature win-loss programs see 15–22% higher win rates within 12–18 months. The ROI is there — but most companies can't get past the operational burden to capture it.

What AI Can Actually Handle Now

Here's where I want to be precise, because the AI hype machine has made people either wildly optimistic or deeply skeptical. Let me break down what works today versus what still needs a human.

AI handles these well (80%+ accuracy with good data):

- Data extraction from unstructured sources. Pull loss reasons, competitor mentions, pricing discussions, feature requests, and objections from call transcripts, email threads, and CRM notes. LLMs are genuinely good at this now.

- Categorization and tagging. Classify extracted reasons into consistent taxonomies. Instead of 50 different ways reps describe "the product didn't integrate with their stack," you get a single clean tag.

- Pattern detection across deal populations. "Feature X was mentioned in 43% of losses to Competitor Y in the enterprise segment during Q3." This is exactly the kind of thing that's nearly impossible to spot manually across hundreds of deals but trivial for an agent processing structured data.

- Sentiment and confidence analysis. Detect whether a buyer was genuinely engaged or politely going through the motions. This surfaces in language patterns that AI picks up reliably.

- Automated report generation. First-draft summaries, trend charts, and executive briefs. Not perfect, but a dramatically better starting point than a blank slide deck.

- Anomaly flagging. Automatically identify deals that don't fit patterns and need human review — the outliers that often contain the most interesting insights.
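The pattern-detection bullet comes down to plain counting once each deal has been tagged. Below is a minimal sketch of how a figure like "Feature X was mentioned in 43% of losses to Competitor Y in the enterprise segment during Q3" could be computed. It assumes a hypothetical `AnalyzedDeal` record produced by the extraction step. The field names are illustrative, not an OpenClaw schema, and the LLM's job (deciding which features a deal mentions) is assumed to have already happened.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Set


@dataclass
class AnalyzedDeal:
    """Hypothetical shape of one deal after extraction and tagging."""
    outcome: str                     # "won" or "lost"
    competitor: Optional[str]        # competitor that won, on losses; else None
    segment: str                     # e.g. "enterprise", "mid-market"
    quarter: str                     # e.g. "Q3"
    features_mentioned: Set[str] = field(default_factory=set)


def feature_mention_rate(
    deals: List[AnalyzedDeal],
    feature: str,
    competitor: str,
    segment: str,
    quarter: str,
) -> Optional[float]:
    """Share of losses to `competitor` in one segment and quarter whose
    extracted conversations mention `feature`. None if there were no such losses."""
    losses = [
        d for d in deals
        if d.outcome == "lost"
        and d.competitor == competitor
        and d.segment == segment
        and d.quarter == quarter
    ]
    if not losses:
        return None
    hits = sum(1 for d in losses if feature in d.features_mentioned)
    return hits / len(losses)


if __name__ == "__main__":
    deals = (
        [AnalyzedDeal("lost", "Competitor Y", "enterprise", "Q3", {"Feature X"})] * 3
        + [AnalyzedDeal("lost", "Competitor Y", "enterprise", "Q3", {"SSO"})] * 4
        + [AnalyzedDeal("won", None, "enterprise", "Q3", {"Feature X"})] * 5
    )
    rate = feature_mention_rate(deals, "Feature X", "Competitor Y", "enterprise", "Q3")
    print(f"Feature X mentioned in {rate:.0%} of enterprise Q3 losses to Competitor Y")
```

Returning `None` instead of `0.0` when there are no matching losses keeps "never came up" distinct from "no data for this slice."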

AI still struggles with (keep humans here):

- Understanding political dynamics and unstated motivations. Why the CFO really killed the deal. The personal relationship between the buyer and a competitor's account exec.

- Strategic interpretation. Knowing which loss trends represent existential competitive threats versus temporary blips.

- High-stakes customer interviews. Building trust, reading body language, following up on surprising answers. Automated outreach can supplement this but can't replace it for important accounts.

- Cross-functional action planning. Deciding whether to change the product roadmap, retrain the sales team, or adjust positioning. This requires organizational context no model has.

The ratio is roughly 80/20. AI handles 80% of the mechanical work; humans focus on the 20% that requires judgment and relationships. That's not a compromise — it's the optimal setup.

Step-by-Step: Building the Win-Loss Agent on OpenClaw

Here's how to actually build this. I'm going to walk through the architecture, the key components, and how OpenClaw makes each piece work.

Step 1: Define the Trigger

Your agent needs to activate when a deal closes. In OpenClaw, you set up a trigger that listens for CRM status changes.

```yaml
trigger:
  source: salesforce_webhook
  event: opportunity_stage_change
  conditions:
    - field: StageName
      value_in: ["Closed Won", "Closed Lost"]
```

This fires every time a deal moves to a terminal stage. No manual initiation, no "remember to submit the form." Every single deal gets processed.
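The trigger's condition amounts to a small predicate. Below is a minimal sketch that assumes a hypothetical flattened webhook payload with `event`, `StageName`, and `PreviousStageName` keys. Real Salesforce or HubSpot payloads are shaped differently, so treat the key names as placeholders.

```python
from typing import Any, Mapping

# Stage names from the trigger config; adjust if your org renamed them.
TERMINAL_STAGES = {"Closed Won", "Closed Lost"}


def should_process(event: Mapping[str, Any]) -> bool:
    """Decide whether a CRM webhook event should start the win-loss pipeline.

    Assumes a hypothetical payload with `event`, `StageName`, and
    `PreviousStageName` keys; real webhook payloads differ by tool.
    """
    if event.get("event") != "opportunity_stage_change":
        return False
    new_stage = event.get("StageName")
    old_stage = event.get("PreviousStageName")
    # Fire on the move *into* a terminal stage, so re-saving an already
    # closed opportunity does not queue the same deal twice.
    return new_stage in TERMINAL_STAGES and old_stage not in TERMINAL_STAGES


if __name__ == "__main__":
    print(should_process({
        "event": "opportunity_stage_change",
        "StageName": "Closed Lost",
        "PreviousStageName": "Negotiation",
    }))
```

Checking the previous stage is a design choice added here, not part of the config above. It means a correction from Closed Won to Closed Lost will not re-fire, so handle that case separately if your reps edit outcomes after the fact.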

Step 2: Data Collection Agent

This is the first agent in your pipeline. Its job is to gather everything relevant to the deal from every available source. On OpenClaw, you configure it to pull from multiple integrations:

```yaml
agent: deal_data_collector
description: >
  Collect all relevant data for a closed deal from CRM,
  email, call recordings, and document storage.
data_sources:
  - salesforce:
      objects: [Opportunity, Contact, Activity, Note]
      lookback_days: 180
  - gong_or_call_recorder:
      filter: opportunity_id
  - email_integration:
      filter: contact_emails_on_deal
  - google_drive:
      filter: proposal_docs_tagged_to_deal
output:
  format: structured_json
  fields:
    - deal_metadata
    - all_call_transcripts
    - email_threads
    - proposal_versions
    - crm_notes_and_activities
    - rep_reported_reasons
```

The key insight here: you're not relying on what the rep typed into a dropdown. You're pulling the actual conversations, the actual emails, and the actual documents. The truth lives in the artifacts, not in the self-report.
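To make the collector's output concrete, here is a minimal sketch of the structured record it might emit, with one field per entry in the config's `fields` list and the 180-day lookback applied to CRM activity. Fetching from Salesforce, Gong, email, and Drive is deliberately left out. `DealBundle`, `build_bundle`, and the `(timestamp, text)` activity format are hypothetical names for illustration only.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timedelta
from typing import Dict, List, Tuple

LOOKBACK_DAYS = 180  # mirrors lookback_days in the collector config


@dataclass
class DealBundle:
    """One record per closed deal, using the field names from the config's output list."""
    deal_metadata: Dict[str, str]
    all_call_transcripts: List[str] = field(default_factory=list)
    email_threads: List[str] = field(default_factory=list)
    proposal_versions: List[str] = field(default_factory=list)
    crm_notes_and_activities: List[str] = field(default_factory=list)
    rep_reported_reasons: List[str] = field(default_factory=list)


def within_lookback(ts: datetime, close_date: datetime, days: int = LOOKBACK_DAYS) -> bool:
    """True if `ts` falls in the window [close_date - days, close_date]."""
    return close_date - timedelta(days=days) <= ts <= close_date


def build_bundle(
    metadata: Dict[str, str],
    crm_activities: List[Tuple[datetime, str]],
    close_date: datetime,
    **other_sources: List[str],
) -> DealBundle:
    """Merge already-fetched source data into one bundle.

    `crm_activities` holds (timestamp, text) pairs; anything outside the
    lookback window is dropped. `other_sources` keys must match DealBundle
    field names, e.g. all_call_transcripts=[...].
    """
    recent = [text for ts, text in crm_activities if within_lookback(ts, close_date)]
    return DealBundle(deal_metadata=metadata, crm_notes_and_activities=recent, **other_sources)


def to_structured_json(bundle: DealBundle) -> str:
    """Serialize the bundle for the analysis agent."""
    return json.dumps(asdict(bundle), indent=2)


if __name__ == "__main__":
    bundle = build_bundle(
        {"opportunity_id": "006-demo", "stage": "Closed Lost"},
        [(datetime(2024, 6, 1), "Demo call notes"), (datetime(2023, 1, 5), "Stale note")],
        close_date=datetime(2024, 6, 30),
        all_call_transcripts=["Buyer: the rollout plan worries us..."],
        rep_reported_reasons=["Price"],
    )
    print(to_structured_json(bundle))
```

Keeping `rep_reported_reasons` as its own field, next to the raw artifacts, lets the analysis step compare what the rep said against what the conversations show.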

Step 3: Analysis Agent

This is the core of the system. It takes the collected data and extracts structured insights. OpenClaw lets you define exactly what you want the agent to identify:

```yaml
agent: win_loss_analyzer
description: >
  Analyze collected deal data to extract win/loss reasons,
  competitive intelligence, and actionable insights.
analysis_framework:
  primary_categories:
    - pricing_and_commercial_terms
    - product_capabilities_and_gaps
    - competitive_positioning
    - sales_execution
    - buyer_timing_and_readiness
    - implementation_and_risk_concerns
    - relationship_and_trust_factors
  for_each_category:
    - evidence_quotes: "Direct quotes from transcripts or emails"
    - confidence_score: "0.0 to 1.0 based on evidence strength"
    - source_attribution: "Which data source(s) support this"
  additional_extraction:
    - competitors_mentioned
    - features_requested_or_critiqued
    - stakeholders_and_their_positions
    - decision_timeline_vs_actual_timeline
    - price_points_discussed
output:
  format: structured_analysis
  flag_for_human_review:
    - confidence_below: 0.6
    - deal_value_above: 100000
    - loss_to_new_competitor: true
```

Notice the confidence scoring. The agent doesn't just say "this deal was lost on price." It says "pricing was mentioned as a concern in 3 of 7 calls with a confidence of 0.82, but implementation risk appeared in 5 of 7 calls with a confidence of 0.91." That changes the story entirely.
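The three `flag_for_human_review` rules can be expressed as a short function. This is a minimal sketch with two assumptions. First, `confidence_below` is read as applying to the deal's strongest finding rather than to every category, since weak secondary categories are expected. Second, "new competitor" means one absent from a list you maintain. `CategoryFinding`, `DealAnalysis`, and `review_flags` are hypothetical names. The usage example reuses the pricing-versus-implementation-risk deal described above.

```python
from dataclasses import dataclass
from typing import Iterable, List, Optional


@dataclass
class CategoryFinding:
    category: str         # one of the primary_categories
    confidence: float     # 0.0 to 1.0, as in the analysis framework
    mentions: int         # calls where the category came up
    calls_reviewed: int


@dataclass
class DealAnalysis:
    deal_value: float
    outcome: str                  # "won" or "lost"
    competitor: Optional[str]     # competitor that won, if known
    findings: List[CategoryFinding]


def primary_reason(analysis: DealAnalysis) -> Optional[CategoryFinding]:
    """The finding with the strongest evidence, or None if nothing was extracted."""
    return max(analysis.findings, key=lambda f: f.confidence, default=None)


def review_flags(
    analysis: DealAnalysis,
    known_competitors: Iterable[str],
    confidence_below: float = 0.6,
    deal_value_above: float = 100_000,
) -> List[str]:
    """Return the reasons (if any) a deal should go to a human reviewer."""
    flags = []
    top = primary_reason(analysis)
    if top is None or top.confidence < confidence_below:
        flags.append("low_confidence")
    if analysis.deal_value > deal_value_above:
        flags.append("high_value")
    if (analysis.outcome == "lost" and analysis.competitor
            and analysis.competitor not in set(known_competitors)):
        flags.append("loss_to_new_competitor")
    return flags


if __name__ == "__main__":
    deal = DealAnalysis(
        deal_value=250_000,
        outcome="lost",
        competitor="Competitor Y",
        findings=[
            CategoryFinding("pricing_and_commercial_terms", 0.82, 3, 7),
            CategoryFinding("implementation_and_risk_concerns", 0.91, 5, 7),
        ],
    )
    top = primary_reason(deal)
    print(top.category, f"{top.mentions} of {top.calls_reviewed} calls")
    print(review_flags(deal, known_competitors=["Competitor Y"]))
```

Returning a list of reasons rather than a single boolean tells the reviewer *why* a deal landed in their queue.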

Step 4: Trend Aggregation Agent

Individual deal analysis is valuable. But the real power is in patterns across your entire deal population. This agent runs on a schedule (weekly or monthly) and synthesizes across all analyzed deals:

```yaml
agent: trend_aggregator
schedule: weekly_monday_0800
scope: all_deals_analyzed_in_period
analysis:
  - win_rate_by_loss_reason_category
  - loss_reason_trends_over_time
  - competitor_specific_win_loss_patterns
  - segment_specific_patterns:
      dimensions: [deal_size, industry, region, sales_rep]
  - feature_gap_frequency_and_impact
  - correlation_analysis:
      - sales_cycle_length_vs_outcome
      - number_of_stakeholders_vs_outcome
      - competitor_involvement_vs_outcome
output:
  - executive_summary: markdown
  - detailed_report: structured_json
  - alerts:
      - significant_trend_changes
      - emerging_competitor_threats
      - product_gap_acceleration
```

This is the agent that produces the "we're suddenly losing 3x more often to Competitor Z in the mid-market, and the primary driver is their new integration with Tool X" insight that would take a human analyst weeks to uncover — if they spotted it at all.
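A "losing 3x more often to Competitor Z in the mid-market" alert can be approximated by comparing each competitor's share of a segment's closed deals across two periods. This is a minimal sketch. `ClosedDeal`, `competitor_trend_alerts`, and the default thresholds (`min_ratio=2.0`, `min_deals=10`) are hypothetical choices, not OpenClaw settings. It also applies no statistical test. A production version would probably want a proportion test or confidence interval before alerting on small samples.

```python
from typing import List, NamedTuple, Optional


class ClosedDeal(NamedTuple):
    period: str                  # e.g. "Q1"
    segment: str                 # e.g. "mid-market"
    outcome: str                 # "won" or "lost"
    competitor: Optional[str]    # competitor that won, on losses


class TrendAlert(NamedTuple):
    segment: str
    competitor: str
    share_before: float
    share_now: float


def loss_share(deals: List[ClosedDeal], period: str, segment: str, competitor: str) -> float:
    """Fraction of a segment's closed deals in `period` that were lost to `competitor`."""
    scope = [d for d in deals if d.period == period and d.segment == segment]
    if not scope:
        return 0.0
    lost = sum(1 for d in scope if d.outcome == "lost" and d.competitor == competitor)
    return lost / len(scope)


def competitor_trend_alerts(
    deals: List[ClosedDeal],
    previous: str,
    current: str,
    min_ratio: float = 2.0,
    min_deals: int = 10,
) -> List[TrendAlert]:
    """Flag (segment, competitor) pairs whose loss share jumped period over period."""
    pairs = {(d.segment, d.competitor) for d in deals
             if d.outcome == "lost" and d.competitor}
    alerts = []
    for segment, competitor in sorted(pairs):
        n_current = sum(1 for d in deals if d.period == current and d.segment == segment)
        if n_current < min_deals:
            continue  # too few deals this period to call it a trend
        before = loss_share(deals, previous, segment, competitor)
        now = loss_share(deals, current, segment, competitor)
        # A competitor with no losses last period counts as an emerging threat.
        if now > 0 and (before == 0 or now / before >= min_ratio):
            alerts.append(TrendAlert(segment, competitor, before, now))
    return alerts


if __name__ == "__main__":
    deals = (
        [ClosedDeal("Q1", "mid-market", "lost", "Competitor Z")] * 2
        + [ClosedDeal("Q1", "mid-market", "won", None)] * 18
        + [ClosedDeal("Q2", "mid-market", "lost", "Competitor Z")] * 6
        + [ClosedDeal("Q2", "mid-market", "won", None)] * 14
    )
    for alert in competitor_trend_alerts(deals, "Q1", "Q2"):
        print(alert)
```

Normalizing by the segment's total closed deals, rather than counting raw losses, keeps a busy quarter from looking like a competitive surge.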

Step 5: Distribution and Action

Insights that live in a database don't help anyone. The final piece routes findings to the right people in the right format:

```yaml
agent: insight_distributor
triggers:
  - new_deal_analysis_complete
  - weekly_trend_report_ready
  - critical_alert_detected
routing:
  - sales_leadership:
      channel: slack_channel_sales_leadership
      content: executive_summary + key_alerts
  - product_team:
      channel: slack_channel_product
      content: feature_gaps + customer_quotes
  - marketing:
      channel: email
      content: competitive_positioning_insights
  - individual_reps:
      channel: slack_dm
      content: personalized_deal_feedback
escalation:
  - critical_competitive_threat:
      notify: vp_sales + cpo
      urgency: immediate
```

The whole pipeline — from deal closure to insight delivery — runs without anyone opening a spreadsheet. On OpenClaw, each of these agents is a discrete, testable component. You can iterate on the analysis framework, add new data sources, or adjust routing without rebuilding the whole system.
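The routing table is plain data, and one way to keep it testable is to build an outbox of `(destination, message)` pairs and leave actual sending to your Slack and email integrations. Below is a minimal sketch under that assumption. `ROUTING`, `ESCALATION`, `route`, and the `urgent:` destination prefix are hypothetical. Per-rep DMs are omitted because they would need a rep-to-feedback mapping.

```python
from typing import Dict, List, Tuple

# Audience -> (channel, report sections), mirroring the routing config above.
ROUTING: Dict[str, Tuple[str, List[str]]] = {
    "sales_leadership": ("slack_channel_sales_leadership", ["executive_summary", "key_alerts"]),
    "product_team": ("slack_channel_product", ["feature_gaps", "customer_quotes"]),
    "marketing": ("email", ["competitive_positioning_insights"]),
}

# Alert type -> people to notify immediately.
ESCALATION: Dict[str, List[str]] = {
    "critical_competitive_threat": ["vp_sales", "cpo"],
}


def route(sections: Dict[str, str], alerts: List[str]) -> List[Tuple[str, str]]:
    """Build (destination, message) pairs; sending them is left to your integrations."""
    outbox: List[Tuple[str, str]] = []
    for channel, keys in ROUTING.values():
        parts = [sections[k] for k in keys if k in sections]
        if parts:  # skip audiences with nothing new this run
            outbox.append((channel, "\n\n".join(parts)))
    for alert in alerts:
        for person in ESCALATION.get(alert, []):
            outbox.append((f"urgent:{person}", alert))
    return outbox


if __name__ == "__main__":
    report = {
        "executive_summary": "Implementation risk up in enterprise losses.",
        "key_alerts": "Competitor Z share doubled in mid-market.",
        "feature_gaps": "Tool X integration requested in 6 losses.",
    }
    for destination, message in route(report, ["critical_competitive_threat"]):
        print(destination, "->", message.replace("\n\n", " | "))
```

Because `route` has no side effects, you can change routing rules and check the resulting outbox without posting anything to a real channel.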

Step 6: Feedback Loop

This is the piece most people forget. You need a mechanism for humans to correct the agent's analysis so it improves over time:

```yaml
agent: feedback_collector
trigger: human_review_complete
actions:
  - compare_agent_analysis_to_human_corrections
  - log_disagreements_with_context
  - update_analysis_prompts_monthly
  - track_accuracy_metrics:
      - category_accuracy
      - confidence_calibration
      - false_positive_rate
```

Over time, your agent gets better at understanding your specific competitive landscape, your product's strengths and weaknesses, and the language your buyers use. This is where the compounding value kicks in.
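The three accuracy metrics in `track_accuracy_metrics` can be computed directly from reviewed deals. The sketch below assumes a hypothetical `ReviewedDeal` record that pairs the agent's output with the human correction. It uses expected calibration error (ECE), a standard calibration measure, as one concrete reading of "confidence_calibration". It computes the false positive rate in the textbook sense, FP / (FP + TN), over every deal-category pair the reviewer marked as absent.

```python
from dataclasses import dataclass, field
from typing import List, Set

# The seven primary categories from the analysis framework.
CATEGORIES = {
    "pricing_and_commercial_terms",
    "product_capabilities_and_gaps",
    "competitive_positioning",
    "sales_execution",
    "buyer_timing_and_readiness",
    "implementation_and_risk_concerns",
    "relationship_and_trust_factors",
}


@dataclass
class ReviewedDeal:
    """Agent output next to the human reviewer's correction (hypothetical record)."""
    agent_primary: str
    agent_confidence: float
    human_primary: str
    agent_tags: Set[str] = field(default_factory=set)
    human_tags: Set[str] = field(default_factory=set)


def category_accuracy(reviews: List[ReviewedDeal]) -> float:
    """Share of deals where the agent's primary category matched the human's."""
    return sum(r.agent_primary == r.human_primary for r in reviews) / len(reviews)


def expected_calibration_error(reviews: List[ReviewedDeal], n_bins: int = 5) -> float:
    """Gap between stated confidence and observed accuracy, weighted by bin size."""
    bins: List[List[ReviewedDeal]] = [[] for _ in range(n_bins)]
    for r in reviews:
        idx = min(int(r.agent_confidence * n_bins), n_bins - 1)
        bins[idx].append(r)
    ece = 0.0
    for b in bins:
        if not b:
            continue
        accuracy = sum(r.agent_primary == r.human_primary for r in b) / len(b)
        confidence = sum(r.agent_confidence for r in b) / len(b)
        ece += abs(accuracy - confidence) * len(b) / len(reviews)
    return ece


def false_positive_rate(reviews: List[ReviewedDeal]) -> float:
    """FP / (FP + TN) over every (deal, category) pair the human marked absent."""
    fp = tn = 0
    for r in reviews:
        for c in CATEGORIES - r.human_tags:
            if c in r.agent_tags:
                fp += 1
            else:
                tn += 1
    return fp / (fp + tn) if fp + tn else 0.0
```

A well-calibrated agent has an ECE near zero, which means that deals it scores at 0.8 really are right about 80% of the time. That property is what makes the 0.6 review threshold meaningful.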

What Still Needs a Human

I want to be direct about this because overselling AI capabilities is how automation projects fail.

Keep humans in the loop for:

- Strategic deal reviews. Your top 10% of deals by value should still get human attention. Use the agent's analysis as a starting point, not a final answer.

- Customer interviews. For key accounts, especially losses to strategic competitors, direct buyer conversations are irreplaceable. The agent can identify which customers to interview and what to ask them, and that alone saves hours.

- Action planning. The agent tells you "implementation risk is the #1 loss driver in enterprise deals, up 40% quarter over quarter." A human decides whether to invest in professional services, build better onboarding tooling, or create new sales collateral addressing risk.

- Validation of surprising findings. When the agent flags something unexpected (a new competitor, a sudden shift in loss reasons, a rep-specific pattern), a human should investigate before acting.

- Political and organizational context. The agent doesn't know that your VP of Product is already building the feature that keeps showing up in losses, or that the competitor winning in EMEA is about to be acquired.

Expected Time and Cost Savings

Based on what companies report after implementing AI-augmented win-loss programs (and what the data from Eigen Technologies, Forrester, and Gong case studies supports):

| Metric | Manual Process | With AI Agent | Improvement |
|---|---|---|---|
| Deals analyzed per quarter | 20–40 | Every closed deal | 5–10x more coverage |
| Time per deal analysis | 6–12 hours | 15–30 minutes (mostly automated) | ~95% reduction |
| Time from deal close to insight | 4–8 weeks | 24–48 hours | ~90% faster |
| Analysis accuracy (vs. customer interviews) | ~45% (self-reported) | ~80% (multi-source AI) | ~75% improvement |
| Analyst time on data collection | 60–70% of role | 10–15% of role | Freed for strategic work |
| Annual program cost (mid-market) | $150K–$250K | $40K–$80K | 50–70% reduction |

The mid-market SaaS case study from Eigen Technologies in 2023 is representative: they went from analyzing ~40 deals per quarter manually to analyzing every closed deal, with a 70% reduction in total analysis time. Their analysts shifted from data janitorial work to strategic interpretation and cross-functional action planning.

Forrester's data point is the one that matters most for making the business case: companies with mature win-loss programs see 15–22% higher win rates within 12–18 months. If your annual revenue is $20M, even a 5% relative improvement in win rate is worth about $1M a year (seven figures), assuming pipeline volume and deal sizes hold steady. Against a program cost of $40K–$80K, the agent pays for itself in the first quarter.
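The business-case arithmetic is worth making explicit, because "a 5% win rate improvement" can mean two very different things. The minimal sketch below assumes a *relative* lift (for example, a 20% win rate becoming 21%). It also assumes that revenue scales linearly with win rate at constant pipeline and deal size, which is a simplification. The function names are illustrative.

```python
def added_annual_revenue(annual_revenue: float, relative_lift: float) -> float:
    """Extra revenue from a relative win-rate lift.

    Assumes pipeline volume and deal sizes stay constant, so closed revenue
    scales linearly with win rate (a simplification).
    """
    return annual_revenue * relative_lift


def payback_months(annual_program_cost: float, annual_gain: float) -> float:
    """Months of added revenue needed to cover one year of program cost."""
    return annual_program_cost / (annual_gain / 12)


if __name__ == "__main__":
    gain = added_annual_revenue(20_000_000, 0.05)
    print(f"Added revenue: ${gain:,.0f} per year")
    for cost in (40_000, 80_000):  # annual program cost range from the table
        print(f"${cost:,} program pays back in {payback_months(cost, gain):.1f} months")
```

If the lift is read instead as 5 percentage points (say, 20% to 25%, a 25% relative lift), the same formula gives roughly five times the figure. The relative reading is the conservative one.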

Get Started

You can browse pre-built win-loss analysis agents and components in the Claw Mart marketplace, or build your own from scratch on OpenClaw. Either way, the building blocks are there: CRM integrations, transcript analysis agents, categorization frameworks, and reporting templates.

If you'd rather not build it yourself, post your project on Clawsourcing and let a specialist build and configure the entire pipeline for you. Most win-loss automation projects scope out at 2–4 weeks from kickoff to first automated analysis. That's faster than one cycle of your current manual process.

Stop analyzing 30% of your losses three months after they happen. Start analyzing all of them within 48 hours. The data is already there. You just need an agent to actually use it.