Loop Architecture: Build Self-Improving AI Agents

Decision & Execution Stack with OODA + Ralph loops for AI agents

Most AI agents suck because they think once and then pray.

You give them a task, they take one swing at it, and whatever comes out the other end is what you get. No reflection, no course correction, no second attempt. It's the equivalent of trying to parallel park by closing your eyes, turning the wheel, and hoping for the best.

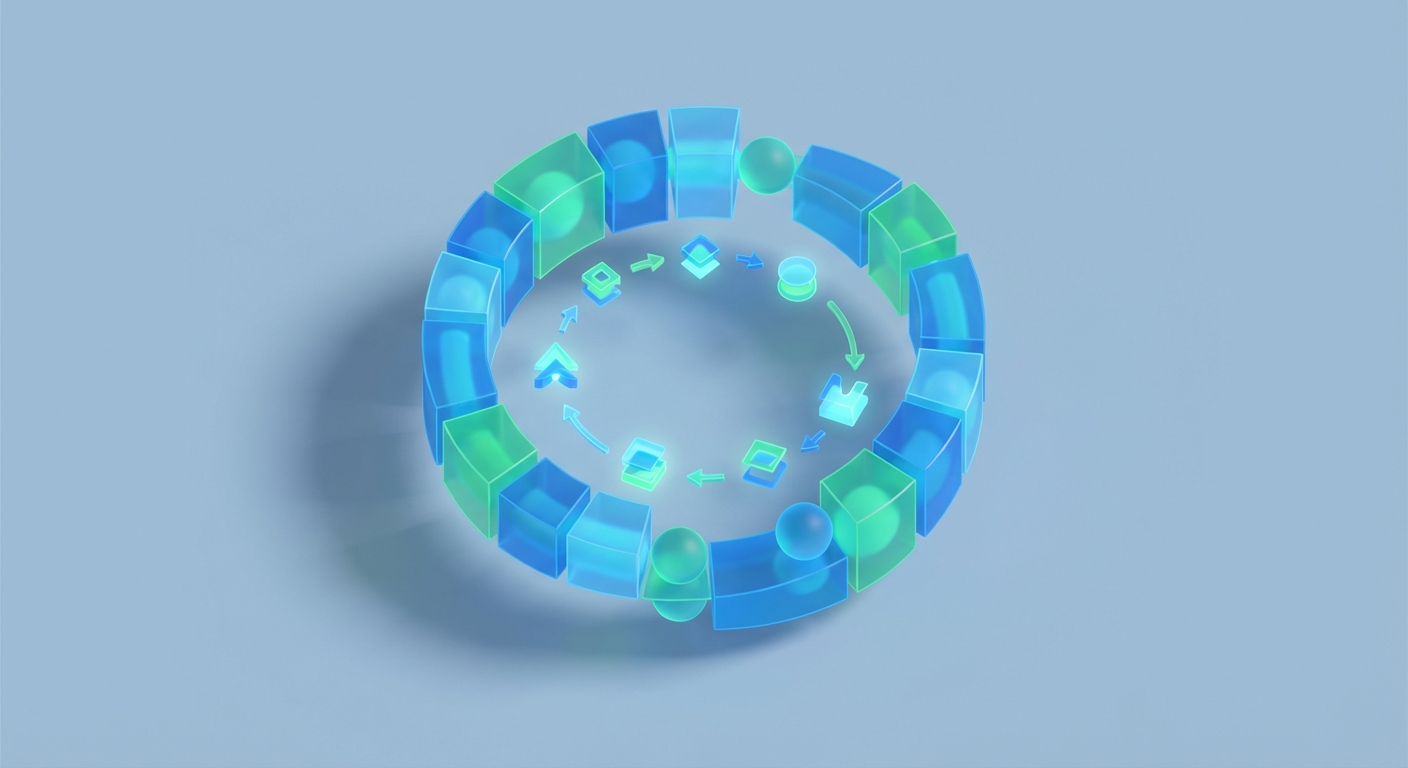

Loop architecture fixes this. It's the difference between a bot that guesses and a bot that thinks, acts, checks, and thinks again — over and over until the job is actually done. It's the pattern behind every AI agent that does something genuinely useful in the real world, and once you understand it, you'll never build a one-shot prompt chain again.

Let's break the whole thing down: what loop architecture actually is, how OODA loops give your agent a strategic brain, how Ralph execution handles the tactical grunt work, and how to wire it all together in OpenClaw so you can build agents that actually perform.

What Loop Architecture Actually Is

Loop architecture is exactly what it sounds like: your agent runs in a loop. Instead of a linear pipeline where input goes in one end and output comes out the other, the agent cycles through phases repeatedly until it achieves its goal or hits a defined stopping point.

The phases, at the most fundamental level, look like this:

- Perceive — Take in information from the environment. User input, tool outputs, API responses, memory, whatever.

- Reason — Process that information. Think about what it means, what's changed, what the current state of the task is.

- Act — Do something. Call a tool, generate output, make an API request, update memory.

- Reflect — Evaluate the result. Did that work? Are we closer to the goal? What should change?

Then it loops back to Perceive with new information from the action it just took. The cycle continues until the task is complete or a maximum iteration count is reached (because you don't want a rogue agent burning through your API credits at 3 AM running in circles).
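Without any framework, that cycle is just a bounded loop. The sketch below uses a toy task (counting up to a target) so it runs on its own. `perceive`, `reason`, `act`, and `reflect` are hypothetical stand-ins for a memory read, an LLM call, a tool call, and an evaluation step.

```python
from dataclasses import dataclass, field


@dataclass
class AgentState:
    goal: int                                    # toy goal: reach this number
    value: int = 0                               # current state of the "task"
    history: list = field(default_factory=list)  # (iteration, decision, value)


def perceive(state: AgentState) -> dict:
    # Gather what the agent currently knows.
    return {"value": state.value, "goal": state.goal}


def reason(observation: dict) -> str:
    # Stand-in for an LLM call: decide what to do next.
    return "increment" if observation["value"] < observation["goal"] else "stop"


def act(state: AgentState, decision: str) -> None:
    # Stand-in for a tool call that changes the environment.
    if decision == "increment":
        state.value += 1


def reflect(state: AgentState) -> bool:
    # Evaluate the result: is the goal reached?
    return state.value >= state.goal


def run_loop(state: AgentState, max_iterations: int = 10) -> tuple:
    for iteration in range(1, max_iterations + 1):
        observation = perceive(state)
        decision = reason(observation)
        act(state, decision)
        state.history.append((iteration, decision, state.value))
        if reflect(state):
            return True, iteration       # task complete
    return False, max_iterations         # stopped by the iteration cap


print(run_loop(AgentState(goal=3)))                      # (True, 3)
print(run_loop(AgentState(goal=50), max_iterations=10))  # (False, 10)
```

The `max_iterations` cap is what turns a runaway loop into a clean, loggable failure.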

This is how humans actually solve problems. You don't sit down to write a research report by producing the final draft in one pass. You gather sources, read them, draft something, realize it's wrong, go find better sources, rewrite, check your logic, and iterate until it's good. Loop architecture gives AI agents this same capability.

The reason this matters so much for LLM-based agents specifically is that language models are fundamentally limited by their context window and their single-pass generation. They can't "go back" and fix a mistake mid-generation. But if you wrap them in a loop, they can generate, evaluate, and regenerate — effectively giving them the ability to self-correct that they lack natively.

Every serious agent framework uses some version of this. ReAct (Reason + Act) alternates between thinking and tool-calling. Reflexion adds explicit self-critique steps. Plan-and-execute separates planning from doing. Tree of Thoughts explores multiple reasoning paths. They're all loops. The differences are just in what happens inside each phase and how many nested loops you're running.

OODA: The Strategic Decision Loop Your Agent Needs

Now let's talk about the specific loop pattern that gives your agent a strategic brain: the OODA loop.

OODA stands for Observe, Orient, Decide, Act. It was developed by Colonel John Boyd, a U.S. Air Force fighter pilot, in the 1970s. Boyd's original insight was about aerial combat: the pilot who cycles through OODA faster than their opponent wins the dogfight. Not because they're a better pilot, but because they're making decisions faster and forcing the other pilot to react to a reality that's already changed.

This translates to AI agents almost perfectly. Here's each phase:

Observe: Gather raw data. What's the current state of the task? What did the last action return? What does the user want? What's in memory? This is pure information intake — no interpretation yet.

Orient: This is the most important and most underrated phase. Orient means making sense of what you observed. It's where the LLM synthesizes observations with prior knowledge, context, and mental models to form an understanding of the situation. Boyd called this "destruction and creation" — you break apart existing schemas and build new ones that fit the current data.

In practice, this is where your agent prompt says something like: "Given the current state, your previous actions, and what you know about the task, what is actually going on here? What matters? What's changed?"

Decide: Based on your orientation, evaluate options and pick one. This might be a chain-of-thought deliberation, a scoring of possible next steps, or a straightforward selection from a menu of available actions.

Act: Execute the decision. Call a tool, generate output, send a request. This changes the environment, which produces new information, which feeds back into Observe.

The key insight from Boyd that most people miss: OODA is not a sequential pipeline. It's a recursive, overlapping process. Orient feeds back into Observe (your mental model shapes what you notice). Decide can loop back to Orient (if none of your options feel right, you need to re-orient). The whole thing is fluid and continuous.

For AI agents, this means your OODA loop isn't just "do these four steps in order." It means building an agent that can, at any point, say "wait, I need to re-evaluate my understanding of this situation before I proceed." That's what makes it powerful. That's what separates a strategic agent from a simple tool-caller.
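To make the non-sequential part concrete, here is a small state-machine sketch in which DECIDE can send control back to ORIENT when no option clears a confidence threshold. The option scores are hard-coded stand-ins for what a re-oriented LLM would propose, and the 0.6 threshold is an arbitrary assumption. A real agent would also route ACT back into OBSERVE.

```python
from enum import Enum, auto


class Phase(Enum):
    OBSERVE = auto()
    ORIENT = auto()
    DECIDE = auto()
    ACT = auto()
    DONE = auto()


def run_ooda(orientations, threshold=0.6, max_steps=20):
    """Step through OODA phases, letting DECIDE bounce back to ORIENT.

    orientations: one dict of {option: score} per orientation attempt,
    standing in for what the LLM proposes after each (re-)orientation.
    """
    phase, attempt, chosen, trace = Phase.OBSERVE, -1, None, []
    for _ in range(max_steps):
        trace.append(phase.name)
        if phase is Phase.OBSERVE:
            phase = Phase.ORIENT
        elif phase is Phase.ORIENT:
            attempt += 1                      # build a fresh mental model
            phase = Phase.DECIDE if attempt < len(orientations) else Phase.DONE
        elif phase is Phase.DECIDE:
            options = orientations[attempt]
            best = max(options, key=options.get)
            if options[best] >= threshold:
                chosen, phase = best, Phase.ACT
            else:
                phase = Phase.ORIENT          # nothing feels right: re-orient
        elif phase is Phase.ACT:
            phase = Phase.DONE                # one decision is enough for this sketch
        else:
            break                             # Phase.DONE
    return chosen, trace


chosen, trace = run_ooda([
    {"search_web": 0.4, "ask_user": 0.3},     # first orientation: weak options
    {"search_docs": 0.8, "guess": 0.2},       # after re-orienting
])
print(chosen)  # search_docs
print(trace)   # ['OBSERVE', 'ORIENT', 'DECIDE', 'ORIENT', 'DECIDE', 'ACT', 'DONE']
```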

Here's a simplified OODA prompt pattern you might use:

```
You are an AI agent operating in an OODA loop.

OBSERVE: Review the current task state, recent tool outputs, and any
new information available.

ORIENT: Synthesize your observations. What is the actual situation?
What assumptions should you update? What patterns do you notice?
Consider your prior knowledge and any contradictions with new data.

DECIDE: Based on your orientation, what is the best next action?
Evaluate at least 2 options before selecting one. Explain your reasoning.

ACT: Execute your chosen action using the available tools.
Specify the exact tool call and parameters.

After acting, loop back to OBSERVE with the new information.
Continue until the task is complete. Output DONE when finished.
```
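On the harness side, you can check each reply against that format before acting on it. This is a minimal regex-based sketch, assuming the model starts each phase with its name and a colon at the beginning of a line and writes DONE on a line by itself; `parse_ooda_reply` is a hypothetical helper, not part of any library.

```python
import re

PHASES = ("OBSERVE", "ORIENT", "DECIDE", "ACT")
# A phase header is a phase name plus a colon at the start of a line.
HEADER = re.compile(r"^(OBSERVE|ORIENT|DECIDE|ACT):[ \t]*", re.MULTILINE)
DONE_LINE = re.compile(r"^[ \t]*DONE[ \t]*$", re.MULTILINE)


def parse_ooda_reply(reply: str) -> dict:
    """Split a model reply into OODA sections and report missing phases."""
    done = DONE_LINE.search(reply) is not None
    body = DONE_LINE.sub("", reply)          # keep DONE out of the ACT text
    matches = list(HEADER.finditer(body))
    sections = {}
    for i, match in enumerate(matches):
        end = matches[i + 1].start() if i + 1 < len(matches) else len(body)
        sections[match.group(1)] = body[match.end():end].strip()
    missing = [p for p in PHASES if not sections.get(p)]
    return {"sections": sections, "missing": missing, "done": done}


sample = """OBSERVE: Search returned 3 pricing pages.
ORIENT: Two sources agree on $12/user; one looks outdated.
DECIDE: Options: (a) search again, (b) accept $12/user. Choosing (b).
ACT: write_summary(price="$12/user")
DONE"""

result = parse_ooda_reply(sample)
print(result["missing"], result["done"])  # [] True
```

A reply with a non-empty `missing` list (for example, no ORIENT section) can be rejected and re-prompted instead of executed.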

This works. But for complex tasks, OODA alone is too high-level. It gives you strategic direction but doesn't handle the messy reality of multi-step tool execution. That's where Ralph execution comes in.

Ralph Execution: The Tactical Inner Loop

Let me be upfront: "Ralph execution" isn't a term you'll find in a NeurIPS paper or a LangChain tutorial. It's not an industry-standard acronym. The closest standard analog is ReAct-style execution — the Reason + Act pattern from the 2022 Yao et al. paper that became the backbone of practically every tool-using agent on the planet.

But whether you call it Ralph, ReAct, or "that tight inner loop where the agent actually does stuff," the concept is the same: a fast, tactical feedback cycle where the agent reasons about what to do, does it, observes the result, and reasons again. It's tighter and faster than OODA. Less strategic pondering, more "call the API, check the response, handle the error, try again."

The cycle looks like this:

- Thought — The agent reasons about its current sub-task. "I need to find the quarterly revenue for Q3. I'll search the financial database."

- Action — The agent calls a tool: `search_database(query="Q3 revenue 2024")`

- Observation — The tool returns a result: `{"revenue": "$4.2M", "source": "quarterly_report.pdf"}`

Then loop: new Thought based on the Observation, new Action, new Observation, until the sub-task is done.

This is your workhorse loop. It's what actually gets things done at the tool level. While OODA is up in the command center looking at the big picture, Ralph execution is on the ground making API calls and parsing JSON.
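Here is that Thought, Action, Observation cycle as a framework-free sketch. The `search_database` tool and the `scripted_policy` that plays the role of the LLM are hypothetical; in a real agent the policy would be a model call that returns either a tool call or a final answer.

```python
from typing import Callable


def search_database(query: str) -> dict:
    # Hypothetical tool: a canned lookup standing in for a real database.
    fake_db = {"Q3 revenue 2024": {"revenue": "$4.2M", "source": "quarterly_report.pdf"}}
    return fake_db.get(query, {"error": f"no results for {query!r}"})


TOOLS: dict = {"search_database": search_database}  # tool name -> callable


def scripted_policy(transcript: list) -> dict:
    """Stand-in for the LLM: returns a Thought plus an Action or a final answer."""
    if not transcript:
        return {"thought": "I need Q3 revenue, so I'll search the financial database.",
                "action": ("search_database", {"query": "Q3 revenue 2024"})}
    observation = transcript[-1]["observation"]
    if "revenue" in observation:
        return {"thought": "The observation has the figure.", "answer": observation["revenue"]}
    return {"thought": "The search failed; give up.", "answer": None}


def react_loop(policy: Callable, tools: dict, max_steps: int = 8):
    transcript = []
    for _ in range(max_steps):
        step = policy(transcript)                     # Thought
        if "answer" in step:
            return step["answer"], transcript
        name, args = step["action"]                   # Action
        try:
            observation = tools[name](**args)         # unknown tools raise KeyError
        except Exception as exc:                      # errors become observations
            observation = {"error": f"{type(exc).__name__}: {exc}"}
        transcript.append({"thought": step["thought"], "action": name,
                           "observation": observation})  # Observation
    return None, transcript                           # hit max_steps


answer, steps = react_loop(scripted_policy, TOOLS)
print(answer)  # $4.2M
```

Catching tool exceptions and appending them as observations lets the next Thought react to the error instead of crashing the loop.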

The key differences between OODA and Ralph execution:

| | OODA | Ralph Execution |
|---|---|---|
| Scope | Strategic / macro | Tactical / micro |
| Speed | Deliberate | Fast |
| Focus | Understanding & planning | Doing & reacting |
| Typical duration | Entire task lifecycle | Single sub-task |
| Key phase | Orient (sense-making) | Action (tool-calling) |

Neither one alone is sufficient for complex tasks. OODA without execution is all planning, no action. Ralph execution without OODA is all action, no strategy. You need both.

The Hybrid: Nesting OODA and Ralph Together

Here's where it gets powerful. The real architecture is a nested loop where OODA operates as the outer strategic loop and Ralph execution runs as the inner tactical loop within OODA's Act phase.

```
START TASK
    │
    ▼
┌──────────────────── OODA OUTER LOOP ────────────────────┐
│                                                         │
│  OBSERVE: Gather full task state + memory               │
│     ↓                                                   │
│  ORIENT: LLM reasons about situation holistically       │
│     ↓                                                   │
│  DECIDE: Select high-level strategy/sub-goal            │
│     ↓                                                   │
│  ACT: ┌──── Enter Ralph Inner Loop ─────┐               │
│       │                                 │               │
│       │  Thought → Action → Observe     │               │
│       │  ↑__________________|           │               │
│       │  (repeat until sub-goal met)    │               │
│       │                                 │               │
│       └───── Exit with result ──────────┘               │
│     ↓                                                   │
│  UPDATE MEMORY with results                             │
│     ↓                                                   │
│  Goal met? ──→ Yes → DONE                               │
│     │                                                   │
│     No → Loop back to OBSERVE                           │
│                                                         │
└─────────────────────────────────────────────────────────┘
```
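The nesting in the diagram can be sketched as two plain loops: an outer OODA loop that picks the next open sub-goal and writes results to memory, and an inner Ralph loop that retries a tool until the sub-goal is met. This is illustrative only. `fake_tool` is a hypothetical tool, and ORIENT/DECIDE are reduced to "take the next open sub-goal", where a real agent would ask the LLM.

```python
from dataclasses import dataclass, field


@dataclass
class Memory:
    findings: dict = field(default_factory=dict)   # sub-goal -> result
    attempts: dict = field(default_factory=dict)   # sub-goal -> inner steps used


def ralph_inner_loop(sub_goal, tool, max_inner=8):
    """Tactical loop: Thought -> Action -> Observe until the sub-goal is met."""
    for step in range(1, max_inner + 1):
        observation = tool(sub_goal, step)
        if observation is not None:        # sub-goal met: exit with result
            return observation, step
    return None, max_inner                 # gave up on this sub-goal


def ooda_outer_loop(sub_goals, tool, max_outer=15):
    """Strategic loop: pick a sub-goal, delegate it, update memory, repeat."""
    memory = Memory()
    for cycle in range(1, max_outer + 1):
        # OBSERVE: which sub-goals have no recorded result yet?
        open_goals = [g for g in sub_goals if g not in memory.findings]
        if not open_goals:                 # goal met -> DONE
            return memory, cycle - 1
        # ORIENT + DECIDE: simplified to "take the next open sub-goal".
        current = open_goals[0]
        # ACT: enter the Ralph inner loop.
        result, steps = ralph_inner_loop(current, tool)
        # UPDATE MEMORY (failures are recorded so they are not retried forever).
        memory.findings[current] = result if result is not None else "FAILED"
        memory.attempts[current] = steps
    return memory, max_outer


def fake_tool(sub_goal, step):
    # Hypothetical tool: "pricing" needs two tries, the rest succeed at once.
    if sub_goal == "pricing" and step < 2:
        return None
    return f"data for {sub_goal}"


memory, cycles = ooda_outer_loop(["competitors", "pricing", "features"], fake_tool)
print(cycles)           # 3
print(memory.attempts)  # {'competitors': 1, 'pricing': 2, 'features': 1}
```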

Concrete example: Say your agent is researching a competitor landscape.

- OODA Observe: "User wants a competitor analysis for their SaaS product. I have no data yet."

- OODA Orient: "I need to identify competitors, gather their pricing, features, and market position. Let me start with identification."

- OODA Decide: "First sub-goal: find the top 5 competitors."

- OODA Act → Ralph loop:

  - Thought: "I'll search for competitors in the project management SaaS space."

  - Action: `web_search("top project management SaaS competitors 2025")`

  - Observation: Returns a list of 8 companies.

  - Thought: "Good, but I should verify these. Let me cross-reference."

  - Action: `web_search("G2 project management software rankings")`

  - Observation: Returns top 10 with ratings.

  - Thought: "I now have a validated list of 5 top competitors. Sub-goal complete."

- OODA Observe (next cycle): "I have 5 competitors identified. Now I need pricing data."

- OODA Orient: "Pricing pages are often behind paywalls. I'll need to search strategically and maybe check review sites."

- And so on.

See how it works? OODA keeps the agent strategically oriented. Ralph keeps it tactically effective. The outer loop prevents the agent from losing sight of the big picture. The inner loop prevents it from getting stuck in analysis paralysis.

Building This in OpenClaw

Here's where theory becomes practice. OpenClaw is purpose-built for this kind of architecture, and it makes implementing nested loops dramatically simpler than wiring it all up from scratch.

In OpenClaw, you can build the hybrid OODA + Ralph architecture using its agent framework. Here's the general implementation approach:

Step 1: Define Your Outer OODA Loop

Set up your agent's main reasoning cycle. In OpenClaw, this means configuring your agent with a system prompt that enforces the OODA structure and connecting it to your tool set:

```python
# OpenClaw OODA Agent Setup
import openclaw

# search_tool and analysis_tool come from Step 2 and must be defined before
# this call; database_tool and write_tool are assumed to be defined the same way.
agent = openclaw.Agent(
    name="strategic_researcher",
    system_prompt="""
You operate in an OODA decision loop.

Each cycle:
1. OBSERVE: State what you currently know and what's changed.
2. ORIENT: Analyze the situation. Update your mental model.
   What assumptions need revising? What patterns emerge?
3. DECIDE: Choose your next high-level action. Justify it.
4. ACT: Execute using available tools.

After each action, loop back to OBSERVE.
Output TASK_COMPLETE when the goal is fully achieved.
Never exceed 15 outer loops.
""",
    tools=[search_tool, database_tool, analysis_tool, write_tool],
    memory=openclaw.Memory(type="persistent"),
    max_iterations=15,
)
```

Step 2: Configure Ralph Execution for Tool Interactions

Within each OODA Act phase, OpenClaw's tool-calling system naturally operates as a ReAct-style inner loop. Each tool call generates an observation that feeds back into the agent's next reasoning step:

```python
# Tool definitions with built-in observation handling.
# perform_search() and run_analysis() are your own helper functions.
search_tool = openclaw.Tool(
    name="web_search",
    description="Search the web for current information",
    parameters={"query": "string"},
    execution=lambda params: perform_search(params["query"]),
    # OpenClaw automatically feeds results back as observations
    observation_format="structured",
)

analysis_tool = openclaw.Tool(
    name="analyze_data",
    description="Analyze collected data for patterns and insights",
    parameters={"data": "object", "analysis_type": "string"},
    execution=lambda params: run_analysis(params),
    observation_format="detailed",
)
```
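To see what "feeding results back as observations" involves without relying on any framework, here is a plain-Python sketch. `ToolSpec`, `Observation`, `TYPE_MAP`, and `to_message` are hypothetical names, not OpenClaw classes. The idea is that schema violations and exceptions come back as structured observations the model can read, rather than crashing the loop.

```python
import json
from dataclasses import dataclass, asdict
from typing import Any, Callable

# Maps the schema strings used above to Python types (an assumption).
TYPE_MAP = {"string": str, "object": dict, "number": (int, float)}


@dataclass
class Observation:
    tool: str
    ok: bool
    content: Any


@dataclass
class ToolSpec:
    name: str
    description: str
    parameters: dict                  # e.g. {"query": "string"}
    execution: Callable[[dict], Any]

    def __call__(self, params: dict) -> Observation:
        # Validate arguments against the declared schema before running.
        for key, type_name in self.parameters.items():
            if key not in params:
                return Observation(self.name, False, f"missing parameter {key!r}")
            if not isinstance(params[key], TYPE_MAP[type_name]):
                return Observation(self.name, False, f"{key!r} should be {type_name}")
        try:
            return Observation(self.name, True, self.execution(params))
        except Exception as exc:      # errors become observations, not crashes
            return Observation(self.name, False, f"{type(exc).__name__}: {exc}")


def to_message(obs: Observation) -> str:
    # Serialize the observation so it can be appended to the model's context.
    return json.dumps(asdict(obs))


search = ToolSpec("web_search", "Search the web", {"query": "string"},
                  lambda p: [f"result for {p['query']}"])
print(to_message(search({"query": "OODA loop"})))
print(to_message(search({})))
```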

Step 3: Add Memory and Reflection

This is where OpenClaw really shines. You can configure persistent memory that carries context across OODA cycles, so your agent doesn't forget what it learned three loops ago:

```python
# Memory configuration for cross-loop persistence
memory_config = openclaw.MemoryConfig(
    short_term_window=5,      # Last 5 interactions always in context
    long_term_storage=True,   # Key findings persisted across sessions
    reflection_enabled=True,  # Agent periodically summarizes learnings
    reflection_interval=3,    # Reflect every 3 OODA cycles
)

agent.configure_memory(memory_config)
```
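Under the hood, a short-term window plus periodic reflection can be as simple as a bounded deque and a counter. This sketch is framework-agnostic and is not OpenClaw's implementation. `LoopMemory` is a hypothetical class, and its default `summarize` just joins strings where a real agent would call an LLM to compress them.

```python
from collections import deque


class LoopMemory:
    """Short-term window + long-term notes, with reflection every N cycles."""

    def __init__(self, short_term_window=5, reflection_interval=3, summarize=None):
        self.short_term = deque(maxlen=short_term_window)  # recent interactions
        self.long_term = []                                # persisted summaries
        self.reflection_interval = reflection_interval
        self.cycles = 0
        # Stand-in for an LLM summarization call (an assumption).
        self.summarize = summarize or (lambda items: " | ".join(items))

    def record(self, interaction: str) -> None:
        self.short_term.append(interaction)  # oldest entry drops off when full

    def end_cycle(self) -> None:
        # Call once per outer OODA cycle; reflect on schedule.
        self.cycles += 1
        if self.cycles % self.reflection_interval == 0:
            self.long_term.append(self.summarize(list(self.short_term)))

    def context(self) -> str:
        # What goes into the prompt: summaries first, then recent detail.
        notes = "\n".join(f"[summary] {s}" for s in self.long_term)
        recent = "\n".join(f"[recent] {s}" for s in self.short_term)
        return "\n".join(part for part in (notes, recent) if part)


memory = LoopMemory(short_term_window=3, reflection_interval=3)
for i in range(1, 7):
    memory.record(f"cycle {i} finding")
    memory.end_cycle()
print(len(memory.long_term))  # 2
print(memory.context())
```

Keeping `short_term_window` at least as large as `reflection_interval` (assuming one recorded interaction per cycle) ensures every interaction is covered by some summary before it falls out of the window.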

Step 4: Set Termination Conditions

You need guardrails. Loop architecture without termination conditions is a recipe for infinite cycles and a terrifying API bill:

```python
# Termination configuration
agent.set_termination(
    success_token="TASK_COMPLETE",
    max_outer_loops=15,
    max_inner_loops_per_action=8,
    timeout_seconds=300,
    budget_limit_usd=2.00,
)
```
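The same limits can be enforced by hand in any loop. This sketch mirrors the parameter names above but is a hypothetical `TerminationGuard` class, not OpenClaw code. It checks the success token first so that a task finishing on its last allowed cycle still counts as a success.

```python
import time
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class TerminationGuard:
    success_token: str = "TASK_COMPLETE"
    max_outer_loops: int = 15
    max_inner_loops_per_action: int = 8
    timeout_seconds: float = 300
    budget_limit_usd: float = 2.00
    started: float = field(default_factory=time.monotonic)
    outer_loops: int = 0
    spent_usd: float = 0.0

    def check_outer(self, model_output: str, cost_usd: float) -> Optional[str]:
        """Call once per outer cycle; returns a stop reason, or None to continue."""
        self.outer_loops += 1
        self.spent_usd += cost_usd
        if self.success_token in model_output:
            return "success"
        if self.outer_loops >= self.max_outer_loops:
            return "max_outer_loops"
        if time.monotonic() - self.started >= self.timeout_seconds:
            return "timeout"
        if self.spent_usd >= self.budget_limit_usd:
            return "budget"
        return None

    def inner_exhausted(self, inner_steps: int) -> bool:
        # Call inside the Ralph loop to cap tool calls per OODA action.
        return inner_steps >= self.max_inner_loops_per_action


guard = TerminationGuard(budget_limit_usd=0.05)
reasons = [guard.check_outer("still working", cost_usd=0.02) for _ in range(3)]
print(reasons)  # [None, None, 'budget']
```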

Step 5: Run It

```python
result = agent.run(
    task="Conduct a competitive analysis of the top 5 project management "
         "SaaS tools. Include pricing, key features, market positioning, "
         "and identify gaps our product could exploit.",
    output_format="markdown_report",
)

print(result.output)
print(f"Loops completed: {result.metrics.total_loops}")
print(f"Tools called: {result.metrics.tool_calls}")
print(f"Total cost: ${result.metrics.cost:.4f}")
```

Common Pitfalls and How to Avoid Them

Building loop agents isn't hard. Building good loop agents requires avoiding a few traps:

1. Infinite loops. Your agent gets stuck in a cycle where it keeps trying the same action and getting the same failed result. Fix: Always set max_iterations and implement a "stuck detection" check — if the last 3 observations are functionally identical, force a re-orient or terminate.

2. Context window overflow. Each loop iteration adds to the conversation history. After 10 loops with detailed tool outputs, you can easily blow past your context limit. Fix: Use OpenClaw's memory summarization. Compress older loop iterations into summaries while keeping recent ones detailed.

3. Strategic drift. The agent starts on task but gradually wanders off into tangentially related rabbit holes. Fix: Include the original task in every OODA Observe phase. Force the Orient phase to explicitly evaluate "am I still working toward the original goal?"

4. Over-looping. The agent keeps refining when the answer is already good enough. Fix: Include explicit "good enough" criteria in your system prompt. "If you have data from at least 3 reliable sources that agree, consider the sub-task complete."

5. Under-orienting. The agent skips Orient and jumps straight from Observe to Act. Fix: Make your prompt structure enforce Orient as a mandatory step with minimum reasoning length. No orientation, no action.
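Two of these fixes, stuck detection (pitfall 1) and explicit good-enough criteria (pitfall 4), are easy to express as small checks the harness runs between iterations. In this sketch, "functionally identical" is approximated by comparing observations after lowercasing and collapsing whitespace, which is an assumption; a real agent might compare parsed tool results or embeddings instead.

```python
def _normalize(text: str) -> str:
    # Approximate "functionally identical": ignore case and whitespace.
    return " ".join(text.lower().split())


def is_stuck(observations: list, window: int = 3) -> bool:
    """True if the last `window` observations are functionally identical."""
    if len(observations) < window:
        return False
    recent = {_normalize(o) for o in observations[-window:]}
    return len(recent) == 1


def good_enough(claims: dict, min_agreeing: int = 3) -> bool:
    """True if at least `min_agreeing` sources report the same value.

    claims maps source name -> reported value (for example, a price).
    """
    counts = {}
    for value in claims.values():
        key = _normalize(value)
        counts[key] = counts.get(key, 0) + 1
    return max(counts.values(), default=0) >= min_agreeing


print(is_stuck(["Error: 429", "error:  429", "ERROR: 429"]))   # True
print(good_enough({"site_a": "$12/user", "site_b": "$12/user",
                   "g2": "$12/USER", "blog": "$10/user"}))     # True
```

When `is_stuck` fires, the harness can force a fresh ORIENT step or terminate; when `good_enough` fires, it can mark the sub-task complete.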

What to Build First

If you haven't built a loop agent before, here's my recommended progression using OpenClaw:

Week 1: Simple ReAct loop. Build an agent with one tool (web search) that can answer research questions by searching, reading results, and searching again if needed. Get comfortable with the basic loop mechanics.

Week 2: Add OODA wrapper. Take your ReAct agent and add the strategic outer loop. Give it a complex task that requires multiple sub-goals (like the competitor analysis example). Watch how the Orient phase changes its behavior.

Week 3: Add memory and reflection. Configure persistent memory so your agent can handle tasks that span multiple sessions. Add reflection steps so it periodically evaluates its overall progress.

Week 4: Multi-tool orchestration. Add 3 to 5 tools and let the OODA loop decide which ones to use and in what order. This is where the architecture really proves its value — the agent dynamically selects its approach based on what it's learning.

Check the Claw Mart listings for pre-built tool integrations, agent templates, and memory modules that accelerate this timeline. There are starter kits specifically designed for loop architecture patterns that will save you the boilerplate setup.

The Bigger Picture

Loop architecture isn't a nice-to-have. It's the minimum viable architecture for any agent that needs to handle tasks more complex than "answer this one question." The combination of OODA for strategic decision-making and Ralph/ReAct execution for tactical tool use gives you an agent that can think at multiple levels simultaneously — planning its overall approach while executing individual steps with precision.

The agents that are actually useful in production — the ones handling customer research, code generation, data analysis, content creation at scale — are all running loops. The ones that feel like toys are running one-shot prompts with fancy wrappers.

Build the loop. Let OpenClaw handle the infrastructure. Focus on defining what your agent should actually accomplish, and let the architecture handle the how.

That's the infinite feedback twist. Not infinite in duration — infinite in potential. Each loop makes the agent smarter about the current task, and with persistent memory, smarter about future tasks too. It's compounding intelligence, one cycle at a time.

Now go build something that actually works.

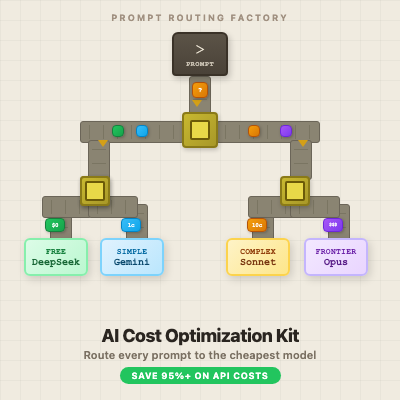
