Automate Incident Response: Build an AI Agent That Triages Alerts

Your SOC team is drowning. You already know this. Thousands of alerts per day, false positive rates hovering between 40 and 80 percent, and your Tier 1 analysts spending half their shift copy-pasting IOCs into VirusTotal before deciding an alert is garbage. Meanwhile, the one alert that actually matters sits in the queue for hours because everything looks the same shade of orange in the dashboard.

IBM's 2023 Cost of a Data Breach report puts it bluntly: an average of 204 days to identify a breach and 73 days to contain it. Not because security teams are incompetent, but because they're buried alive in noise.

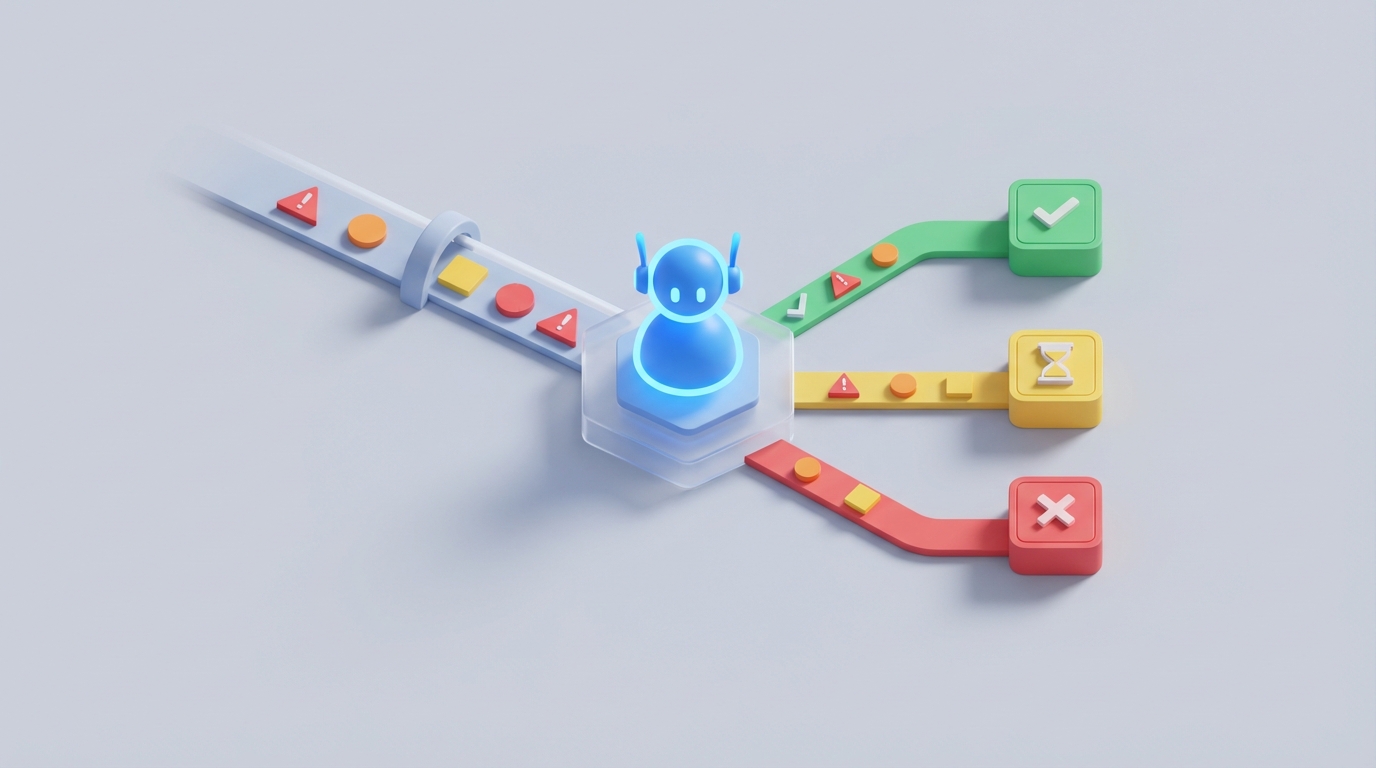

Here's the thing: most of that triage work isn't intellectually hard. It's repetitive, pattern-based, and follows predictable logic trees. Which means it's exactly the kind of work an AI agent can do right now—not in some hypothetical future, but today, with tools that exist and work.

This is a practical guide to building an AI-powered incident triage agent on OpenClaw that handles the bulk of your alert volume, enriches the ones that matter, and routes critical incidents to humans with full context already assembled. No hand-waving. No "just sprinkle some AI on it." Actual architecture, actual steps.

The Manual Workflow Today (And Why It's Bleeding You Dry)

Let's trace what actually happens when an alert fires in a typical SOC without meaningful automation.

Step 1: Alert lands in the queue. Could be from your SIEM (Splunk, Sentinel, Elastic), your EDR (CrowdStrike, SentinelOne), email security, cloud workload protection—whatever. It shows up as a row in a dashboard or a ticket in ServiceNow.

Step 2: Analyst picks it up. This alone can take minutes to hours depending on queue depth. During peak hours or overnight with skeleton crews, alerts sit.

Step 3: Initial triage. The analyst reads the alert details, tries to determine: Is this real? Is this a known false positive? What's the severity? This involves:

- Checking the source IP/domain against threat intel feeds

- Looking up the affected user in Active Directory or your identity provider

- Checking if the endpoint has known vulnerabilities or prior incidents

- Reviewing related logs (authentication events, network flows, process trees)

- Cross-referencing with recent threat advisories

For a single phishing alert, this takes 30 minutes on the low end. For a credential compromise or lateral movement alert, you're looking at 2 to 4+ hours of manual investigation.

Step 4: Decision and escalation. Analyst decides: close as false positive, escalate to Tier 2, or trigger the incident response playbook. If it escalates, the next analyst often re-investigates because the handoff notes are incomplete.

Step 5: Containment actions. Isolate the endpoint, disable the user account, block the IP/domain, quarantine the email. Each action requires logging into a different console, clicking through a different UI, and documenting what was done.

Step 6: Documentation. Write up what happened, what was done, what the impact was. This is often the part that gets skipped or half-assed because the analyst is already triaging the next alert.

Now multiply this by 4,000 to 11,000 alerts per day, which is what enterprise SOCs typically see according to industry surveys. Your analysts are doing the same enrichment dance thousands of times, and the vast majority of those dances end with "false positive, close ticket."

What Makes This So Painful

The costs here aren't abstract. They're specific and compounding.

Analyst burnout and turnover. SOC analyst turnover rates are notoriously high. When 60 percent of your day is mechanical enrichment on alerts that turn out to be nothing, talented people leave. Replacing a trained Tier 2 analyst costs six figures when you factor in recruiting, onboarding, and the ramp-up period where they're not yet effective.

Mean Time to Respond (MTTR) creep. When humans are the bottleneck at every stage, response times stretch. That credential compromise alert that fired at 2 AM? Nobody looked at it until the morning shift. By then, the attacker has been inside for six hours, moved laterally, and established persistence. The difference between a contained incident and a breach often comes down to minutes, not hours.

Inconsistency. Analyst A and Analyst B follow different mental models. One checks threat intel first, the other checks user context first. One closes borderline alerts as false positives, the other escalates them. There's no standardized decision logic, which means your triage quality is only as good as whoever happens to pick up the alert.

Context loss during handoffs. When an alert escalates from Tier 1 to Tier 2, critical context gets lost. The Tier 2 analyst re-investigates from scratch because the notes say "suspicious login from unusual IP" without specifying which threat intel feeds were checked, what the user's normal behavior looks like, or whether the endpoint had recent alerts.

Cost per incident. Ponemon data consistently shows that organizations with faster, more automated response save $1 million or more per breach compared to those relying on manual processes. Even for non-breach incidents, the analyst labor cost of manual triage adds up to hundreds of thousands annually in mid-size SOCs.

What AI Can Actually Handle Right Now

Let's be honest about what works and what doesn't. The hype cycle around "autonomous SOC" has created unrealistic expectations. But the pragmatic reality is still impressive.

AI is genuinely good at (today, in 2026):

- Alert deduplication and correlation. Grouping related alerts into incidents instead of making analysts connect dots manually.

- Automated enrichment. Pulling threat intel, user context, endpoint status, authentication logs, and network data in seconds instead of minutes.

- False positive identification. Pattern matching against historical data to flag alerts that match known benign patterns with high confidence.

- Standard playbook execution. For well-defined incident types (phishing email reported, known malware detected, impossible travel alert), AI can execute the full response playbook: quarantine, isolate, block, notify.

- Natural language summarization. Generating human-readable investigation summaries from raw log data and enrichment results.

- Severity scoring with context. Going beyond static severity labels to score alerts based on the specific user, asset, business context, and current threat landscape.
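To make the first item on that list concrete, here is a minimal sketch of alert correlation in plain Python. It is not OpenClaw's API. The `correlate` function, the 15-minute window, and the choice to group only by host are all assumptions for illustration; a real deployment would likely also correlate on user, indicator, and alert type.

```python
from datetime import datetime, timedelta

def correlate(alerts, window=timedelta(minutes=15)):
    """Group alerts on the same host into incidents.

    An alert joins the host's latest incident if it fires within `window`
    of that incident's most recent alert; otherwise it opens a new one.
    """
    incidents = []        # each incident is a list of alerts, oldest first
    latest_for_host = {}  # host -> index of its most recent incident
    for alert in sorted(alerts, key=lambda a: a["timestamp"]):
        host = alert["affected_host"]
        idx = latest_for_host.get(host)
        if idx is not None and alert["timestamp"] - incidents[idx][-1]["timestamp"] <= window:
            incidents[idx].append(alert)
        else:
            latest_for_host[host] = len(incidents)
            incidents.append([alert])
    return incidents


t0 = datetime(2026, 1, 15, 2, 0)
alerts = [
    {"alert_id": "a1", "affected_host": "web-01", "timestamp": t0},
    {"alert_id": "a2", "affected_host": "db-01", "timestamp": t0 + timedelta(minutes=1)},
    {"alert_id": "a3", "affected_host": "web-01", "timestamp": t0 + timedelta(minutes=5)},
    {"alert_id": "a4", "affected_host": "web-01", "timestamp": t0 + timedelta(hours=1)},
]
incidents = correlate(alerts)
print([[a["alert_id"] for a in inc] for inc in incidents])
# [['a1', 'a3'], ['a2'], ['a4']]
```

Four alerts collapse into three incidents, and the analyst sees the two related web-01 alerts as one unit of work instead of two.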

Organizations already doing this well report dramatic results. Financial institutions using automated endpoint isolation have gone from 2.5-hour response times to 8 minutes. Security teams using AI-assisted investigation report 30 to 50 percent reductions in time-per-incident. Mature SOAR deployments automate 60 to 80 percent of repetitive task volume.

OpenClaw is built for exactly this kind of agentic workflow—where you need an AI system that doesn't just answer questions but actually does things: calls APIs, makes decisions based on structured logic, and takes actions across multiple tools in sequence.

Step by Step: Building an Incident Triage Agent on OpenClaw

Here's how to actually build this. We'll focus on the most common and highest-volume use case: triaging security alerts from a SIEM, enriching them, making a disposition decision, and either auto-closing, auto-remediating, or escalating to a human with full context.

Step 1: Define Your Alert Intake

Your agent needs a trigger. The most common pattern is to have your SIEM (Splunk, Sentinel, Elastic) send alerts to a webhook that your OpenClaw agent listens on, or to poll an alert queue via API.

In OpenClaw, you set up your agent's intake like this:

    agent: incident_triage_v1
    trigger:
      type: webhook
      endpoint: /ingest/siem-alert
      auth: bearer_token
    input_schema:
      alert_id: string
      source: string
      severity: string
      description: string
      affected_user: string
      affected_host: string
      indicators: list[string]
      raw_log: string
      timestamp: datetime

This gives your agent a structured input every time an alert fires. The schema ensures you're capturing the fields you need for downstream enrichment regardless of which SIEM is sending them.
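If you front the webhook with your own service, the same schema can be enforced before anything reaches the agent. The sketch below is plain Python rather than OpenClaw code. The `Alert` dataclass mirrors the fields above; `parse_alert` and its normalization choices (lowercasing severity, expecting an ISO 8601 timestamp string) are assumptions.

```python
from dataclasses import dataclass, fields
from datetime import datetime

@dataclass(frozen=True)
class Alert:
    alert_id: str
    source: str
    severity: str
    description: str
    affected_user: str
    affected_host: str
    indicators: list[str]
    raw_log: str
    timestamp: datetime

def parse_alert(payload: dict) -> Alert:
    """Validate a webhook payload and normalize it into an Alert."""
    missing = [f.name for f in fields(Alert) if f.name not in payload]
    if missing:
        raise ValueError(f"alert payload missing fields: {missing}")
    data = {f.name: payload[f.name] for f in fields(Alert)}
    data["severity"] = str(data["severity"]).lower()               # "High" -> "high"
    data["indicators"] = [str(i) for i in data["indicators"]]
    data["timestamp"] = datetime.fromisoformat(data["timestamp"])  # ISO 8601 string
    return Alert(**data)
```

Rejecting malformed payloads at the door keeps every downstream step free of defensive checks, and normalizing severity once means rules like `severity in ["high", "critical"]` behave the same for every SIEM.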

Step 2: Build the Enrichment Layer

This is where the agent earns its keep. Instead of an analyst manually querying five different tools, the agent does it in parallel.

    enrichment_steps:
      - name: threat_intel_lookup
        tool: openclaw.integrations.threatintel
        sources: [virustotal, abuseipdb, otx, misp]
        input: indicators
        timeout: 10s

      - name: user_context
        tool: openclaw.integrations.identity
        provider: azure_ad  # or okta, jumpcloud
        query: affected_user
        fields: [department, role, last_login, risk_score, mfa_status, recent_sign_ins]

      - name: endpoint_status
        tool: openclaw.integrations.edr
        provider: crowdstrike  # or sentinelone, defender
        query: affected_host
        fields: [os, patch_level, isolation_status, recent_detections, running_processes]

      - name: historical_alerts
        tool: openclaw.integrations.siem
        query: "affected_user OR affected_host"
        lookback: 30d
        fields: [alert_count, alert_types, dispositions]

      - name: network_context
        tool: openclaw.integrations.network
        query: indicators
        fields: [dns_resolutions, connection_frequency, geo_location, first_seen]

OpenClaw runs these enrichment calls concurrently. What takes an analyst 15 to 30 minutes of tab-switching takes the agent under 10 seconds. The results come back as structured data that feeds into the next step.
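For intuition about what "concurrently" buys you, here is a rough sketch of the fan-out pattern using Python's standard `asyncio`. It says nothing about how OpenClaw implements it internally. The `enrich` function and the `fake_lookup` stand-in are hypothetical; real sources would be API clients for your threat intel, identity, and EDR tools.

```python
import asyncio

async def enrich(alert, sources, timeout=10.0):
    """Run every enrichment source concurrently, each with its own timeout.

    `sources` maps a step name to an async function that takes the alert.
    A source that times out or raises yields an error marker, so one slow
    or broken integration cannot stall the whole triage.
    """
    async def guarded(fn):
        try:
            return await asyncio.wait_for(fn(alert), timeout)
        except asyncio.TimeoutError:
            return {"error": "timeout"}
        except Exception as exc:
            return {"error": str(exc)}

    names = list(sources)
    results = await asyncio.gather(*(guarded(sources[n]) for n in names))
    return dict(zip(names, results))


async def fake_lookup(alert, delay, result):
    """Stand-in for a real API call; sleeps to simulate network latency."""
    await asyncio.sleep(delay)
    return result

sources = {
    "threat_intel_lookup": lambda a: fake_lookup(a, 0.02, {"any_malicious": False}),
    "user_context": lambda a: fake_lookup(a, 0.01, {"risk_score": 12}),
    "network_context": lambda a: fake_lookup(a, 5.0, {}),  # too slow, will time out
}
enriched = asyncio.run(enrich({"alert_id": "a1"}, sources, timeout=0.2))
print(enriched["network_context"])  # {'error': 'timeout'}
```

Total wall-clock time is bounded by the slowest source or the timeout, whichever comes first, not by the sum of all lookups. That is the whole difference between seconds and the analyst's 15 to 30 minutes.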

Step 3: Disposition Logic

This is the brain. You're encoding your senior analysts' decision-making into a structured reasoning framework. OpenClaw lets you combine rule-based logic with LLM-powered analysis.

    disposition:
      engine: openclaw.reasoning
      mode: hybrid  # combines rules + llm judgment

      rules:
        - name: known_false_positive
          condition: >
            threat_intel_lookup.all_clean == true
            AND historical_alerts.similar_closed_as_fp >= 3
            AND endpoint_status.recent_detections == 0
          action: auto_close
          confidence_threshold: 0.95

        - name: confirmed_malicious_standard
          condition: >
            threat_intel_lookup.any_malicious == true
            AND alert.severity in ["high", "critical"]
            AND endpoint_status.isolation_status == "not_isolated"
          action: auto_remediate
          playbook: standard_containment

        - name: requires_investigation
          condition: >
            threat_intel_lookup.any_suspicious == true
            OR user_context.risk_score > 70
            OR historical_alerts.alert_count > 5
          action: escalate_to_human
          priority: high

      llm_analysis:
        prompt: |
          You are a senior SOC analyst. Given the following enriched alert data,
          assess the likelihood this is a true positive, identify the most likely
          attack technique (MITRE ATT&CK), and recommend a disposition.

          Alert: {alert}
          Threat Intel: {threat_intel_lookup}
          User Context: {user_context}
          Endpoint Status: {endpoint_status}
          Historical Alerts: {historical_alerts}
          Network Context: {network_context}

          Respond with: disposition (auto_close, auto_remediate, escalate),
          confidence (0-1), reasoning (2-3 sentences), mitre_technique.

        fallback: escalate_to_human

The hybrid approach is key. Hard rules catch the obvious cases with negligible latency. The LLM handles ambiguous situations where pattern recognition and contextual reasoning matter. If the LLM's confidence falls below your threshold, or it fails to return a usable answer, the fallback setting escalates to a human rather than guessing.
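The same decision flow can be sketched in plain Python to show the ordering: rules first, model second, human as the default. This is an illustration, not OpenClaw's reasoning engine. The `decide` function, the `ask_llm` callable, and the 0.8 LLM confidence threshold are assumptions; the rule conditions are copied from the config above.

```python
def decide(alert, enriched, ask_llm, llm_threshold=0.8):
    """Return (disposition, reason). Rules run first; the LLM is a fallback."""
    ti = enriched["threat_intel_lookup"]
    user = enriched["user_context"]
    edr = enriched["endpoint_status"]
    hist = enriched["historical_alerts"]

    if ti["all_clean"] and hist["similar_closed_as_fp"] >= 3 and edr["recent_detections"] == 0:
        return "auto_close", "rule:known_false_positive"
    if (ti["any_malicious"] and alert["severity"] in ("high", "critical")
            and edr["isolation_status"] == "not_isolated"):
        return "auto_remediate", "rule:confirmed_malicious_standard"
    if ti["any_suspicious"] or user["risk_score"] > 70 or hist["alert_count"] > 5:
        return "escalate_to_human", "rule:requires_investigation"

    # No rule matched: ask the model, but only trust a confident, well-formed answer.
    allowed = {"auto_close": "auto_close", "auto_remediate": "auto_remediate",
               "escalate": "escalate_to_human"}
    try:
        verdict = ask_llm(alert, enriched)
        if verdict["disposition"] in allowed and verdict["confidence"] >= llm_threshold:
            return allowed[verdict["disposition"]], "llm"
    except Exception:
        pass  # a failed or malformed LLM response falls through to a human
    return "escalate_to_human", "fallback"
```

Note the asymmetry: every failure path ends at a human. An agent that escalates too often costs analyst time; one that auto-closes on a malformed model response costs you a breach.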

Step 4: Automated Response Actions

For alerts the agent dispositions with high confidence, it can execute containment actions directly.

    playbooks:
      standard_containment:
        steps:
          - action: isolate_endpoint
            tool: openclaw.integrations.edr
            target: affected_host
            condition: severity in ["high", "critical"]

          - action: disable_user_account
            tool: openclaw.integrations.identity
            target: affected_user
            condition: alert_type == "credential_compromise"
            reversible: true

          - action: block_indicators
            tool: openclaw.integrations.firewall
            targets: indicators
            condition: threat_intel_lookup.any_malicious == true

          - action: quarantine_email
            tool: openclaw.integrations.email_security
            target: alert.email_message_id
            condition: alert_type == "phishing"

          - action: create_ticket
            tool: openclaw.integrations.itsm
            provider: servicenow
            fields:
              summary: "{llm_analysis.reasoning}"
              severity: "{alert.severity}"
              actions_taken: "{executed_steps}"
              enrichment_data: "{all_enrichment}"

Every action is logged, reversible where possible, and documented automatically. This is critical for compliance and forensic integrity.
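What "every action is logged" looks like in practice is an audit record per step, including the steps that were skipped. The runner below is a hedged sketch in plain Python, not OpenClaw's playbook engine. `run_playbook`, the lambda conditions, and the handler names are hypothetical, and the handlers only append to a list where real ones would call your EDR, identity, and email security APIs.

```python
from datetime import datetime, timezone

def run_playbook(steps, context, handlers):
    """Run each step whose condition holds; return an audit trail of every step."""
    audit = []
    for step in steps:
        if not step["condition"](context):
            status = "skipped"
        else:
            try:
                handlers[step["action"]](context)
                status = "ok"
            except Exception as exc:
                status = f"failed: {exc}"  # record it and continue with remaining steps
        audit.append({
            "action": step["action"],
            "status": status,
            "at": datetime.now(timezone.utc).isoformat(),
        })
    return audit


executed = []  # stands in for real EDR / identity / email API calls
handlers = {
    "isolate_endpoint": lambda ctx: executed.append(("isolate", ctx["affected_host"])),
    "disable_user_account": lambda ctx: executed.append(("disable", ctx["affected_user"])),
    "quarantine_email": lambda ctx: executed.append(("quarantine", ctx["email_message_id"])),
}
steps = [
    {"action": "isolate_endpoint", "condition": lambda c: c["severity"] in ("high", "critical")},
    {"action": "disable_user_account", "condition": lambda c: c["alert_type"] == "credential_compromise"},
    {"action": "quarantine_email", "condition": lambda c: c["alert_type"] == "phishing"},
]
context = {"severity": "high", "alert_type": "phishing", "affected_host": "lt-042",
           "affected_user": "dana", "email_message_id": "msg-17"}
audit = run_playbook(steps, context, handlers)
print([(a["action"], a["status"]) for a in audit])
# [('isolate_endpoint', 'ok'), ('disable_user_account', 'skipped'), ('quarantine_email', 'ok')]
```

Continuing after a failed step is a design choice in this sketch: if the firewall API is down, you still want the endpoint isolated. The audit trail makes the partial failure visible to whoever reviews the ticket.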

Step 5: Human Escalation with Full Context

When the agent escalates, it doesn't just forward the raw alert. It packages everything it found into a structured brief.

    escalation:
      channel: slack  # or teams, pagerduty, email
      template: |
        🚨 **Escalated Alert: {alert.alert_id}**

        **Summary:** {llm_analysis.reasoning}
        **Severity:** {alert.severity} | **Confidence:** {llm_analysis.confidence}
        **MITRE Technique:** {llm_analysis.mitre_technique}

        **Affected User:** {user_context.name} ({user_context.department})
        - Risk Score: {user_context.risk_score}
        - MFA: {user_context.mfa_status}
        - Recent anomalous sign-ins: {user_context.recent_sign_ins}

        **Affected Host:** {endpoint_status.hostname}
        - OS: {endpoint_status.os} | Patch Level: {endpoint_status.patch_level}
        - Prior detections (30d): {historical_alerts.alert_count}

        **Threat Intel:**
        {threat_intel_lookup.summary}

        **Recommended Action:** {llm_analysis.recommended_action}

        [View Full Investigation →]({ticket_url})

Your Tier 2 analyst gets a complete picture in seconds instead of spending 30 minutes assembling it themselves. They can make a judgment call immediately.
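One detail worth getting right in any brief like this: a missing enrichment must not block the escalation. The sketch below shows one way to fill `{section.field}` placeholders tolerantly in plain Python. `render_brief` is a hypothetical helper, not how OpenClaw renders templates, and the "unknown" default is an assumption.

```python
import re

def render_brief(template, data, missing="unknown"):
    """Fill {section.field} placeholders from nested dicts.

    A placeholder whose path is absent renders as `missing` rather than
    raising, so a failed enrichment never blocks the escalation itself.
    """
    def lookup(match):
        value = data
        for part in match.group(1).split("."):
            if not isinstance(value, dict) or part not in value:
                return missing
            value = value[part]
        return str(value)
    return re.sub(r"\{([A-Za-z_][\w.]*)\}", lookup, template)


template = "User: {user_context.name} | MFA: {user_context.mfa_status} | Host: {endpoint_status.hostname}"
data = {"user_context": {"name": "Dana", "mfa_status": "enabled"}}  # EDR lookup failed
print(render_brief(template, data))
# User: Dana | MFA: enabled | Host: unknown
```

An explicit "unknown" also tells the Tier 2 analyst which lookups failed, which is itself useful context.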

Step 6: Feedback Loop

This is what separates a static automation from a system that gets smarter. When a human overrides the agent's recommendation, that decision feeds back into the model.

    feedback:
      on_human_override:
        - log_decision_delta
        - update_disposition_rules
        - retrain_confidence_model
      schedule: weekly_review
      metrics:
        - true_positive_rate
        - false_positive_rate
        - auto_close_accuracy
        - mean_time_to_disposition
        - escalation_rate

Over time, your agent learns which alert patterns your team consistently overrides, and it adjusts. The goal is continuous improvement in accuracy without requiring manual rule updates.
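Several of those metrics fall straight out of a log that pairs the agent's decision with the human's final call. Here is a small sketch under stated assumptions: the record shape and the metric definitions are mine, not OpenClaw's. In particular, auto_close_accuracy is taken to mean the share of auto-closed alerts a human reviewer agreed should be closed.

```python
def triage_metrics(records):
    """Summarize agent decisions against the human's final call.

    Each record: {"agent": disposition, "human": disposition, "seconds": float}.
    """
    total = len(records)
    if total == 0:
        return {}
    auto_closed = [r for r in records if r["agent"] == "auto_close"]
    confirmed = sum(r["human"] == "auto_close" for r in auto_closed)
    return {
        "auto_close_accuracy": confirmed / len(auto_closed) if auto_closed else None,
        "escalation_rate": sum(r["agent"] == "escalate_to_human" for r in records) / total,
        "override_rate": sum(r["agent"] != r["human"] for r in records) / total,
        "mean_time_to_disposition": sum(r["seconds"] for r in records) / total,
    }


records = [
    {"agent": "auto_close", "human": "auto_close", "seconds": 4},
    {"agent": "auto_close", "human": "escalate_to_human", "seconds": 6},
    {"agent": "escalate_to_human", "human": "escalate_to_human", "seconds": 20},
    {"agent": "auto_remediate", "human": "auto_remediate", "seconds": 10},
]
print(triage_metrics(records))
```

The overridden auto-close in the second record is exactly the kind of case your weekly review should look at first.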

What Still Needs a Human

Let's be clear about the boundaries. Automating triage doesn't mean automating judgment. Here's what your senior analysts should still own:

Business impact decisions. "Can we isolate this server that processes $2M in transactions per hour?" That requires understanding business context that no agent has today.

Novel attack techniques. Living-off-the-land attacks, zero-days, and sophisticated adversaries who specifically evade automated detection. When the agent says "I'm not confident," believe it—that's when experienced eyes matter most.

Legal and regulatory decisions. When to notify regulators, when to engage law enforcement, when to activate breach notification procedures. These are judgment calls with legal consequences.

Executive communication. Translating technical findings into business risk language for leadership. AI can draft the summary, but a human needs to own the message.

Root cause analysis for complex intrusions. Understanding why the attacker got in, how they moved, and what needs to change architecturally. This is deep, creative analytical work.

The right mental model: your OpenClaw agent is a tireless, extremely fast Tier 1 analyst who never sleeps, never gets bored, and follows procedures perfectly—but knows when to tap the senior analyst on the shoulder.

Expected Time and Cost Savings

Based on real-world data from organizations running similar automation (scaled to a typical mid-enterprise SOC):

| Metric | Before Automation | After OpenClaw Agent | Improvement |
|---|---|---|---|
| Alerts triaged per hour | 8-12 (per analyst) | 500-1,000+ (agent) | 50-100x |
| Mean time to triage | 25-45 min | Under 30 seconds | 98%+ reduction |
| False positive handling | Manual, 40-80% of volume | Auto-closed with audit trail | 70-90% automated |
| Tier 1 analyst hours on triage | 6-8 hrs/day | 1-2 hrs/day (reviewing escalations) | 75% reduction |
| MTTR for standard incidents | 2-6 hours | 8-15 minutes | 90%+ reduction |
| Annual analyst cost savings (5-person SOC) | n/a | $250K-$500K in recaptured capacity | n/a |
| Investigation context assembly | 15-30 min per alert | Instant (pre-assembled) | Near-total elimination |

These aren't aspirational numbers. They're consistent with what CrowdStrike, Microsoft, and Cortex XSOAR customers report, and they're achievable with a well-configured OpenClaw agent connected to your existing security stack.
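You can sanity-check the savings row yourself with the triage-hours row. The arithmetic below uses the table's own figures for team size and hours per day. The 260 working days per year and the $50 fully loaded hourly cost are my assumptions, so plug in your own numbers.

```python
ANALYSTS = 5                # from the table: 5-person SOC
WORKDAYS_PER_YEAR = 260     # assumption: 52 weeks x 5 days, no holidays removed
LOADED_HOURLY_COST = 50     # assumption: USD per analyst hour, fully loaded

def annual_savings(hours_before, hours_after):
    """Dollar value of triage hours recaptured per year across the team."""
    saved_per_analyst_day = hours_before - hours_after
    return ANALYSTS * saved_per_analyst_day * WORKDAYS_PER_YEAR * LOADED_HOURLY_COST

low = annual_savings(hours_before=6, hours_after=2)   # smallest gap in the table
high = annual_savings(hours_before=8, hours_after=1)  # largest gap in the table
print(f"${low:,} to ${high:,}")
# $260,000 to $455,000
```

Under those assumptions the result lands inside the $250K-$500K range in the table. A higher loaded cost or a larger team scales it linearly.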

The bigger win isn't just cost savings—it's catching real threats faster. When your analysts aren't spending 80 percent of their time on noise, they can focus on the 20 percent that actually matters. That's how you move from 204 days to identify a breach to hours.

Getting Started

You don't have to automate everything on day one. Start with a single high-volume, well-understood alert type—phishing reports are the classic choice because they're high volume, well-defined, and relatively low risk to auto-remediate.

- Map your current workflow for that alert type. Every click, every lookup, every decision point.

- Set up your OpenClaw agent with the intake, enrichment, and disposition logic above.

- Run in shadow mode for two weeks. The agent triages alerts and logs its recommendations, but a human still makes the final call. Compare results.

- Enable auto-close for high-confidence false positives first (lowest risk).

- Gradually enable auto-remediation for confirmed malicious, standard playbooks.

- Review weekly. Tune rules, review overrides, expand to the next alert type.
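The shadow-mode step is easier to act on with an explicit go/no-go check. Here is a minimal sketch in plain Python: tally agent recommendations against human decisions, then only enable auto-close once the agent has proposed enough closures and humans agreed with nearly all of them. The function names, the 200-sample minimum, and the 98 percent agreement bar are assumptions to tune for your own risk tolerance.

```python
from collections import Counter

def shadow_report(pairs):
    """Tally (agent_recommendation, human_decision) pairs into a confusion count."""
    return Counter(pairs)

def ready_for_auto_close(confusion, min_samples=200, min_precision=0.98):
    """True once auto-close was proposed often enough and humans nearly always agreed."""
    proposed = sum(n for (agent, _), n in confusion.items() if agent == "auto_close")
    if proposed < min_samples:
        return False  # not enough evidence yet, keep shadowing
    agreed = confusion[("auto_close", "auto_close")]
    return agreed / proposed >= min_precision


pairs = [("auto_close", "auto_close")] * 199 + [("auto_close", "escalate_to_human")]
confusion = shadow_report(pairs)
print(ready_for_auto_close(confusion))  # True: 199 of 200 proposals confirmed
```

The sample-size floor matters as much as the agreement rate. Ten out of ten correct closures tells you very little; 199 out of 200 tells you a lot.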

Within 90 days, you'll have a meaningful percentage of your alert volume handled automatically with higher consistency than manual triage.

If you want to skip the build-from-scratch phase, browse the pre-built security automation agents on Claw Mart—there are incident triage templates, phishing response workflows, and enrichment pipelines ready to customize and deploy. The community has already solved many of the integration challenges you'd otherwise spend weeks on.

For teams that want the agent built to their exact specifications without pulling engineers off other projects, Clawsourcing connects you with experienced OpenClaw builders who've deployed these systems in production SOCs. Describe what you need, get matched with a builder, and have a working triage agent in weeks instead of months.

Your analysts are too expensive and too valuable to spend their days copy-pasting hashes into search bars. Let the agent handle the volume. Let the humans handle the judgment. That's the division of labor that actually works.