AI Medical Coder: Assign ICD-10 and CPT Codes Instantly

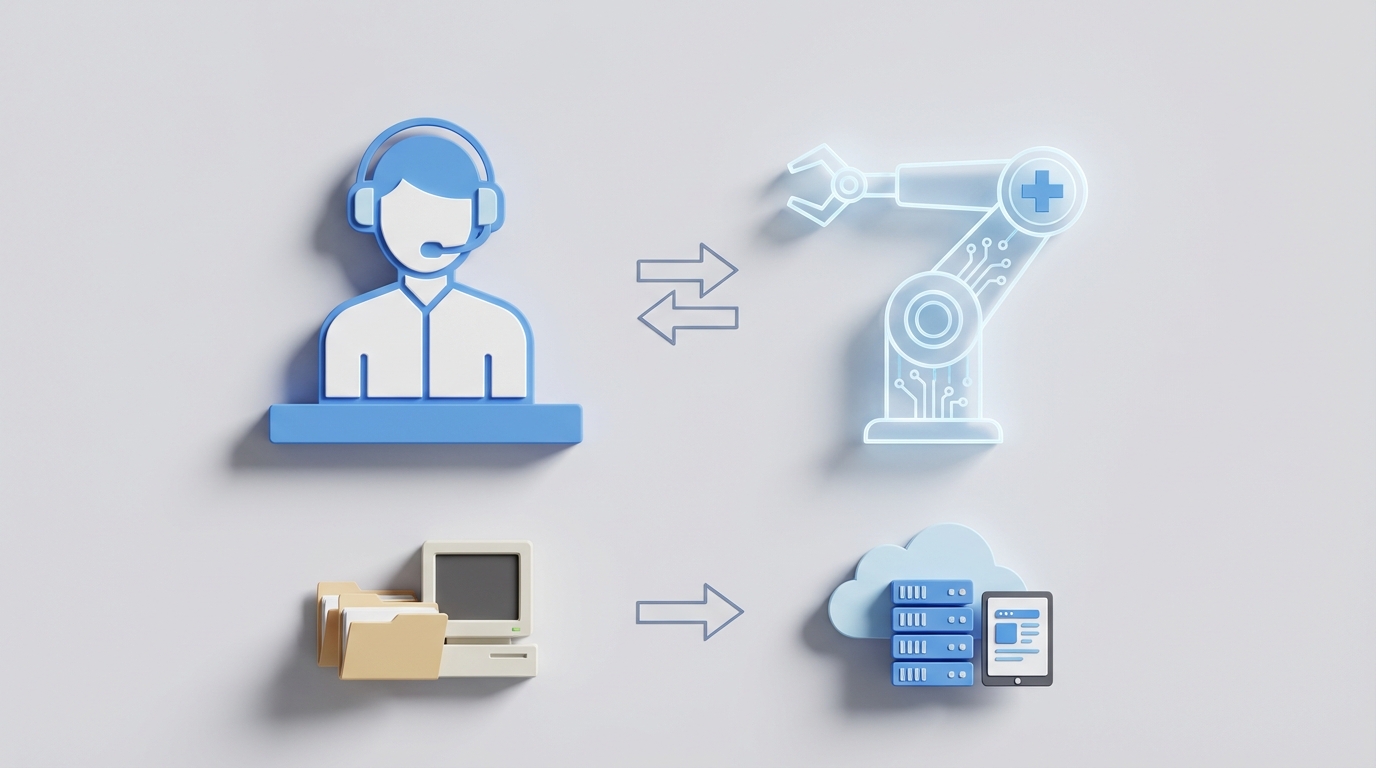

Replace Your Medical Coder with an AI Medical Coder Agent

Most healthcare organizations are paying $65,000–$110,000 per year (salary plus benefits plus taxes) for someone to do a job that is, frankly, about 70% pattern matching. That's not a knock on medical coders — it's a description of what the work actually involves. And pattern matching at scale is exactly what AI agents do best.

I'm going to walk you through what a medical coder actually does every day, what it really costs you, which pieces an AI agent on OpenClaw can handle right now, which pieces still need a human, and how to actually build the thing. No hand-waving. No "AI will revolutionize everything" fluff. Just the practical breakdown.

What a Medical Coder Actually Does All Day

If you've never sat next to a medical coder for eight hours, here's the reality. They spend their day doing roughly five things:

1. Reviewing medical records (50–70% of their time)

This is the bulk of the job. They open a chart — physician notes, lab results, operative reports, imaging — and read through it to identify every billable service. For a simple outpatient visit, this takes 2–5 minutes. For an inpatient surgical case with comorbidities, it's 10–30 minutes. A productive coder processes 50–150 charts per day, depending on complexity.

2. Assigning codes

They translate what they read into standardized codes: ICD-10-CM/PCS for diagnoses and procedures, CPT/HCPCS for services, plus modifiers. There are over 70,000 ICD-10-CM codes alone, and each annual update adds, revises, or deletes hundreds of them. This isn't creative work; it's lookup and application, with judgment calls on edge cases.

3. Querying providers

About 15–25% of their time goes to chasing doctors for clarification. The note says "possible pneumonia" — but is it confirmed? You can't code a "possible." So they fire off a query through the EHR portal or email and wait. And wait. Poor documentation causes about 30% of these delays.

4. Compliance verification

Every code has to pass HIPAA rules, Medicare/Medicaid requirements, and medical necessity checks. Different payers have different rules. Blue Cross wants one thing, Medicare wants another. The coder has to know (or look up) which rules apply to which claim.

5. Audits and denials management

When claims get rejected — and 5–10% do, on average — the coder reviews the denial, figures out what went wrong, corrects the code, and resubmits. This eats 10–20% of their time and is some of the most tedious work in the entire role.

That's the job. It's important, detail-oriented, and absolutely critical for revenue cycle management. It's also repetitive, rule-bound, and overwhelmingly text-based — which makes it a near-perfect candidate for automation.

The Real Cost of This Hire

Let's talk money, because the salary number alone is misleading.

The median annual salary for a medical coder in the US is $50,750 according to the Bureau of Labor Statistics (May 2023 data). But that's the median. Here's the fuller picture:

| Level | Typical pay |
|---|---|
| Entry-level (CPC certification) | $38,000–$45,000 per year |
| Experienced (5+ years, CCS) | $60,000–$80,000 per year |
| Top 10% / consulting | $82,000+ per year |
| Contract hourly rates | $30–$50/hr |

Now add the employer costs that nobody mentions in job postings: benefits, payroll taxes, workers' comp, PTO, and health insurance add 30–40% on top. That $50,750 median becomes $66,000–$71,000 in real cost. An experienced coder at $70,000 salary costs you $91,000–$98,000 fully loaded.
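The loaded-cost arithmetic above is easy to rerun for your own salary bands. The sketch below assumes only the 30–40% overhead range quoted in this section; the function name and defaults are illustrative.

```python
def fully_loaded_cost(salary, overhead_low=0.30, overhead_high=0.40):
    """Return the (low, high) annual employer cost for a base salary.

    The 30-40% overhead range comes from the paragraph above; adjust it
    to match your own benefits and payroll-tax burden.
    """
    low = round(salary * (1 + overhead_low))
    high = round(salary * (1 + overhead_high))
    return low, high


for salary in (50_750, 70_000):
    low, high = fully_loaded_cost(salary)
    print(f"${salary:,} salary -> ${low:,} to ${high:,} fully loaded")
```

For the $50,750 median this lands on roughly $66,000 to $71,000, and for a $70,000 salary on $91,000 to $98,000, matching the figures above.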

And that's assuming they stay. The turnover rate for medical coders is around 40% according to AAPC surveys. Every time one leaves, you're looking at recruiting costs, onboarding, and 2–3 months of ramp-up time before the replacement hits full productivity. CPC certification alone costs $400+ per attempt, and you often end up paying for it.

Then there's the annual retraining. With hundreds of ICD-10 code changes every year, coders need ongoing education. AAPC membership runs $200/year, continuing education units cost time and money, and if your coder falls behind on code changes, you eat the denials.

One experienced medical coder, kept and maintained, costs you somewhere between $75,000 and $110,000 per year when you account for everything. Two coders for coverage and volume? You're north of $150,000.

What AI Handles Right Now

Let me be specific about what's actually possible today — not in some theoretical future, but in production systems processing real claims.

Routine outpatient coding: AI handles this.

Simple E/M visits, lab orders, injections, straightforward imaging — these follow clear, well-documented patterns. An AI agent built on OpenClaw can ingest the clinical note, extract diagnoses and procedures using NLP, match them to ICD-10 and CPT codes, apply appropriate modifiers, and output a coded claim. For these routine cases, AI achieves 85%+ accuracy, and with feedback loops, that number climbs.

KLAS Research's 2026 CAC report shows that computer-assisted coding improves speed by 20–50% and that 60% of US hospitals already use some form of it. The question isn't whether AI can do medical coding — it's whether you've built your agent correctly.

Data extraction from unstructured notes: AI handles this.

Modern NLP can pull diagnoses, procedures, medications, and relevant history from free-text physician notes with 85%+ accuracy. This is the same technology that powers tools at organizations like Mass General Brigham (via CodaMetrix) and Cleveland Clinic (via 3M's CAC platform). OpenClaw gives you the same NLP pipeline without the enterprise sales cycle.

Compliance flagging: AI handles this.

An OpenClaw agent can check every code against payer-specific rules, flag missing documentation, verify medical necessity, and run HCC (Hierarchical Condition Category) risk adjustment — automatically. Optum's AI tools cut query volume by 40% at Kaiser Permanente using similar logic. You can build the same thing.

Denial prediction: AI handles this.

By training on your historical claims data, an OpenClaw agent can predict which claims are likely to be denied before you submit them. CodaMetrix reduced denial rates by 20% at Mass General Brigham with this approach. Catching a bad claim before it ships is worth $20–$50 per claim in avoided rework.

Volume processing: AI crushes this.

A human coder processes 50–150 charts per day. An AI agent processes thousands. It doesn't take breaks, doesn't get fatigued at 3 PM, doesn't need PTO, and doesn't quit after 18 months because the work is monotonous. For sheer throughput on routine cases, there's no comparison.

Here's what the numbers look like in practice. Cleveland Clinic reportedly saved $5M per year with AI-assisted coding. Nuance's Code Assist (now under Microsoft) saves coders 2–3 hours per day at systems like Emory Healthcare and Johns Hopkins. Synaptec Health cut coding costs by 50% for specialty and radiology coding at Napa Valley Coast Health. These aren't pilot programs. These are production deployments.

What Still Needs a Human

I promised no hand-waving, so here are the limits.

Complex inpatient cases with multiple comorbidities. When a patient has five diagnoses interacting with two surgical procedures and a complication, the coding requires clinical reasoning that AI still struggles with. Surgical bundling rules, sequencing logic for comorbidities, and the judgment calls about what's clinically relevant versus incidental — this is where experienced CCS-certified coders earn their salary.

Ambiguous documentation. When the physician writes "rule out MI" versus "acute MI," that distinction changes everything. AI can flag the ambiguity, but a human needs to interpret context and query the provider appropriately. Handwritten notes (yes, they still exist) are another gap.
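Flagging this kind of ambiguity does not need anything sophisticated to get started. The sketch below is a plain keyword match, not a clinical NLP model: the term list is a hypothetical, non-exhaustive starting point, and every hit is meant to feed a provider query, not a coding decision.

```python
import re

# Hypothetical, non-exhaustive list of hedging phrases. A real CDI team
# would maintain and tune its own list.
UNCERTAIN_TERMS = [
    "possible", "probable", "suspected", "likely",
    "questionable", "rule out", "r/o",
]

# Match whole terms only, so "unlikely" does not trigger on "likely".
_PATTERN = re.compile(
    r"(?<!\w)(" + "|".join(re.escape(t) for t in UNCERTAIN_TERMS) + r")(?!\w)",
    re.IGNORECASE,
)


def find_uncertain_phrases(note):
    """Return (term, sentence) pairs for each hedged statement in a note."""
    hits = []
    for sentence in re.split(r"(?<=[.!?])\s+", note.strip()):
        for match in _PATTERN.finditer(sentence):
            hits.append((match.group(1).lower(), sentence))
    return hits


note = "Patient admitted with chest pain. Rule out MI. Possible pneumonia on chest X-ray."
for term, sentence in find_uncertain_phrases(note):
    print(f"needs provider query [{term}]: {sentence}")
```

A check like this only surfaces candidates. Deciding whether the hedge matters for the claim, and how to word the query, stays with the human reviewer.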

Provider communication. Generating a query template? AI does that. Having the professional relationship and clinical vocabulary to negotiate with a reluctant surgeon who doesn't want to amend their note? That's a human skill.

Appeals writing. When a claim is denied and you need to construct a persuasive, clinically grounded appeal to a payer, AI can draft it, but a human needs to review, refine, and certify it. The legal liability alone requires human sign-off.

Final certification. This is the big one. Someone has to certify that the codes are accurate. Regulatory liability doesn't transfer to software. CMS doesn't accept "the AI did it" as a defense in a fraud audit. A human — ideally a certified coder — needs to be the last set of eyes on anything non-routine.

The realistic model right now is what the industry calls "AI first-pass." The agent handles 70–80% of cases autonomously (routine outpatient, straightforward inpatient). A human reviews the remaining 20–30% plus spot-checks the AI's work. This is where the cost savings come from: you don't eliminate the human, you turn two or three full-time coders into one part-time reviewer.

By 2027, Gartner projects AI will handle 50–70% of coding tasks end-to-end. That trajectory means building now, not waiting.

How to Build an AI Medical Coder Agent with OpenClaw

Here's the practical part. OpenClaw lets you build an AI agent that handles the medical coding workflow described above, and the six steps below show one way to structure it.

Step 1: Define Your Coding Workflow as Agent Tasks

Break the medical coding process into discrete tasks your OpenClaw agent will execute:

    agent: medical_coder
    tasks:
      - name: chart_ingestion
        description: "Ingest and parse clinical documentation from EHR export"
        input: "HL7 FHIR bundle or raw clinical note (text/PDF)"
        output: "Structured clinical summary (JSON)"

      - name: code_assignment
        description: "Assign ICD-10-CM, CPT/HCPCS codes based on extracted clinical data"
        input: "Structured clinical summary"
        output: "Proposed code set with confidence scores"

      - name: compliance_check
        description: "Validate codes against payer rules, medical necessity, bundling logic"
        input: "Proposed code set + payer ID"
        output: "Validation report with flags/warnings"

      - name: denial_risk_scoring
        description: "Score claim for denial probability based on historical patterns"
        input: "Validated code set + payer history"
        output: "Risk score (0-100) + flagged issues"

      - name: query_generation
        description: "Generate provider query for documentation gaps"
        input: "Validation flags + original clinical note"
        output: "Structured query (CDI format)"

Step 2: Build the NLP Extraction Layer

Your agent's first job is turning unstructured physician notes into structured data. OpenClaw's NLP pipeline handles this:

    from openclaw import Agent, NLPExtractor

    coder_agent = Agent("medical_coder")

    extractor = NLPExtractor(
        model="openclaw-clinical-v3",
        entity_types=[
            "diagnosis",
            "procedure",
            "medication",
            "lab_result",
            "vital_sign",
            "laterality",
            "severity",
            "chronicity"
        ],
        context_window=8192,
        confidence_threshold=0.82
    )

    clinical_note = """
    Patient presents with acute exacerbation of chronic systolic heart failure,
    NYHA Class III. EF 30% on echo. Started on IV furosemide 40mg BID.
    History of Type 2 DM, CKD Stage 3b, and prior CABG x3 (2019).
    Chest X-ray shows bilateral pleural effusions.
    """

    extraction = coder_agent.run_task(
        "chart_ingestion",
        extractor=extractor,
        input_text=clinical_note
    )

    # Output: structured JSON with diagnoses, procedures,
    # confidence scores, and documentation quality flags

The key parameter here is confidence_threshold. Setting it at 0.82 means the agent only auto-codes when it's at least 82% confident. Anything below that gets routed to human review. Tune this based on your risk tolerance — higher threshold means more human review but fewer errors.
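To see the tradeoff concretely, here is a minimal standalone sketch of the threshold split. It does not call OpenClaw: the `Entity` class, the `split_by_confidence` helper, and the confidence values are all made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class Entity:
    text: str
    entity_type: str
    confidence: float


def split_by_confidence(entities, threshold=0.82):
    """Partition entities into (auto_code, human_review) lists."""
    auto_code = [e for e in entities if e.confidence >= threshold]
    human_review = [e for e in entities if e.confidence < threshold]
    return auto_code, human_review


# Made-up extraction results, for illustration only.
entities = [
    Entity("chronic systolic heart failure", "diagnosis", 0.95),
    Entity("CKD Stage 3b", "diagnosis", 0.88),
    Entity("Type 2 DM", "diagnosis", 0.84),
    Entity("bilateral pleural effusions", "diagnosis", 0.71),
]

for threshold in (0.82, 0.90):
    auto_code, human_review = split_by_confidence(entities, threshold)
    print(f"threshold {threshold:.2f}: "
          f"{len(auto_code)} auto-coded, {len(human_review)} sent to review")
```

With these sample values, raising the threshold from 0.82 to 0.90 moves two more entities into the human queue, which is the review-versus-error tradeoff described above.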

Step 3: Code Assignment with Rules Engine

This is where the agent maps extracted clinical concepts to actual billing codes:

    from openclaw import CodeMapper, RulesEngine

    mapper = CodeMapper(
        code_sets=["ICD-10-CM-2026", "CPT-2026", "HCPCS-2026"],
        guidelines="official_coding_guidelines_2025",
        payer_rules={
            "medicare": "cms_ncd_lcd_2025",
            "bcbs": "bcbs_commercial_rules_v12",
            "united": "optum_rules_2025"
        }
    )

    rules = RulesEngine(
        bundling_rules=True,
        modifier_logic=True,
        sequencing="principal_first",
        mcc_cc_optimization=True  # Maximize DRG accuracy
    )

    code_result = coder_agent.run_task(
        "code_assignment",
        mapper=mapper,
        rules=rules,
        clinical_data=extraction.output
    )

    # Returns:
    # {
    #   "icd10_cm": [
    #     {"code": "I50.23", "desc": "Acute on chronic systolic heart failure", "confidence": 0.94},
    #     {"code": "E11.22", "desc": "Type 2 DM with diabetic CKD", "confidence": 0.88},
    #     {"code": "N18.32", "desc": "CKD Stage 3b", "confidence": 0.91}
    #   ],
    #   "cpt": [...],
    #   "drg_estimate": "MS-DRG 291",
    #   "flags": ["verify_hf_acuity_with_provider"]
    # }

Notice the mcc_cc_optimization flag. This ensures the agent captures all Major Complication/Comorbidity and Complication/Comorbidity codes that affect DRG assignment — something less experienced human coders frequently miss, leaving revenue on the table.

Step 4: Compliance and Denial Prevention

    from openclaw import ComplianceChecker, DenialPredictor

    compliance = ComplianceChecker(
        hipaa=True,
        medical_necessity="medicare_ncd_lcd",
        documentation_requirements="cms_2025"
    )

    denial_model = DenialPredictor(
        trained_on="your_historical_claims_data",  # Connect your data
        payer_specific=True,
        lookback_months=24
    )

    validation = coder_agent.run_task(
        "compliance_check",
        checker=compliance,
        codes=code_result.output
    )

    risk = coder_agent.run_task(
        "denial_risk_scoring",
        predictor=denial_model,
        claim=code_result.output,
        payer="medicare"
    )

    # risk.score = 12 (low risk)
    # If score > 60, auto-route to human reviewer

The denial predictor is only as good as your historical data. If you have 24+ months of claims with denial outcomes, the model gets genuinely useful. Less than that and you're better off relying on the rules-based compliance checker alone until you accumulate enough data.
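One way to judge whether your history is deep enough is to compute plain denial rates per payer and code before training anything. The sketch below is a baseline, not the OpenClaw predictor: the tuple layout and the `min_claims` cutoff of 30 are assumptions chosen for illustration.

```python
from collections import defaultdict


def denial_rates(claims, min_claims=30):
    """Compute historical denial rates per (payer, code) pair.

    `claims` is an iterable of (payer, code, was_denied) tuples. Pairs with
    fewer than `min_claims` records are dropped because their rate is too
    noisy to act on. The default of 30 is an arbitrary illustrative cutoff.
    """
    totals = defaultdict(int)
    denied = defaultdict(int)
    for payer, code, was_denied in claims:
        totals[(payer, code)] += 1
        denied[(payer, code)] += int(was_denied)
    return {
        key: denied[key] / count
        for key, count in totals.items()
        if count >= min_claims
    }


# Made-up history: 50 Medicare claims for one code, 3 BCBS claims for another.
history = (
    [("medicare", "99214", False)] * 45
    + [("medicare", "99214", True)] * 5
    + [("bcbs", "93000", True)] * 3
)

for (payer, code), rate in denial_rates(history).items():
    print(f"{payer} / {code}: {rate:.0%} denied")
```

If most of your payer and code pairs fall below the cutoff, that is a sign you are in the "rely on the rules-based checker" situation described above.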

Step 5: Human-in-the-Loop Routing

This is the part most AI vendors gloss over, but it's the most important piece for production:

    from openclaw import ReviewRouter

    router = ReviewRouter(
        auto_approve_threshold=0.88,  # High confidence = auto-process
        human_review_threshold=0.70,  # Medium confidence = human queue
        reject_threshold=0.70,        # Below this = reject and flag
        complex_case_rules=[
            "inpatient_surgical",
            "multiple_comorbidities_gt_4",
            "trauma_codes",
            "oncology",
            "neonatal_critical_care"
        ]
    )

    decision = router.evaluate(
        codes=code_result.output,
        compliance=validation.output,
        denial_risk=risk.output
    )

    # decision.action = "auto_approve" | "human_review" | "reject"
    # decision.reason = "Confidence 0.94, denial risk 12, no complex flags"
    # decision.estimated_time_saved = "18 minutes"

The complex_case_rules list is critical. These are the case types that always go to a human regardless of confidence score. Surgical bundling for inpatient cases, oncology coding, and neonatal critical care are too high-stakes and too nuanced for full automation right now. Be honest about these boundaries — it protects you legally and financially.
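The decision order matters as much as the thresholds. Below is a standalone sketch of one plausible ordering, using the thresholds from this step and the denial-risk cutoff of 60 from Step 4. The `route_claim` function is hypothetical and only illustrates the logic; it is not how `ReviewRouter` is implemented.

```python
# Case types that always go to a human, taken from complex_case_rules above.
ALWAYS_HUMAN = frozenset({
    "inpatient_surgical",
    "multiple_comorbidities_gt_4",
    "trauma_codes",
    "oncology",
    "neonatal_critical_care",
})


def route_claim(confidence, denial_risk, case_tags,
                auto_approve=0.88, reject_below=0.70, max_denial_risk=60):
    """Return "auto_approve", "human_review", or "reject" for one claim."""
    # 1. Complex case types override everything, including low confidence.
    if ALWAYS_HUMAN & set(case_tags):
        return "human_review"
    # 2. Very low confidence: reject and flag instead of queueing.
    if confidence < reject_below:
        return "reject"
    # 3. A high predicted denial risk forces review even when confident.
    if denial_risk > max_denial_risk:
        return "human_review"
    # 4. Only confident, low-risk, non-complex claims are auto-approved.
    if confidence >= auto_approve:
        return "auto_approve"
    return "human_review"


print(route_claim(0.94, 12, []))            # routine, confident, low risk
print(route_claim(0.94, 12, ["oncology"]))  # complex case type
print(route_claim(0.60, 12, []))            # low confidence
```

Checking the complex-case list first is a deliberate choice: it keeps a high confidence score from ever bypassing the boundaries you set.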

Step 6: Deploy and Monitor

    from openclaw import Deploy, Monitor

    deployment = Deploy(
        agent=coder_agent,
        integration="epic_fhir_r4",  # Or cerner, athenahealth, etc.
        batch_size=100,              # Charts per batch
        schedule="continuous",       # Or "daily_6am"
        audit_sample_rate=0.05       # Random 5% human audit
    )

    monitor = Monitor(
        accuracy_target=0.95,
        denial_rate_target=0.04,     # Below 4%
        alert_on="accuracy_drop_gt_2pct",
        retrain_trigger="monthly"
    )

The audit_sample_rate of 5% means that even for auto-approved claims, 1 in 20 gets randomly selected for human review. This is your quality control loop. If the audit reveals the agent is drifting below your accuracy target, the monitor triggers a retrain using corrected data. This is how you maintain compliance and build organizational trust in the system over time.
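The audit loop itself is simple enough to sketch with the standard library. The helper names below are hypothetical and the reviewer results are made up; the 5% sample rate and 95% accuracy target come from the configuration above.

```python
import random


def select_for_audit(claim_ids, sample_rate=0.05, seed=None):
    """Pick a random sample of auto-approved claims for human audit."""
    claim_ids = list(claim_ids)
    if not claim_ids:
        return []
    # Always audit at least one claim, however small the batch.
    k = max(1, round(len(claim_ids) * sample_rate))
    return random.Random(seed).sample(claim_ids, k)


def audit_accuracy(reviewer_agreed):
    """Share of audited claims where the reviewer agreed with the agent."""
    if not reviewer_agreed:
        raise ValueError("no audit results to score")
    return sum(reviewer_agreed) / len(reviewer_agreed)


def below_target(accuracy, target=0.95):
    """True when audited accuracy has drifted under the target."""
    return accuracy < target


approved = [f"claim-{i:04d}" for i in range(200)]
sample = select_for_audit(approved, seed=7)

# Made-up reviewer outcomes: 9 agreements, 1 correction.
results = [True] * 9 + [False]
accuracy = audit_accuracy(results)
print(f"audited {len(sample)} of {len(approved)} claims, "
      f"accuracy {accuracy:.0%}, retrain needed: {below_target(accuracy)}")
```

A small audit sample gives a noisy accuracy estimate, so it is worth pooling several batches before acting on a single bad result.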

The Math That Makes This Obvious

Let's run the numbers on a mid-sized practice processing 300 charts per day:

Current state: 3 full-time coders × $85,000 fully loaded = $255,000/year. Plus turnover costs, training, management overhead. Call it $290,000.

With OpenClaw agent: AI handles 70–80% of charts autonomously. One part-time certified coder reviews edge cases and audits AI output (0.5 FTE at $85,000 = $42,500). OpenClaw platform costs vary, but you're looking at a fraction of even one coder's salary for the compute.

Conservative estimate: $200,000+ in annual savings, with faster turnaround, fewer denials, and 24/7 processing capability.
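Since platform pricing is the one number missing from this calculation, it helps to turn the estimate around and ask how much platform spend the $200,000 figure can absorb. The sketch below uses only the numbers from this section; the $30,000 platform cost in the usage line is a placeholder, not a quoted price.

```python
def annual_savings(current_cost, reviewer_fte, loaded_cost_per_fte, platform_cost):
    """Current annual coding cost minus the cost of the AI-first-pass setup."""
    new_cost = reviewer_fte * loaded_cost_per_fte + platform_cost
    return current_cost - new_cost


def max_platform_cost(current_cost, reviewer_fte, loaded_cost_per_fte, target_savings):
    """Largest platform spend that still leaves `target_savings` per year."""
    return current_cost - reviewer_fte * loaded_cost_per_fte - target_savings


# Figures from this section: $290,000 current state, 0.5 FTE at $85,000.
budget = max_platform_cost(290_000, 0.5, 85_000, target_savings=200_000)
print(f"Platform budget that preserves $200,000 in savings: ${budget:,.0f}")

# Placeholder platform cost, for illustration only.
print(f"Savings at $30,000 platform cost: "
      f"${annual_savings(290_000, 0.5, 85_000, 30_000):,.0f}")
```

On these inputs the $200,000 estimate holds as long as platform and compute costs stay under $47,500 per year.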

Cleveland Clinic saved $5M per year with a similar approach. Mass General Brigham cut coding turnaround by 25%. These aren't small organizations experimenting — they're major health systems running AI coding in production.

Start Building or Let Us Build It

You have two options.

Option one: Take the architecture above and build it yourself on OpenClaw. The platform gives you the NLP models, code mapping engines, compliance checkers, and deployment infrastructure. If you have a technical team that understands both software and revenue cycle management, you can have a working prototype in weeks.

Option two: If you don't want to staff this internally — or if you want it done right the first time without the learning curve — hire our Clawsourcing team to build it for you. We've built these agents before. We understand the compliance requirements, the EHR integrations, and the edge cases that trip up teams who are new to healthcare AI. We scope it, build it, deploy it, and hand you the keys.

Either way, the economics are clear. The technology works. The question is just whether you build it this quarter or next.