How to Run Multiple Agents Simultaneously Without Chaos

Let's get the uncomfortable truth out of the way first: most people who try running multiple AI agents simultaneously end up with an expensive, chaotic mess. I know because I've been there, watching agents argue with each other in circles while my token budget evaporates like water on a hot skillet.

The promise of multi-agent systems is seductive. You imagine a little digital team — a researcher feeding a writer feeding an editor — cranking out work while you do something else. The reality, for most people on most platforms, is closer to herding cats in a thunderstorm. Agents loop infinitely. They ignore their roles. They contradict each other. They burn through API credits on tasks a single well-prompted agent could handle in thirty seconds.

But here's the thing: multi-agent setups can work. They work really well, actually — when you architect them correctly. And after months of building with OpenClaw, I've landed on a set of patterns that consistently produce reliable, cost-effective multi-agent workflows without the chaos.

This post is the guide I wish I'd had when I started.

Why Multi-Agent Systems Usually Fail

Before we fix the problem, let's understand it. If you've already tried running multiple agents and hit a wall, you'll recognize at least a few of these:

The infinite loop problem. Agent A sends output to Agent B, which sends feedback to Agent A, which revises and sends back to Agent B, which sends more feedback... forever. This is the single most common failure mode. I've seen people report spending $18–40 on a single run that never actually produced a final output. The agents just kept talking to each other like two people who can't decide where to eat dinner.

Token burn and cost explosion. Every agent interaction is a full LLM call. When you have four or five agents communicating, cost doesn't grow linearly with the number of messages: each message includes the full conversation context, which grows with every turn, so every additional turn costs more than the one before it. A "simple" five-agent workflow can torch through tens of thousands of tokens before anything useful happens.
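To make the growing-context effect concrete, here's a rough back-of-the-envelope sketch. It assumes every message adds a fixed number of tokens and every call re-sends the whole conversation so far. The 500-token figure is invented for illustration, not measured, and real providers bill input and output tokens differently.

```python
def total_input_tokens(turns: int, tokens_per_message: int) -> int:
    """Total input tokens sent when every call re-sends the full history."""
    total = 0
    history = 0
    for _ in range(turns):
        history += tokens_per_message  # a new message joins the context
        total += history               # the whole context is sent again
    return total


# Hypothetical numbers: 500 tokens per message.
for turns in (5, 10, 20):
    print(turns, total_input_tokens(turns, 500))
```

Under these assumptions, doubling the number of turns roughly quadruples the tokens sent, which is why a conversation that never terminates gets expensive so quickly.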

Role drift. You give Agent C very specific instructions: "You are a code reviewer. Only review code. Do not write code." Three turns later, Agent C is writing code. This happens constantly, especially with less capable models, because the agent's assigned persona gets overwhelmed by the conversational context.

State and memory collapse. Agent A updates a shared document. Agent B still has the old version in its context. Agent D hallucinates a version that never existed. Nobody agrees on what the current state of the project is. Sound familiar?

Debugging nightmares. When something goes wrong in a single-agent system, you can trace the problem. When something goes wrong across five agents passing messages back and forth, you're reading through pages of logs trying to figure out which agent went off the rails and when. Someone on a Discord server I follow described it perfectly: "It's like trying to debug five drunk people on a conference call."

The communities building with various agent frameworks have mostly converged on the same conclusion: two to three agents is the practical ceiling for reliable work. Beyond that, complexity outpaces capability.

Unless you set things up right from the start.

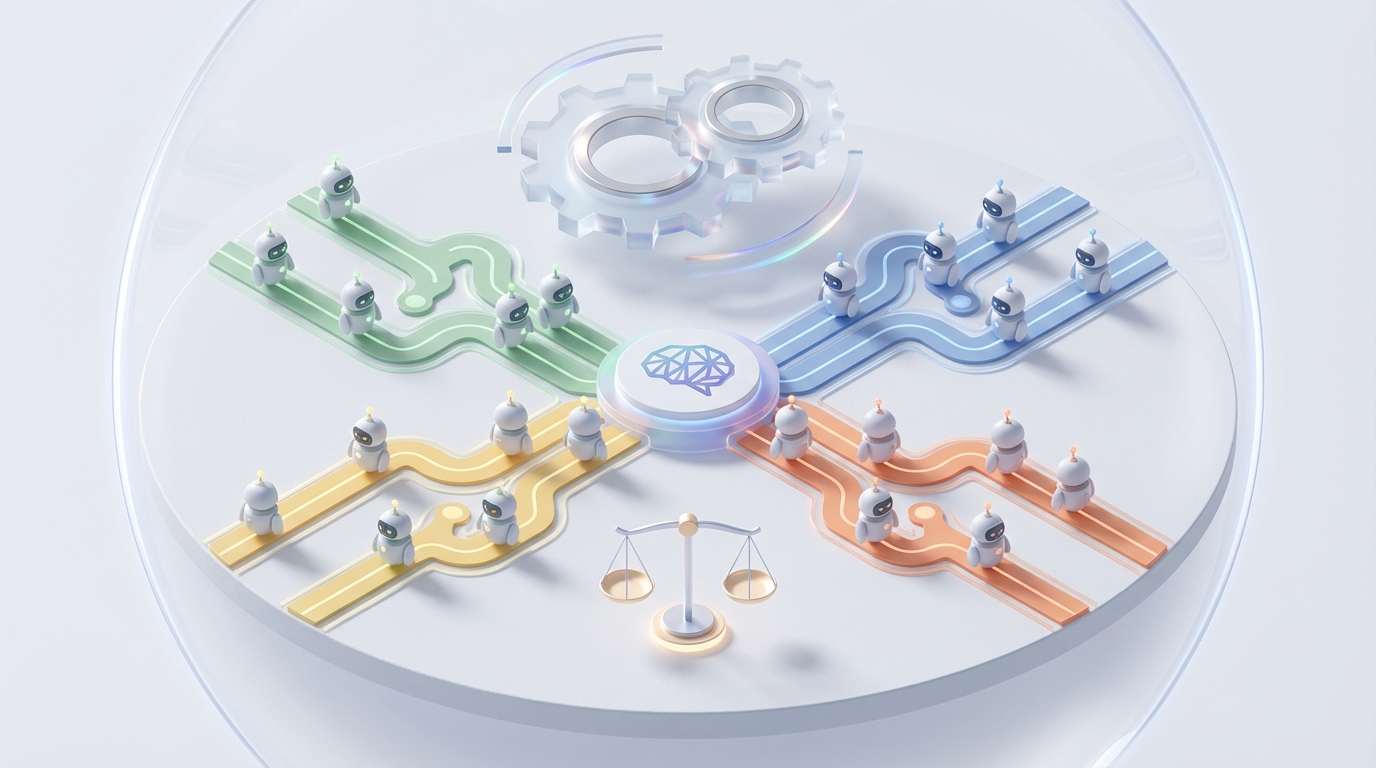

The OpenClaw Approach: Hierarchical, Not Democratic

OpenClaw gives you the primitives to build multi-agent systems that actually hold together, but the platform alone isn't enough. You need the right architecture. And the architecture that works — the one I've tested extensively and keep coming back to — is hierarchical orchestration.

Here's what that means in practice: instead of letting agents freely communicate with each other in some peer-to-peer free-for-all, you designate one agent as the supervisor and the rest as specialized workers. The supervisor is the only agent that talks to the workers. Workers never talk to each other directly.

In my experience, this single structural decision eliminates roughly 80% of the chaos.

Think of it like a well-run kitchen. You don't have the line cooks, the sous chef, and the dishwasher all shouting at each other. The head chef (supervisor) assigns tasks, collects results, and decides what happens next. Each station (worker agent) does one thing and reports back.

Here's a basic skeleton of how I set this up in OpenClaw:

supervisor:
  role: "Project Coordinator"
  instructions: |
    You manage a team of specialist agents. For each user request:
    1. Break the request into discrete subtasks
    2. Assign each subtask to the appropriate specialist
    3. Collect and review their outputs
    4. Compile the final deliverable
    Never perform specialist work yourself. Only coordinate.
  max_turns: 10
  model: gpt-4o

workers:
  researcher:
    role: "Research Specialist"
    instructions: |
      You receive a specific research question from the supervisor.
      Return a structured summary with sources. Nothing else.
      Do not editorialize. Do not write prose. Facts and sources only.
    max_turns: 1
    model: gpt-4o-mini

  writer:
    role: "Content Writer"
    instructions: |
      You receive research summaries and a content brief from the supervisor.
      Write the requested content based solely on provided materials.
      Do not conduct your own research. Do not deviate from the brief.
    max_turns: 1
    model: gpt-4o

  editor:
    role: "Editor"
    instructions: |
      You receive a draft from the supervisor.
      Return specific, actionable edits. Use the format:
      - [LINE/SECTION]: [ISSUE] → [SUGGESTED FIX]
      Do not rewrite the piece. Only flag issues and suggest fixes.
    max_turns: 1
    model: gpt-4o-mini

Notice a few critical details:

Workers get max_turns: 1. They receive a task, produce an output, and they're done. No back-and-forth. No opportunity to loop. This alone saves you a fortune in tokens and eliminates the infinite conversation problem.

The supervisor has a hard cap too. max_turns: 10 means the entire workflow must complete within ten supervisor actions. If it doesn't, it stops. You get a partial result instead of an infinite bill.

Instructions are absurdly specific about what agents should NOT do. "Do not conduct your own research." "Do not rewrite the piece." "Never perform specialist work yourself." Negative instructions are your best defense against role drift.

Cheaper models handle simpler tasks. The researcher and editor use gpt-4o-mini because their jobs are more constrained. The writer and supervisor use the full model because they need more nuance. This cuts costs dramatically without sacrificing quality where it matters.
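If it helps to see the turn caps as control flow rather than config, here's a minimal Python sketch of the idea. It is not OpenClaw's API: run_supervisor, the plan format, and the stub workers are all hypothetical names made up for this illustration, and the "workers" are plain functions rather than model calls.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical stand-in: a worker is any function from task text to output text.
Worker = Callable[[str], str]


def run_supervisor(
    plan: List[Tuple[str, str]],
    workers: Dict[str, Worker],
    max_turns: int = 10,
) -> Tuple[List[str], bool]:
    """Dispatch each (worker_name, task) once; stop when max_turns is reached.

    Returns the outputs gathered so far and whether the whole plan completed.
    """
    outputs: List[str] = []
    for turn, (name, task) in enumerate(plan):
        if turn >= max_turns:
            return outputs, False  # hard cap hit: hand back a partial result
        # Exactly one call per assignment, mirroring max_turns: 1 for workers.
        outputs.append(workers[name](task))
    return outputs, True


# Stub workers so the sketch runs without any model calls.
workers = {
    "researcher": lambda task: f"findings for: {task}",
    "writer": lambda task: f"draft based on: {task}",
    "editor": lambda task: f"edits for: {task}",
}
plan = [("researcher", "topic X"), ("writer", "brief Y"), ("editor", "draft Z")]

print(run_supervisor(plan, workers, max_turns=10))
print(run_supervisor(plan, workers, max_turns=2))
```

The second call hits the cap after two steps and returns what it has along with a False flag, which is the "partial result instead of an infinite bill" behavior in miniature.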

The Five Rules That Keep Things Stable

After building dozens of multi-agent workflows in OpenClaw, I've distilled my approach down to five rules. Break any of them and things start falling apart.

Rule 1: One Agent, One Job

Every agent should do exactly one thing. Not "research and summarize." Not "write and edit." One thing. If you find yourself using the word "and" in an agent's role description, split it into two agents.

This seems like overkill until you realize that role clarity is what prevents agents from stepping on each other's toes. A researcher that also summarizes will start editorializing. A writer that also edits will get stuck in self-revision loops. Keep them separate.

Rule 2: Structured Outputs, Always

Never let agents communicate in freeform prose. Every message between agents should follow a defined schema. In OpenClaw, I enforce this with structured output configs:

researcher:
  output_format:
    type: json
    schema:
      findings:
        type: array
        items:
          claim: string
          source: string
          confidence: "high | medium | low"
      summary: string
      gaps: array

When the researcher's output is a structured JSON object instead of a free-text paragraph, the downstream agents (and the supervisor) can parse it reliably. No ambiguity. No "well, I think they meant..." hallucinations. Just clean data flowing between nodes.
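On the receiving side, "parse it reliably" means rejecting anything that doesn't match the schema before it travels further. Here's a small sketch of that check using only Python's standard json module. The function name parse_findings is my own, and the field layout assumes summary and gaps sit alongside findings at the top level, as in the config above.

```python
import json

ALLOWED_CONFIDENCE = {"high", "medium", "low"}


def parse_findings(raw: str) -> dict:
    """Parse a researcher reply and reject anything that is off-schema."""
    data = json.loads(raw)  # raises json.JSONDecodeError (a ValueError) on non-JSON
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    for key, expected in (("findings", list), ("summary", str), ("gaps", list)):
        if not isinstance(data.get(key), expected):
            raise ValueError(f"missing or mistyped field: {key}")
    for item in data["findings"]:
        if not isinstance(item, dict):
            raise ValueError("each finding must be an object")
        if not isinstance(item.get("claim"), str) or not isinstance(item.get("source"), str):
            raise ValueError("each finding needs string 'claim' and 'source'")
        confidence = item.get("confidence")
        if not isinstance(confidence, str) or confidence not in ALLOWED_CONFIDENCE:
            raise ValueError("confidence must be high, medium, or low")
    return data


reply = (
    '{"findings": [{"claim": "c", "source": "s", "confidence": "high"}],'
    ' "summary": "short summary", "gaps": []}'
)
print(parse_findings(reply)["summary"])
```

A reply that fails this check never reaches the writer, so a malformed message costs one retry instead of a corrupted draft.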

Rule 3: Hard Limits on Everything

Set explicit limits on turns, tokens, and time for every single agent. No exceptions.

limits:
  max_turns_per_agent: 1
  max_supervisor_turns: 10
  max_tokens_per_response: 2000
  total_budget_tokens: 50000
  timeout_seconds: 120

The total_budget_tokens is especially important. This is your kill switch. Once the entire workflow has consumed 50,000 tokens (or whatever you set), it stops. You get whatever output exists at that point. This prevents the $40 surprise runs that make people swear off multi-agent systems entirely.
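The kill-switch logic itself is tiny. Here's one way it could look in Python, assuming each step reports how many tokens it used. TokenBudget and run_steps are hypothetical names for this sketch, not part of any platform, and the token counts are invented.

```python
from typing import Callable, List, Tuple


class TokenBudget:
    """Tracks token usage across the whole workflow."""

    def __init__(self, total: int) -> None:
        self.total = total
        self.used = 0

    def record(self, tokens: int) -> None:
        self.used += tokens

    @property
    def exhausted(self) -> bool:
        return self.used >= self.total


def run_steps(steps: List[Callable[[], Tuple[str, int]]], budget: TokenBudget) -> List[str]:
    """Run steps in order; stop as soon as the shared budget is spent."""
    outputs: List[str] = []
    for step in steps:
        if budget.exhausted:
            break  # kill switch: return whatever exists so far
        output, tokens = step()
        budget.record(tokens)
        outputs.append(output)
    return outputs


# Each stub step pretends to have used 30,000 tokens.
steps = [lambda n=n: (f"output {n}", 30_000) for n in range(3)]
budget = TokenBudget(total=50_000)
print(run_steps(steps, budget), budget.used)
```

Note that this version checks before each step, so the last step that runs can overshoot the cap. If you need a strict ceiling, you'd also pass the remaining budget down as the per-response token limit.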

Rule 4: No Agent-to-Agent Communication

I said this already, but it's worth its own rule because people keep trying to cheat on it. Workers do not talk to each other. Ever. All communication flows through the supervisor.

The moment you let Agent A send a message directly to Agent B, you've created an uncontrolled communication channel that the supervisor can't monitor or manage. That's where loops start. That's where contradictions emerge. That's where context diverges.

If the writer needs something from the researcher, the writer tells the supervisor, and the supervisor asks the researcher. Yes, this adds a step. That step is the load-bearing wall of your entire system.
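If you're wiring agents together yourself, you can enforce this rule in code instead of trusting prompts to follow it. Below is a minimal sketch of a message router that refuses any message without the supervisor on one end. Router and the agent names are hypothetical, chosen only for this example.

```python
from typing import List, Tuple

SUPERVISOR = "supervisor"
Message = Tuple[str, str, str]  # (sender, recipient, payload)


class Router:
    """Delivers a message only if the supervisor is on one end of it."""

    def __init__(self) -> None:
        self.log: List[Message] = []

    def send(self, sender: str, recipient: str, payload: str) -> None:
        if SUPERVISOR not in (sender, recipient):
            raise PermissionError(f"{sender} may not message {recipient} directly")
        self.log.append((sender, recipient, payload))


router = Router()
# The writer's request is relayed in two hops, both visible to the supervisor.
router.send("writer", SUPERVISOR, "need a source for claim 3")
router.send(SUPERVISOR, "researcher", "find a source for claim 3")
print(len(router.log))
```

A side benefit is that the log becomes a complete record of everything that happened, which makes the debugging problem from earlier much more tractable.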

Rule 5: Fail Fast, Fail Loudly

Configure your agents to return explicit error states instead of trying to "figure it out" when something goes wrong.

researcher:
  instructions: |
    ...
    If you cannot find reliable information on the topic, return:
    {"status": "failed", "reason": "Insufficient sources found for [topic]"}
    Do NOT make up information. Do NOT guess. Fail explicitly.

When a worker fails explicitly, the supervisor can make an intelligent decision: retry with different parameters, skip that subtask, or ask the user for clarification. When a worker silently hallucinates through a problem, you get garbage output that looks like real output, and you don't discover the problem until much later.
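Here's a sketch of what that supervisor-side decision could look like, assuming workers reply with JSON and signal failure with the status field shown above. The function name, the three outcome labels, and the retry policy are my own illustration, not a prescribed design.

```python
import json


def handle_worker_reply(raw: str, attempt: int, max_retries: int = 1) -> str:
    """Decide what to do with a worker reply: 'accept', 'retry', or 'escalate'."""
    try:
        reply = json.loads(raw)
    except json.JSONDecodeError:
        # An unparseable reply is treated like an explicit failure.
        reply = {"status": "failed", "reason": "unparseable reply"}
    if not isinstance(reply, dict) or reply.get("status") == "failed":
        # Retry a limited number of times, then hand the problem to the user.
        return "retry" if attempt < max_retries else "escalate"
    return "accept"


failed = '{"status": "failed", "reason": "Insufficient sources found for [topic]"}'
print(handle_worker_reply(failed, attempt=0))
print(handle_worker_reply(failed, attempt=1))
print(handle_worker_reply('{"findings": [], "summary": "", "gaps": []}', attempt=0))
```

The important part is that failure is a value the supervisor branches on, not something it has to infer from suspicious-looking prose.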

A Real Workflow: Content Research and Writing

Let me walk through a complete example that I actually use. This is a content production workflow for generating well-researched blog posts. Four worker agents, one supervisor.

The team:

- Supervisor: Coordinates the workflow, reviews quality, compiles final output

- Researcher: Takes a topic, returns structured findings from provided skills and tools

- Outliner: Takes research, produces a structured content outline

- Writer: Takes outline + research, writes the full draft

- Editor: Reviews the draft against the outline and research, returns specific edits

The flow:

User Request → Supervisor
  → Supervisor assigns topic to Researcher
  ← Researcher returns structured findings
  → Supervisor assigns findings to Outliner
  ← Outliner returns section-by-section outline
  → Supervisor sends outline + findings to Writer
  ← Writer returns full draft
  → Supervisor sends draft + outline to Editor
  ← Editor returns list of specific edits
  → Supervisor applies edits and compiles final post
Final Output → User

The entire thing runs in a linear pipeline. No branching, no loops, no agent debates. The supervisor moves the baton from one specialist to the next, checks each output before passing it forward, and assembles the final result.
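The same flow can be written as a short Python sketch. Everything here is a stand-in: run_pipeline and the stage tuples are hypothetical, the workers are stub functions rather than model calls, and the "check" is just a non-empty test where a real supervisor would validate against a schema.

```python
from typing import Callable, Dict, List, Tuple

Worker = Callable[[Dict[str, str]], str]
Stage = Tuple[str, List[str], Worker]  # (output name, input names, worker)


def run_pipeline(topic: str, stages: List[Stage]) -> Dict[str, str]:
    """Run stages in order; each worker sees only the inputs it is assigned."""
    state: Dict[str, str] = {"topic": topic}
    for output_name, input_names, worker in stages:
        inputs = {name: state[name] for name in input_names}
        result = worker(inputs)
        if not result.strip():
            # Stop the baton pass if a stage hands back nothing usable.
            raise RuntimeError(f"{output_name} stage returned nothing")
        state[output_name] = result
    return state


# Stub workers mirroring the flow above: researcher, outliner, writer, editor.
stages: List[Stage] = [
    ("findings", ["topic"], lambda i: f"facts about {i['topic']}"),
    ("outline", ["findings"], lambda i: f"outline from [{i['findings']}]"),
    ("draft", ["outline", "findings"], lambda i: f"draft following [{i['outline']}]"),
    ("edits", ["draft", "outline"], lambda i: f"edits for [{i['draft']}]"),
]

result = run_pipeline("agent orchestration", stages)
print(list(result))
```

Because each stage declares its inputs up front, no worker ever sees more context than it needs, which helps with both cost and role drift.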

Is it less "exciting" than a free-roaming swarm of agents collaborating autonomously? Absolutely. Does it actually work every single time, produce consistent output, and cost under $1 per run? Also absolutely.

Scaling Beyond Three Agents

The "two to three agents max" conventional wisdom exists because most people build flat, peer-to-peer systems. With hierarchical orchestration in OpenClaw, you can scale further — I've run reliable workflows with six or seven specialized workers — because the complexity doesn't compound the same way.

The key insight: adding a worker to a hierarchical system adds one new communication channel (worker ↔ supervisor). Adding a worker to a peer-to-peer system adds N new channels, where N is the number of agents already in the system (worker ↔ every other agent). That's why peer systems blow up at four agents and hierarchical systems stay manageable at seven.
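The arithmetic is easy to check. A fully connected group of n agents has n(n-1)/2 pairwise channels, while a supervisor with w workers has exactly w. A quick sketch (the function names are mine):

```python
def hierarchical_channels(workers: int) -> int:
    """One channel per worker, each to the supervisor."""
    return workers


def peer_channels(agents: int) -> int:
    """Every agent can talk to every other agent: n choose 2."""
    return agents * (agents - 1) // 2


for n in (2, 4, 7):
    print(n, hierarchical_channels(n), peer_channels(n))
```

Seven workers under one supervisor means seven channels to monitor. Seven peers talking freely means twenty-one, and every one of them is a place where a loop can start.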

That said, I'd still recommend starting small. Get two agents working perfectly before adding a third. Get three working before adding a fourth. Each new agent should solve a specific bottleneck you've identified, not just exist because "more agents = better."

When You Just Want It to Work

I've spent weeks iterating on these patterns — the YAML configs, the instruction templates, the output schemas, the limit settings. It's rewarding work if you enjoy the architecture side of things, but it's a lot of trial and error to get right.

If you'd rather skip the setup phase and start building right away, Felix's OpenClaw Starter Pack on Claw Mart is genuinely the fastest way I've found to get a working multi-agent setup running. For $29, you get pre-configured skills that handle the orchestration patterns I've described in this post — the hierarchical structure, the structured outputs, the hard limits, all of it. It's not a magic bullet, but it eliminates the cold-start problem of figuring out all the config from scratch. I wish it had existed when I started; it would have saved me a lot of wasted runs and a not-insignificant OpenAI bill.

The Honest Assessment

Multi-agent systems in OpenClaw are powerful but not magic. They work when you respect the constraints:

- Hierarchical over democratic. One supervisor, specialized workers.

- Constrained over autonomous. Hard limits on turns, tokens, and time.

- Structured over freeform. A JSON schema at every agent handoff.

- Explicit over implicit. Agents should fail loudly, not hallucinate quietly.

- Incremental over ambitious. Start with two agents. Prove it works. Then add more.

The people who get burned by multi-agent systems are usually the ones who try to build a full autonomous team on day one. The people who succeed are the ones who build boring, predictable pipelines where each agent does one thing well and the supervisor keeps everyone on task.

Start with the simplest workflow that solves your actual problem. Get it working reliably. Then, and only then, add complexity.

That's it. That's the whole secret. It's not glamorous, but it works, and in the world of AI agents, "works consistently" is worth more than "looks impressive in a demo."

Now go build something.