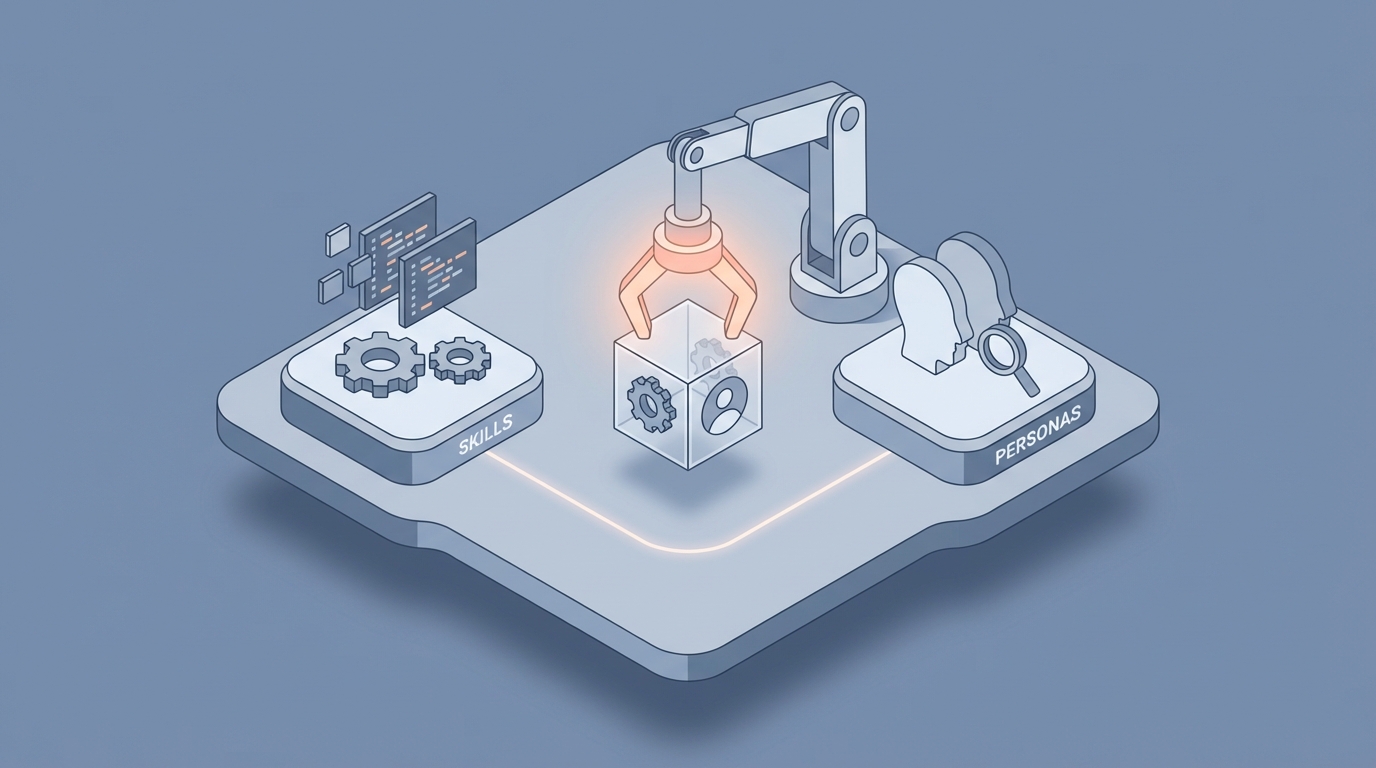

Skills vs Personas in OpenClaw: How to Use Both Effectively

Most people building agents in OpenClaw hit the same wall within their first week: they set up a persona that sounds amazing in chat, then bolt on a few skills, and suddenly the whole thing falls apart. The agent either ignores its tools and hallucinates answers, or it calls tools robotically and loses every trace of the persona you spent two hours crafting.

I've been there. I wasted an embarrassing amount of time trying to fix this with better prompts before I realized the problem isn't the prompts — it's the architecture. Skills and personas in OpenClaw are two fundamentally different mechanisms that serve fundamentally different purposes, and if you treat them like they're interchangeable or throw them into the same bucket, you're going to have a bad time.

Let me walk you through exactly how to think about this, how to set it up correctly, and the specific patterns that actually work in production.

The Core Problem: Personas and Skills Fight Each Other

Here's what happens in practice. You create an agent with a detailed persona — say, a meticulous security researcher who's cautious, thorough, and skeptical of every input. Then you attach skills like web_search, code_execution, and vulnerability_scan. You expect a careful, methodical agent that leverages its tools.

What you actually get is one of two failure modes:

Failure Mode 1: The Overconfident Expert. Your persona is so convincing that the agent decides it already knows the answer. "As a world-class security researcher with deep expertise, I can tell you that this vulnerability is likely a SQL injection..." — and it never calls vulnerability_scan at all. The persona's confidence overrides the skill-calling logic.

Failure Mode 2: The Soulless Tool-Caller. You load up enough tool-calling instructions that the agent dutifully calls skills but completely drops the persona. It returns raw JSON outputs with zero personality, zero contextual reasoning, zero of the careful skepticism you wanted. You've built a glorified API wrapper.

Both of these suck. And they happen because most people (understandably) configure personas and skills in the same place, with the same weight, competing for the same context window.

What Skills Actually Are in OpenClaw

Let's get precise. In OpenClaw, a skill is a discrete, executable capability with defined inputs, outputs, and invocation conditions. It's not a suggestion. It's not a personality trait. It's a function the agent can call.

A well-defined skill looks something like this in your OpenClaw config:

    skills:
      - name: web_search
        description: "Search the web for current information. Use when the query requires data beyond training cutoff or real-time results."
        parameters:
          query:
            type: string
            required: true
          max_results:
            type: integer
            default: 5
        invocation_hint: "Prefer this over generating an answer when the user asks about current events, prices, availability, or recent changes."
      - name: vulnerability_scan
        description: "Run a vulnerability assessment against a target URL or IP. Returns severity ratings and CVE references."
        parameters:
          target:
            type: string
            required: true
          scan_type:
            type: string
            enum: [quick, full, passive]
            default: quick
        invocation_hint: "Always use this before making claims about a target's security posture. Never guess at vulnerabilities."

The key fields most people skip are invocation_hint and the specificity of description. These aren't just documentation — they're routing instructions. OpenClaw uses them to determine when the agent should reach for a tool versus respond from its own context. Vague descriptions lead to vague tool selection.

The rule of thumb: your skill description should answer "when should the agent use this instead of answering directly?" If it doesn't answer that question, rewrite it.
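One way to make that rule of thumb enforceable is a small lint pass over your skill definitions before you load them. The sketch below is plain Python, not OpenClaw's API: the `Skill` dataclass, the `GENERIC_HINTS` and `TRIGGER_WORDS` lists, and `lint_skill` are all hypothetical names I made up for illustration, and the heuristics are deliberately crude.

```python
from dataclasses import dataclass

# Hypothetical phrases that say nothing about *when* to use a skill.
GENERIC_HINTS = {"use when needed", "use as needed", "use when appropriate"}

# Words that usually signal a "when to use this" condition (an assumption).
TRIGGER_WORDS = ("when", "before", "after", "if", "only")


@dataclass
class Skill:
    name: str
    description: str
    invocation_hint: str = ""


def lint_skill(skill: Skill) -> list[str]:
    """Return human-readable warnings for a vague skill definition."""
    warnings = []
    hint = skill.invocation_hint.strip().lower().rstrip(".")
    if not hint:
        warnings.append(f"{skill.name}: invocation_hint is empty")
    elif hint in GENERIC_HINTS:
        warnings.append(f"{skill.name}: invocation_hint is generic")
    # Look for any trigger word across the description and the hint.
    text = f"{skill.description} {skill.invocation_hint}".lower()
    words = text.replace(",", " ").replace(".", " ").split()
    if not any(w in words for w in TRIGGER_WORDS):
        warnings.append(f"{skill.name}: no trigger condition found")
    return warnings
```

A skill with the hint "Use when needed." gets flagged as generic, while the `vulnerability_scan` definition above passes cleanly because its hint says "before making claims".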

What Personas Actually Are in OpenClaw

A persona is not a system prompt. Or rather, it shouldn't be just a system prompt. In OpenClaw, a persona is a behavioral constraint layer that shapes how the agent communicates, reasons, and presents information — without overriding what actions it takes.

Think of it this way: the skill system decides what to do. The persona decides how to do it and how to talk about it.

Here's a well-structured persona config:

    persona:
      name: "SecureBot"
      identity: "A meticulous security researcher who treats every system as potentially compromised."
      communication_style:
        tone: "Direct, cautious, slightly paranoid"
        verbosity: "Concise but thorough; never skips caveats"
        formatting: "Uses bullet points for findings. Always includes confidence levels."
      behavioral_rules:
        - "Never claim a system is secure without running a scan first."
        - "Always caveat findings with scope limitations."
        - "When uncertain, say so explicitly rather than hedging with vague language."
      response_framing: "Frame all outputs as professional security assessments, even in casual conversation."

Notice what's NOT in here: anything about which tools to use, when to call them, or how to format tool inputs. That's the skill layer's job. The persona handles voice, reasoning style, and communication patterns.
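If you want to catch tool instructions leaking into a persona before they cause trouble, a simple check is to scan the persona's rules for skill names. This is a minimal sketch in plain Python under my own assumptions: `find_skill_leaks` is a hypothetical helper, not something OpenClaw ships, and it only catches rules that name a skill directly (either as `lookup_order` or as "lookup order").

```python
import re


def find_skill_leaks(persona_rules: list[str], skill_names: list[str]) -> list[tuple[str, str]]:
    """Return (rule, skill_name) pairs where a persona rule names a skill."""
    leaks = []
    for rule in persona_rules:
        for name in skill_names:
            # Match the identifier itself or its spaced form ("lookup order").
            pattern = re.escape(name).replace("_", "[_ ]")
            if re.search(rf"\b{pattern}\b", rule, flags=re.IGNORECASE):
                leaks.append((rule, name))
    return leaks
```

Any rule it returns is a candidate for moving into that skill's invocation_hint instead.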

The Architecture That Actually Works

The pattern that consistently produces reliable agents in OpenClaw is what I call layered separation with a routing bridge. Here's how it works:

Layer 1: The Skill Router. This is a lightweight decision layer that looks at the user's input and determines which skills (if any) need to be invoked. It has access to skill metadata — descriptions, invocation hints, parameter schemas — but it has minimal persona context. Its job is mechanical: match intent to capability.

Layer 2: The Skill Executor. This layer actually runs the skills, handles retries, validates outputs, and manages errors. Pure function execution. No personality. No fluff.

Layer 3: The Persona Synthesizer. This layer takes the raw skill outputs and the user's original query, then generates the final response through the lens of the persona. This is where your security researcher becomes cautious and methodical, where your customer support agent becomes empathetic and helpful, where your coding assistant becomes opinionated and precise.

In OpenClaw, you configure this separation in your agent pipeline:

    agent:
      name: "SecureBot"
      pipeline:
        - stage: routing
          model: fast
          context_includes: [skills_metadata, user_query]
          context_excludes: [persona_details]
        - stage: execution
          mode: skill_dispatch
          error_handling: retry_with_backoff
          max_retries: 2
        - stage: synthesis
          model: primary
          context_includes: [persona, skill_outputs, user_query, conversation_history]
          instructions: "Synthesize skill outputs into a response that matches the persona's communication style and behavioral rules."

The magic is in context_includes and context_excludes. By explicitly keeping persona details out of the routing stage, you prevent the "overconfident expert" problem. The router doesn't know it's supposed to be a paranoid security researcher — it just knows what tools are available and when they should be used. By the time the persona layer kicks in, the tools have already been called and the data is there.
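To make the separation concrete, here is a rough sketch of the three stages as plain Python functions. None of this is OpenClaw's actual implementation: the function names, the keyword-based `route` stand-in (a real router would be a model call), and the `Persona` dataclass are all assumptions for illustration, and the backoff delay between retries is left out for brevity. The point is only that `route` and `execute` never receive the persona.

```python
from dataclasses import dataclass
from typing import Callable

SkillFn = Callable[[str], dict]


@dataclass
class Persona:
    name: str
    tone: str


def route(query: str, triggers: dict[str, list[str]]) -> list[str]:
    """Stage 1: pick skills from metadata only. No persona in scope."""
    q = query.lower()
    return [name for name, words in triggers.items() if any(w in q for w in words)]


def execute(names: list[str], skills: dict[str, SkillFn], query: str,
            max_retries: int = 2) -> dict[str, dict]:
    """Stage 2: run each selected skill, retrying on failure."""
    outputs = {}
    for name in names:
        for attempt in range(max_retries + 1):
            try:
                outputs[name] = skills[name](query)
                break
            except RuntimeError as exc:
                # After the last retry, record the failure instead of raising.
                if attempt == max_retries:
                    outputs[name] = {"error": str(exc)}
    return outputs


def synthesize(persona: Persona, query: str, outputs: dict[str, dict]) -> str:
    """Stage 3: build the prompt the primary model would see."""
    return (f"You are {persona.name}. Tone: {persona.tone}.\n"
            f"User asked: {query}\n"
            f"Skill outputs: {outputs}")
```

The persona only appears in the signature of `synthesize`, which is the code-level equivalent of excluding persona_details from the routing stage.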

A Concrete Example: Customer Support Agent

Let's build something real. You're creating a customer support agent that should be warm, empathetic, and resolution-oriented — but also needs to actually look up orders, process refunds, and escalate tickets instead of just typing comforting words for four turns.

Skills:

    skills:
      - name: lookup_order
        description: "Retrieve order details by order ID or customer email."
        parameters:
          identifier:
            type: string
            required: true
        invocation_hint: "Use immediately when customer mentions an order number or asks about order status. Do not guess order details."
      - name: process_refund
        description: "Initiate a refund for a specific order. Returns confirmation ID."
        parameters:
          order_id:
            type: string
            required: true
          reason:
            type: string
            required: true
        invocation_hint: "Use only after confirming the order exists via lookup_order. Requires explicit customer consent."
      - name: escalate_ticket
        description: "Escalate the conversation to a human agent with full context."
        parameters:
          priority:
            type: string
            enum: [low, medium, high, critical]
          summary:
            type: string
            required: true
        invocation_hint: "Use when the issue cannot be resolved with available tools, or when the customer explicitly asks for a human."

Persona:

    persona:
      name: "SupportPro"
      identity: "A friendly, efficient customer support specialist who genuinely cares about resolving issues."
      communication_style:
        tone: "Warm but professional. Never robotic, never overly casual."
        verbosity: "Match the customer's energy: brief for simple questions, thorough for complex issues."
        formatting: "Use the customer's name when available. Summarize actions taken clearly."
      behavioral_rules:
        - "Acknowledge the customer's frustration before jumping to solutions."
        - "Never make promises about timelines you can't verify."
        - "If a refund is processed, clearly state the expected timeline and confirmation number."
        - "If you can't resolve something, escalate proactively rather than making the customer ask."
      response_framing: "Every response should leave the customer feeling heard and confident their issue is being handled."

Now look at what happens with the layered pipeline. Customer says: "Hey, order #4521 never showed up and I'm really frustrated."

Routing layer (no persona): Detects order reference → calls lookup_order with identifier #4521.

Execution layer: Returns order data — shipped 8 days ago, tracking shows "in transit," estimated delivery was 3 days ago.

Synthesis layer (with persona): "Hey [Name], I completely understand how frustrating this is. Waiting past the expected delivery date is the worst. I just pulled up order #4521, and here's what I'm seeing: it shipped 8 days ago and tracking still shows 'in transit,' which means it's 3 days past the estimated delivery date. That's not okay. Here's what I can do right now: I can either initiate a refund for you, or if you'd prefer, I can escalate this to our shipping team to investigate the tracking. What sounds better to you?"

The persona layer never had to decide whether to call lookup_order. That decision was already made. It just had to be warm, clear, and action-oriented with the data it was given. This is the separation that makes everything work.
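One detail worth handling outside the model: derived numbers like "days overdue" are easy to get wrong in free text. A minimal sketch, assuming the execution layer returns ship and estimated-delivery dates, is to compute those figures in code and hand them to synthesis as facts. The `delivery_facts` helper and its field names are hypothetical, not part of OpenClaw.

```python
from datetime import date, timedelta


def delivery_facts(shipped_on: date, estimated_on: date, today: date) -> dict:
    """Derive the numbers the synthesis stage should quote, not guess."""
    return {
        "days_since_shipped": (today - shipped_on).days,
        # Clamp at zero so on-time orders never show a negative delay.
        "days_overdue": max(0, (today - estimated_on).days),
    }


today = date(2024, 6, 20)  # arbitrary example date
facts = delivery_facts(
    shipped_on=today - timedelta(days=8),
    estimated_on=today - timedelta(days=3),
    today=today,
)
print(facts)  # {'days_since_shipped': 8, 'days_overdue': 3}
```

With the order data from the example (shipped 8 days ago, estimated delivery 3 days ago), the order is 3 days overdue, and the persona layer only has to phrase that, not calculate it.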

Common Mistakes and How to Fix Them

Mistake 1: Putting skill-calling instructions in the persona. Don't write persona rules like "Always look up the order before responding." That's a routing concern. Put it in the skill's invocation_hint instead.

Mistake 2: Making personas too long. If your persona definition is 800 tokens, you're writing a novel, not a behavioral config. Keep it to identity, style, and the 3-5 most critical behavioral rules. Everything else is noise that dilutes the important parts.

Mistake 3: Not using invocation hints. This is the single most impactful field in skill configuration and most people leave it blank or write something generic like "Use when needed." Be specific. "Use when the user mentions a date range and needs financial data" gives the router a concrete trigger to match; "Use for financial queries" does not.

Mistake 4: Using the same model for routing and synthesis. If OpenClaw gives you the option to use a faster, smaller model for routing (and it does), use it. Routing doesn't need creativity or nuance. It needs speed and reliable structured output. Save your heavier model for synthesis, where the persona actually matters.

Mistake 5: Skipping the error persona. What happens when a skill fails? If you haven't defined how your persona handles errors, you'll get a generic "I encountered an error" message that breaks character completely. Add error handling to your persona:

    persona:
      error_behavior:
        skill_failure: "Acknowledge the issue honestly, apologize if appropriate, and offer an alternative path. Never expose raw error messages."
        no_results: "Be transparent that no results were found. Suggest alternative approaches or ask clarifying questions."
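
The "never expose raw error messages" rule is easier to keep if the raw message never reaches the synthesis stage in the first place. Here is a rough sketch of that idea in plain Python. It assumes skill outputs are dicts that carry either an `error` key or a `results` list; that shape, and the `error_context` helper itself, are my own assumptions rather than anything OpenClaw defines.

```python
def error_context(skill_outputs: dict[str, dict], error_behavior: dict[str, str]) -> dict:
    """Replace raw failures with persona guidance before synthesis."""
    safe_outputs, guidance = {}, []
    for name, output in skill_outputs.items():
        if "error" in output:
            # Keep the fact of failure, drop the raw message.
            safe_outputs[name] = {"status": "failed"}
            guidance.append(error_behavior["skill_failure"])
        elif not output.get("results", True):
            # An explicitly empty results list counts as "no results".
            safe_outputs[name] = {"status": "no_results"}
            guidance.append(error_behavior["no_results"])
        else:
            safe_outputs[name] = output
    # De-duplicate guidance while preserving order.
    return {"skill_outputs": safe_outputs, "guidance": list(dict.fromkeys(guidance))}
```

The synthesis stage then sees that a skill failed plus the persona's instructions for handling it, but nothing it could accidentally quote back to the user.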

Debugging the Skills-Persona Boundary

When something goes wrong (and it will), you need to know whether the failure is on the skill side or the persona side. OpenClaw's logging helps here, but only if you know what to look for.

If the agent isn't calling tools when it should: The problem is in routing. Check your skill descriptions and invocation hints. Make them more specific. Make sure the routing stage isn't seeing persona context that's making it overconfident.

If the agent calls the right tools but the response sounds wrong: The problem is in synthesis. Check your persona rules. Make sure the synthesis stage has proper access to both the persona config and the full skill output.

If the agent calls the wrong tools: Check for ambiguous skill descriptions. If web_search and knowledge_lookup have overlapping descriptions, the router has nothing to distinguish them and will often pick the wrong one. Make each skill's use case mutually exclusive where possible.

You can audit this by enabling the pipeline trace in OpenClaw:

    agent:
      debug:
        trace_pipeline: true
        log_routing_decisions: true
        log_skill_outputs: true
        log_synthesis_input: true

This gives you a full picture at each stage so you can pinpoint exactly where things went sideways.
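For the wrong-tool case in particular, you can often spot the problem before reading any traces by comparing skill descriptions against each other. The sketch below uses Jaccard similarity over word sets as a crude proxy for ambiguity. It is a standalone Python helper I'm assuming for illustration (`overlapping_skills`, the stopword list, and the 0.5 threshold are all arbitrary choices), not an OpenClaw feature.

```python
from itertools import combinations

STOPWORDS = {"a", "an", "the", "for", "of", "or", "to", "and", "in", "when", "use"}


def tokens(text: str) -> set[str]:
    """Lowercase word set with punctuation and stopwords removed."""
    words = (w.strip(".,:;\"'()") for w in text.lower().split())
    return {w for w in words if w and w not in STOPWORDS}


def overlapping_skills(descriptions: dict[str, str],
                       threshold: float = 0.5) -> list[tuple[str, str, float]]:
    """Flag skill pairs whose descriptions share too many words (Jaccard)."""
    flagged = []
    for (a, da), (b, db) in combinations(descriptions.items(), 2):
        ta, tb = tokens(da), tokens(db)
        union = ta | tb
        score = len(ta & tb) / len(union) if union else 0.0
        if score >= threshold:
            flagged.append((a, b, round(score, 2)))
    return flagged
```

Any pair this flags is worth rewriting so each description names a trigger the other one does not.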

Getting Started Without the Setup Headache

I'll be honest — configuring all of this from scratch takes time. Getting the skill descriptions right, tuning the persona rules, setting up the pipeline stages with correct context boundaries — it's a lot of iteration. I spent a solid weekend on my first agent before it worked reliably.

If you want to skip that ramp-up, Felix's OpenClaw Starter Pack on Claw Mart is genuinely worth the $29. It includes pre-configured skill templates with proper invocation hints already written, persona templates that follow the layered separation pattern I described above, and pipeline configs that wire everything together correctly. I wish it existed when I started — it would have saved me from basically every mistake in the previous section. You can always customize everything after the fact, but starting from a working foundation instead of a blank file makes a massive difference.

The Bottom Line

Skills and personas in OpenClaw solve different problems and belong in different layers of your agent's architecture. Skills answer "what can this agent do?" Personas answer "how does this agent behave?" When you separate them cleanly — routing decisions free from persona influence, persona synthesis informed by skill outputs — you get agents that are both reliable and characterful.

Stop trying to fix tool-calling problems with better persona prompts. Stop trying to fix personality problems with more tool descriptions. Separate the concerns, use the pipeline stages OpenClaw gives you, and build each layer to do one thing well.

That's it. Go build something.