OpenClaw Gateway Crashes: Root Causes and Fixes

If you've been building agents on OpenClaw for any length of time, you've hit it. That moment where everything is humming along — your agents are making tool calls, reasoning is clean, the whole pipeline feels like magic — and then the gateway just... dies. A 502. A timeout. A cryptic connection reset. Your agent's state evaporates. Hours of work, gone.

I've been there more times than I'd like to admit, and I've spent an embarrassing number of late nights staring at logs trying to figure out what the hell happened. So let's cut through the noise. This post is everything I've learned about why OpenClaw gateway crashes happen and, more importantly, how to make them stop.

No hand-waving. No "it depends." Just the actual fixes.

The Crash Patterns You're Probably Seeing

Before we get into root causes, let's establish a shared vocabulary. When people say "my OpenClaw gateway crashed," they usually mean one of these five things:

Pattern 1: The Slow Bleed. Everything works for the first 5-10 agent steps, then responses start getting slower, and eventually you get a timeout or 502. This is almost always a memory or connection leak.

Pattern 2: The Sudden Death. Agent is mid-execution, no warning signs, and the gateway process just dies. Restart it, run the same workflow, it dies again at a completely different point. This is usually an OOM kill at the infrastructure level.

Pattern 3: The Rate Limit Cascade. You get a 429, your agent retries immediately, gets another 429, retries again, and within seconds your entire multi-agent system is in a death spiral of retries that makes the problem exponentially worse.

Pattern 4: The Long-Running Timeout. Your agent is doing something legitimately complex — maybe a 15-step ReAct loop with multiple tool calls — and the gateway connection just drops after a certain duration. The agent framework treats this as a fatal error and the whole thing collapses.

Pattern 5: The Concurrency Bomb. Everything works perfectly with one agent. You scale to 5 or 10 running in parallel, and the gateway starts returning errors, dropping connections, or becomes completely unresponsive.

Sound familiar? Good. Let's fix each one.

Root Cause #1: No Retry Logic (Or Bad Retry Logic)

This is the single most common reason OpenClaw gateway issues turn into full-blown crashes. The underlying issue might be a momentary network blip — something that would resolve itself in 500 milliseconds — but because there's no retry logic, your agent framework treats it as a fatal error and kills the entire execution.

Here's what most people's code looks like when they start:

```python
# The "please crash" pattern
def call_openclaw(prompt):
    response = openclaw_client.chat.completions.create(
        model="your-model",
        messages=[{"role": "user", "content": prompt}]
    )
    return response.choices[0].message.content
```

Zero error handling. One transient 502 and the whole thing is dead.

Here's what it should look like:

```python
import random
import time

def call_openclaw_with_retry(prompt, max_retries=5, base_delay=1.0):
    """
    Retry with exponential backoff + jitter.
    This alone will fix ~60% of gateway crash issues.
    """
    # Assumes your client exposes OpenAI-style exception classes;
    # adjust the names below to whatever your client library actually raises.
    last_error = None
    for attempt in range(max_retries):
        try:
            response = openclaw_client.chat.completions.create(
                model="your-model",
                messages=[{"role": "user", "content": prompt}],
                timeout=30,  # Don't let it hang forever
            )
            return response.choices[0].message.content
        except openclaw_client.RateLimitError as e:
            # 429: back off aggressively
            last_error, reason = e, "Rate limited"
        except openclaw_client.APIStatusError as e:
            if e.status_code not in (502, 503, 504):
                # 400, 401, 403, etc.: these won't fix themselves
                raise
            # Gateway errors: retry with backoff
            last_error, reason = e, f"Gateway error {e.status_code}"
        except (ConnectionError, TimeoutError) as e:
            # Builtin network errors; your client may wrap these in its own types
            last_error, reason = e, f"Connection error: {e}"

        if attempt == max_retries - 1:
            break  # No point sleeping after the final attempt
        delay = base_delay * (2 ** attempt) + random.uniform(0, 1)
        print(f"{reason}. Retry {attempt + 1}/{max_retries} in {delay:.1f}s")
        time.sleep(delay)

    raise Exception(f"Failed after {max_retries} retries") from last_error
```

The key details that matter:

- Exponential backoff: Each retry waits roughly twice as long as the last (1s, 2s, 4s, 8s, ...). This gives the gateway time to recover.

- Jitter: The `random.uniform(0, 1)` term prevents the thundering herd problem, where all your agents retry at the exact same moment and overwhelm the gateway again.

- Explicit timeout: Don't let a request hang forever. Set a reasonable timeout so a stalled connection doesn't block your entire agent loop.

- Differentiate error types: A 429 is not the same as a 401. Retrying an authentication error is pointless.

This single change will eliminate the majority of gateway-related crashes. I'm not exaggerating.
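The backoff schedule is easy to factor out and test on its own. Below is a minimal sketch of capped exponential backoff with jitter. The `max_delay` cap and the preference for a server-supplied `Retry-After` value are my additions; the retry wrapper above does neither. Whether your OpenClaw gateway actually sends `Retry-After` on a 429 is an assumption worth checking.

```python
import random
from typing import Optional

def backoff_delay(
    attempt: int,
    base_delay: float = 1.0,
    max_delay: float = 30.0,
    jitter: float = 1.0,
    retry_after: Optional[float] = None,
) -> float:
    """Seconds to wait before retry number `attempt` (0-based).

    - If the server supplied a Retry-After value (assumed already parsed
      into seconds), honor it instead of guessing.
    - Otherwise use exponential backoff capped at `max_delay`, plus up to
      `jitter` seconds of random noise so agents don't retry in lockstep.
    """
    if retry_after is not None:
        return max(0.0, retry_after)
    exponential = min(max_delay, base_delay * (2 ** attempt))
    return exponential + random.uniform(0, jitter)


# Usage: print the schedule a single agent would follow
for attempt in range(6):
    print(f"attempt {attempt}: wait {backoff_delay(attempt):.2f}s")
```

The cap matters once you raise `max_retries`. Without it, attempt 10 would wait over 17 minutes.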

Root Cause #2: Connection and Memory Leaks

If you're seeing Pattern 1 (the slow bleed) or Pattern 2 (sudden death / OOM), you probably have a connection or memory leak. The classic case is agent code that opens HTTP connections to the OpenClaw gateway but never closes them. Over the course of a long-running agent workflow, you accumulate hundreds of open connections. Eventually you hit a limit (open file descriptors, the gateway's connection cap, or available memory) and something breaks.

The fix:

```python
import requests
from requests.adapters import HTTPAdapter
from urllib3.util.retry import Retry

# Use a session with connection pooling
def create_robust_session():
    """
    Connection pooling + automatic retries at the HTTP level.
    This sits underneath your OpenClaw client.
    """
    session = requests.Session()
    retry_strategy = Retry(
        total=3,
        backoff_factor=1,
        status_forcelist=[429, 500, 502, 503, 504],
        # POST isn't idempotent, so urllib3 won't retry it unless you opt in.
        # Note: these retries stack with any application-level retries.
        allowed_methods=["POST", "GET"],
    )
    adapter = HTTPAdapter(
        max_retries=retry_strategy,
        pool_connections=10,  # Number of per-host connection pools to cache
        pool_maxsize=20,      # Max connections kept alive per host pool
        pool_block=True,      # Wait for a free connection instead of opening extra ones
    )
    session.mount("http://", adapter)
    session.mount("https://", adapter)
    return session
```

Also important: always use context managers or explicitly close your OpenClaw client when you're done with it. Don't let connections dangle.

```python
# Good: explicit lifecycle management
client = OpenClawClient()
try:
    result = run_my_agent_workflow(client)
finally:
    client.close()

# Better: context manager if supported
with OpenClawClient() as client:
    result = run_my_agent_workflow(client)
```
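Your client might have a `close()` method without supporting the context-manager protocol. In that case, the standard library's `contextlib.closing` still gives you the `with` form. Here is a minimal sketch; `FakeClient` and this `run_my_agent_workflow` are hypothetical stand-ins for your real client and workflow.

```python
from contextlib import closing

class FakeClient:
    """Hypothetical stand-in for a client that only offers close()."""

    def __init__(self):
        self.closed = False

    def close(self):
        self.closed = True

def run_my_agent_workflow(client):
    # Hypothetical workflow that fails midway
    raise RuntimeError("gateway dropped the connection")

client = FakeClient()
try:
    # closing() calls client.close() on exit, even when the body raises
    with closing(client) as c:
        run_my_agent_workflow(c)
except RuntimeError as e:
    print(f"workflow failed: {e}; client closed: {client.closed}")
```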

If you're running in a container (Docker, Kubernetes), also make sure your memory limits are set appropriately. An agent running a 20-step tool-calling loop with large context windows can easily eat 2-4GB of RAM. If your container limit is 512MB, the kernel's OOM killer will nuke your process without any useful error message from your code. Usually the only trace is exit code 137 (the process was killed with SIGKILL) or an OOMKilled status reported by Docker or Kubernetes.
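To catch a slow memory bleed before the OOM killer does, log the process's peak resident memory alongside each agent step. Below is a minimal sketch using the standard library's `resource` module, which is Unix-only. Note that `ru_maxrss` is reported in kilobytes on Linux but in bytes on macOS. The 80% warning threshold and the 512MB limit in the usage line are illustrative assumptions.

```python
import resource
import sys

def peak_rss_mb() -> float:
    """Peak resident set size of this process so far, in MB (Unix only)."""
    peak = resource.getrusage(resource.RUSAGE_SELF).ru_maxrss
    # Linux reports kilobytes; macOS reports bytes
    if sys.platform == "darwin":
        return peak / (1024 * 1024)
    return peak / 1024

def check_memory(limit_mb: float, warn_fraction: float = 0.8) -> bool:
    """Return True (and print a warning) if peak memory is near the limit."""
    peak = peak_rss_mb()
    if peak >= limit_mb * warn_fraction:
        print(f"WARNING: peak RSS {peak:.0f}MB is {peak / limit_mb:.0%} of the {limit_mb:.0f}MB limit")
        return True
    return False

# Usage: call after each agent step, passing your container's memory limit
print(f"peak RSS so far: {peak_rss_mb():.1f}MB")
check_memory(limit_mb=512)
```

Peak RSS is a high-water mark that never decreases. That makes it a good signal for "are we approaching the limit?", but not for "did memory drop after this step?".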

Root Cause #3: No Circuit Breaker

Retry logic handles transient errors. But what about when the gateway is genuinely down for an extended period? Without a circuit breaker, your agents will keep retrying, consuming resources, potentially racking up costs, and definitely not making any progress.

A circuit breaker pattern says: "If I've seen X failures in the last Y seconds, stop trying and fail fast."

```python
import time
from collections import deque

class CircuitBreaker:
    """
    Simple circuit breaker for OpenClaw gateway calls.
    States:
    - CLOSED: Normal operation, requests go through
    - OPEN: Too many failures, reject requests immediately
    - HALF_OPEN: Testing if the service has recovered
    Not thread-safe; guard it with a lock if threads share it.
    """

    def __init__(self, failure_threshold=5, recovery_timeout=60, window=120):
        self.failure_threshold = failure_threshold
        self.recovery_timeout = recovery_timeout
        self.window = window
        self.failures = deque()
        self.state = "CLOSED"
        self.last_failure_time = None
        self.trial_in_flight = False  # True while the HALF_OPEN test request runs

    def record_failure(self):
        now = time.time()
        self.failures.append(now)
        # Remove failures outside the window
        while self.failures and self.failures[0] < now - self.window:
            self.failures.popleft()
        # A failed trial request in HALF_OPEN reopens the circuit immediately
        if self.state == "HALF_OPEN" or len(self.failures) >= self.failure_threshold:
            self.state = "OPEN"
            self.last_failure_time = now
            self.trial_in_flight = False
            print(f"Circuit OPEN: {len(self.failures)} failures in {self.window}s window")

    def record_success(self):
        self.failures.clear()
        self.state = "CLOSED"
        self.trial_in_flight = False

    def can_proceed(self):
        if self.state == "CLOSED":
            return True
        if self.state == "OPEN":
            # Check if enough time has passed to try again
            if time.time() - self.last_failure_time < self.recovery_timeout:
                return False
            self.state = "HALF_OPEN"
            print("Circuit HALF_OPEN: testing recovery")
        # HALF_OPEN: allow exactly one trial request through
        if self.trial_in_flight:
            return False
        self.trial_in_flight = True
        return True


# Usage
breaker = CircuitBreaker(failure_threshold=5, recovery_timeout=60)

def call_openclaw_safe(prompt):
    if not breaker.can_proceed():
        raise RuntimeError(
            "Circuit open: OpenClaw gateway is currently unavailable. "
            f"Will retry in up to {breaker.recovery_timeout}s."
        )
    try:
        result = call_openclaw_with_retry(prompt)  # Our retry function from earlier
    except Exception:
        breaker.record_failure()
        raise
    breaker.record_success()
    return result
```

This prevents the cascade pattern where one agent's retries make the gateway problems worse for every other agent.
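One refinement worth considering: as written, `call_openclaw_safe` counts every exception as a breaker failure. That includes a 400 or 401, which says nothing about gateway health. Below is a minimal sketch of a classifier you could consult before calling `record_failure()`. The category names and the mapping are my assumptions, based on the status codes discussed in this post.

```python
from typing import Optional

def classify_failure(status_code: Optional[int]) -> str:
    """Map an HTTP status (None = no response at all) to a failure category."""
    if status_code is None:
        return "connection"   # Timeout or reset: the gateway may be unhealthy
    if status_code == 429:
        return "rate_limit"   # Gateway is alive but pushing back
    if status_code in (502, 503, 504):
        return "gateway"      # Gateway infrastructure trouble
    if 400 <= status_code < 500:
        return "client"       # Our request is wrong; retrying won't help
    if status_code >= 500:
        return "server"       # Other upstream errors
    return "unknown"

def should_trip_breaker(status_code: Optional[int]) -> bool:
    """Only failures that say something about gateway health count."""
    return classify_failure(status_code) in ("connection", "gateway", "server")

# Usage inside an except block (status_code attribute is an assumption):
#     if should_trip_breaker(getattr(e, "status_code", None)):
#         breaker.record_failure()
```

Leaving 429 out of the breaker is a judgment call. A rate limit means the gateway is up, so the retry layer's backoff is usually the right response, not a full circuit trip.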

Root Cause #4: No State Persistence / Checkpointing

This is the one that really hurts. The gateway crashes, and you lose everything your agent has done so far. You're back to square one.

The fix is checkpointing your agent's state at every meaningful step:

```python
import json
import os
import tempfile

class CheckpointedAgent:
    """
    Agent wrapper that saves state after every step.
    If the gateway crashes, resume from last checkpoint.
    """

    def __init__(self, agent_id, checkpoint_dir="./checkpoints"):
        self.agent_id = agent_id
        self.checkpoint_dir = checkpoint_dir
        os.makedirs(checkpoint_dir, exist_ok=True)
        self.state = self._load_checkpoint() or {
            "step": 0,
            "messages": [],
            "tool_results": [],
            "status": "running",
        }

    def _checkpoint_path(self):
        return os.path.join(self.checkpoint_dir, f"{self.agent_id}.json")

    def _save_checkpoint(self):
        # Write to a temp file, then atomically swap it in, so a crash
        # mid-write can't leave a half-written (corrupt) checkpoint behind.
        fd, tmp_path = tempfile.mkstemp(dir=self.checkpoint_dir, suffix=".tmp")
        with os.fdopen(fd, "w") as f:
            json.dump(self.state, f, indent=2)
        os.replace(tmp_path, self._checkpoint_path())

    def _load_checkpoint(self):
        path = self._checkpoint_path()
        if os.path.exists(path):
            with open(path, "r") as f:
                state = json.load(f)
            print(f"Resumed from checkpoint at step {state['step']}")
            return state
        return None

    def run_step(self, step_fn):
        """Execute a step with automatic checkpointing.
        step_fn's result must be JSON-serializable."""
        try:
            result = step_fn(self.state)
        except Exception as e:
            self._save_checkpoint()  # Save what we have so far
            print(f"Step failed at step {self.state['step']}: {e}")
            raise
        self.state["step"] += 1
        self.state["tool_results"].append(result)
        self._save_checkpoint()  # Persist after every successful step
        return result
```

Now when the gateway crashes at step 14 of a 20-step workflow, you don't restart from step 1. You restart from step 14. This alone has saved me more time and money than any other single pattern.
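Saving state is half the story. The caller also has to skip steps that already completed. Below is a minimal sketch of that resume loop, using the same atomic-write idea. The file layout, `run_workflow`, and the step functions are illustrative assumptions, not part of any OpenClaw API.

```python
import json
import os
import tempfile

def load_state(path):
    if os.path.exists(path):
        with open(path) as f:
            return json.load(f)
    return {"step": 0, "tool_results": []}

def save_state(path, state):
    # Atomic write: a crash mid-save never leaves a corrupt checkpoint
    fd, tmp = tempfile.mkstemp(dir=os.path.dirname(path) or ".", suffix=".tmp")
    with os.fdopen(fd, "w") as f:
        json.dump(state, f)
    os.replace(tmp, path)

def run_workflow(path, steps):
    """Run `steps` in order, skipping any that a previous run already finished."""
    state = load_state(path)
    for i in range(state["step"], len(steps)):
        result = steps[i](state)        # May raise if the gateway drops
        state["tool_results"].append(result)
        state["step"] = i + 1
        save_state(path, state)         # Checkpoint after each success
    return state

# Usage: step 2 fails once (a simulated gateway crash), then succeeds
calls = []
crash_once = {"armed": True}

def make_step(n):
    def step(state):
        calls.append(n)
        if n == 2 and crash_once["armed"]:
            crash_once["armed"] = False
            raise ConnectionError("simulated gateway crash")
        return f"result-{n}"
    return step

steps = [make_step(n) for n in range(4)]
path = os.path.join(tempfile.mkdtemp(), "agent-1.json")
try:
    run_workflow(path, steps)
except ConnectionError:
    pass                                # First run dies at step 2
final = run_workflow(path, steps)       # Second run resumes at step 2
print(calls)                            # [0, 1, 2, 2, 3]
```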

Root Cause #5: Concurrency Misconfiguration

If you're running multiple agents in parallel and seeing crashes, the issue is almost certainly that you're overwhelming the gateway with concurrent requests. Most gateway configurations have a maximum number of concurrent connections, and if you exceed it, you get dropped connections or 503s.

```python
import asyncio

# Control concurrency with a semaphore
GATEWAY_CONCURRENCY_LIMIT = 5  # Tune this based on your gateway's capacity
# On Python < 3.10, create the semaphore inside the running event loop instead
semaphore = asyncio.Semaphore(GATEWAY_CONCURRENCY_LIMIT)

async def call_openclaw_concurrent(prompt):
    """
    Ensures no more than GATEWAY_CONCURRENCY_LIMIT
    simultaneous requests hit the gateway.
    """
    async with semaphore:
        # async_call_openclaw_with_retry: an async version of the retry function
        # above (not shown). It must use `await asyncio.sleep(...)`, because
        # time.sleep() would block the whole event loop.
        return await async_call_openclaw_with_retry(prompt)

# Run multiple agents without overwhelming the gateway
async def run_agents(prompts):
    tasks = [call_openclaw_concurrent(p) for p in prompts]
    return await asyncio.gather(*tasks, return_exceptions=True)
```

Start with a concurrency limit of 5, monitor your gateway's response times and error rates, and adjust from there. I've found that most setups start struggling around 10-15 concurrent agent requests, but it depends entirely on your specific configuration and the complexity of the calls.
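The concurrency example calls `async_call_openclaw_with_retry` without defining it. Below is a minimal, self-contained sketch of what such a helper could look like. It is a generic async retry with backoff that sleeps with `await asyncio.sleep()`, so other agents keep running during the wait. A small harness records peak concurrency. `async_retry` and `flaky_gateway_call` are hypothetical names, and treating `ConnectionError` as the only retryable error is a simplifying assumption.

```python
import asyncio
import random

async def async_retry(call, *args, max_retries=5, base_delay=1.0):
    """Await call(*args), retrying ConnectionError with backoff + jitter."""
    for attempt in range(max_retries):
        try:
            return await call(*args)
        except ConnectionError:
            if attempt == max_retries - 1:
                raise
            delay = base_delay * (2 ** attempt) + random.uniform(0, base_delay)
            await asyncio.sleep(delay)  # Yields to other agents; time.sleep would block them

# --- Hypothetical stand-in for a gateway call, instrumented for the demo ---
in_flight = 0
peak_in_flight = 0
failures_left = {"count": 3}

async def flaky_gateway_call(prompt):
    global in_flight, peak_in_flight
    in_flight += 1
    peak_in_flight = max(peak_in_flight, in_flight)
    try:
        await asyncio.sleep(0.01)  # Simulated network latency
        if failures_left["count"] > 0:
            failures_left["count"] -= 1
            raise ConnectionError("simulated reset")
        return f"answer to {prompt}"
    finally:
        in_flight -= 1

async def main(prompts, limit=5):
    semaphore = asyncio.Semaphore(limit)  # Created inside the running loop

    async def limited(prompt):
        async with semaphore:
            return await async_retry(flaky_gateway_call, prompt, base_delay=0.01)

    return await asyncio.gather(*(limited(p) for p in prompts), return_exceptions=True)

results = asyncio.run(main([f"task-{i}" for i in range(20)]))
print(sum(isinstance(r, str) for r in results), "succeeded; peak concurrency:", peak_in_flight)
```

Here the retry runs inside the semaphore, so a task sleeping through its backoff still holds its slot. That keeps the total load on the gateway bounded, at the cost of some idle capacity during backoff.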

The Monitoring You Actually Need

You can't fix what you can't see. At minimum, log these four things for every OpenClaw gateway call:

```python
import logging
import time

logger = logging.getLogger("openclaw_gateway")

def call_openclaw_observed(prompt):
    start = time.monotonic()  # Monotonic clock: unaffected by system clock changes
    try:
        result = call_openclaw_safe(prompt)  # Our circuit-breaker-wrapped function
    except Exception as e:
        duration = time.monotonic() - start
        logger.error(f"FAILURE | {duration:.2f}s | error={type(e).__name__}: {e}")
        raise
    duration = time.monotonic() - start
    # Rough token estimate: ~1.3 tokens per whitespace-separated word
    logger.info(f"SUCCESS | {duration:.2f}s | tokens_est={len(prompt.split()) * 1.3:.0f}")
    return result
```

Track success rate, latency (p50, p95, p99), error types, and error rate over time. When you see latency creeping up, that's your early warning that a crash is coming. Don't wait for the 502 — start investigating when p95 latency doubles.
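Turning those log lines into p50/p95/p99 numbers and a "p95 doubled" early warning takes a rolling window of recent durations. Below is a minimal sketch using the standard library's `statistics.quantiles`. The window size of 500 calls and the fixed healthy baseline you compare against are assumptions you'd tune for your own traffic.

```python
import statistics
from collections import deque

class LatencyTracker:
    """Rolling window of call durations with percentile summaries."""

    def __init__(self, window=500):
        self.durations = deque(maxlen=window)  # Oldest samples drop off automatically

    def record(self, seconds):
        self.durations.append(seconds)

    def percentiles(self):
        if len(self.durations) < 2:
            return None  # Too few samples for meaningful percentiles
        cuts = statistics.quantiles(self.durations, n=100)  # 99 cut points
        return {"p50": cuts[49], "p95": cuts[94], "p99": cuts[98]}

    def p95_doubled(self, baseline_p95):
        """Early warning: current p95 is at least twice the healthy baseline."""
        stats = self.percentiles()
        return stats is not None and stats["p95"] >= 2 * baseline_p95

# Usage: feed it each call's duration, e.g. from call_openclaw_observed
tracker = LatencyTracker()
for i in range(1, 101):
    tracker.record(i / 100)  # Simulated durations: 0.01s .. 1.00s
print(tracker.percentiles())
print(tracker.p95_doubled(baseline_p95=0.40))
```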

Getting Started Without the Pain

If you're reading this and thinking "this is a lot of infrastructure to get right before I even start building useful agents," you're not wrong. This is exactly why I recommend starting with Felix's OpenClaw Starter Pack.

It bundles the essential configuration and patterns you need for a stable OpenClaw setup, so you're not spending your first two weeks fighting gateway crashes instead of actually building agents. It covers the baseline configuration that makes OpenClaw production-ready — the kind of stuff I've outlined in this post, but packaged so you can hit the ground running rather than debugging connection resets at 2 AM. Think of it as skipping the pain I had to learn through trial and error. You get the battle-tested defaults, and then you customize from there.

The Debugging Checklist

When your gateway crashes and you don't know why, work through this in order:

- Check the HTTP status code. 429 = rate limits. 502/503/504 = gateway infrastructure. 400 = you're sending bad requests. Each requires a different fix.

- Check gateway memory and CPU. If either is maxed out, you've found your bottleneck. Scale up or reduce concurrency.

- Check connection count. If open connections keep growing over time without plateauing, you have a connection leak.

- Check request duration. If requests are taking longer and longer, the gateway is under pressure and a crash is likely coming.

- Check your retry logic. Are you retrying without backoff? You might be causing the very problem you're trying to fix.

- Check your agent's context window size. If your agent is stuffing 100K tokens into every request after 15 steps of accumulated context, the gateway has to handle massive payloads. Implement context summarization or sliding windows.
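For that last item, a sliding window can be as simple as keeping the system prompt plus the most recent messages that fit a token budget. Below is a minimal sketch. It assumes OpenAI-style `{"role", "content"}` message dicts and reuses the rough words × 1.3 token estimate from the logging example. A real tokenizer would be more accurate, and summarizing the dropped messages is left out.

```python
def estimate_tokens(text):
    # Rough heuristic: ~1.3 tokens per whitespace-separated word
    return int(len(text.split()) * 1.3) + 1

def sliding_window(messages, max_tokens):
    """Keep system messages plus the newest other messages that fit in max_tokens."""
    system = [m for m in messages if m["role"] == "system"]
    rest = [m for m in messages if m["role"] != "system"]
    budget = max_tokens - sum(estimate_tokens(m["content"]) for m in system)
    kept = []
    for message in reversed(rest):  # Walk from newest to oldest
        cost = estimate_tokens(message["content"])
        if cost > budget:
            break
        kept.append(message)
        budget -= cost
    return system + list(reversed(kept))  # Restore chronological order

# Usage: 15 steps of accumulated tool output, trimmed to a 300-token budget
history = [{"role": "system", "content": "You are a research agent."}]
for step in range(15):
    history.append({"role": "user", "content": f"tool output for step {step} " + "data " * 50})
trimmed = sliding_window(history, max_tokens=300)
print(len(history), "->", len(trimmed), "messages")
```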

Putting It All Together

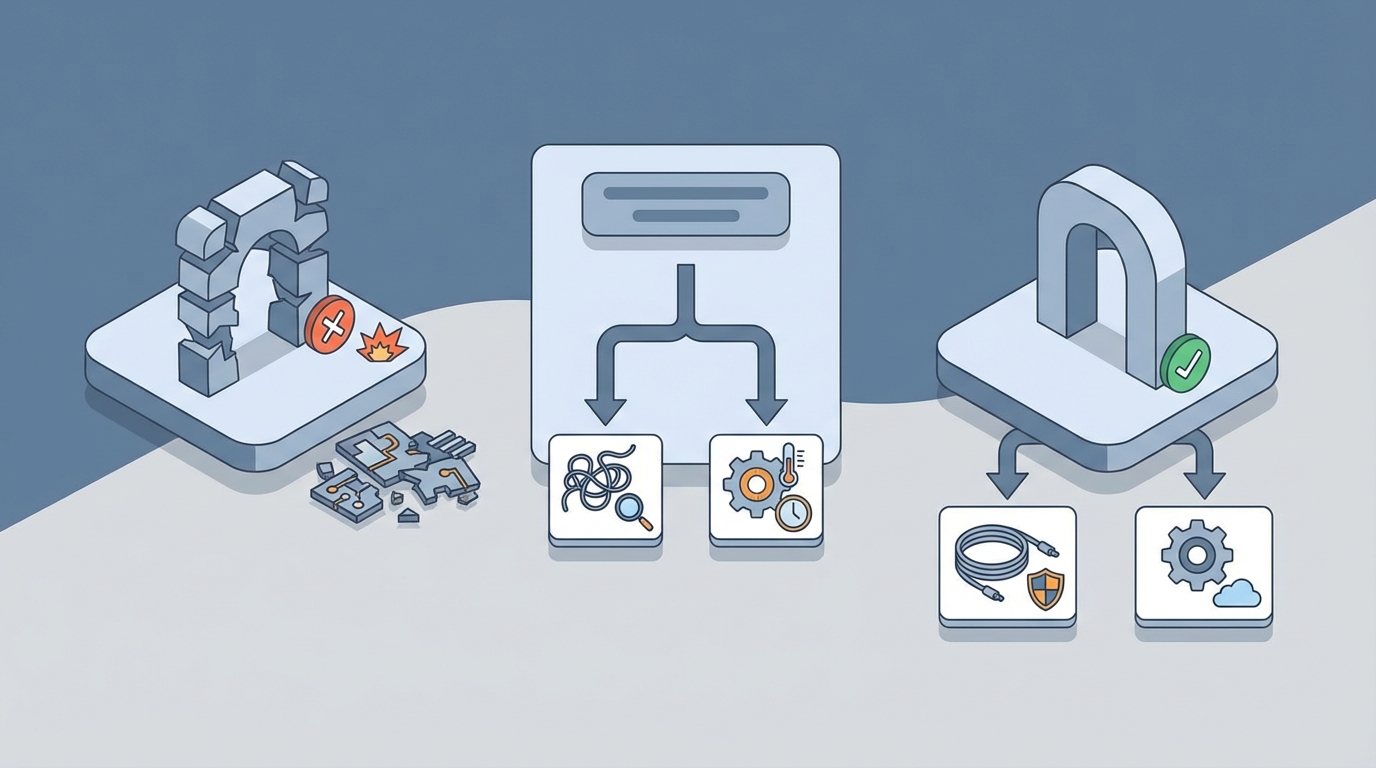

Here's the architecture that actually works in production:

```text
Your Agent Code
      ↓
Concurrency Limiter (semaphore)
      ↓
Circuit Breaker
      ↓
Retry Logic (exponential backoff + jitter)
      ↓
Connection Pool (managed session)
      ↓
OpenClaw Gateway
      ↓
Model Endpoint
```

Each layer handles a different failure mode. The concurrency limiter prevents overwhelming the gateway. The circuit breaker prevents cascading failures during extended outages. The retry logic handles transient blips. The connection pool prevents leaks. And checkpointing (running alongside all of this) ensures that when failures do happen, you don't lose work.

Next Steps

Here's what I'd do this week:

- Add the retry wrapper from this post to every OpenClaw gateway call in your codebase. This takes 15 minutes and fixes the majority of crashes.

- Add basic logging so you can actually see what's happening when things go wrong. Another 10 minutes.

- Implement checkpointing for any agent workflow that runs more than 5 steps. This is the biggest quality-of-life improvement you'll make.

- Grab Felix's OpenClaw Starter Pack if you want a proven baseline configuration instead of tuning everything from scratch.

- Add the circuit breaker and concurrency limiter once you're running multiple agents or deploying to production.

Gateway crashes are not a matter of if, they're a matter of when. The difference between a system that falls over and one that gracefully handles failures is about 200 lines of defensive code. Write those 200 lines now, and you'll thank yourself every single time they silently catch a transient error instead of blowing up your entire agent workflow.

The gateway will crash. Your agents don't have to.