How to Automate Upsell and Cross-sell Campaigns with AI

Most companies treat upsell and cross-sell campaigns like artisan bread — handcrafted, labor-intensive, and way too dependent on one person who knows the recipe. A marketer pulls data from three different tools, builds segments in a spreadsheet, writes twelve email variants, configures flows, runs A/B tests, checks results next Thursday, and repeats the whole thing when the quarter changes.

It works. Sort of. Until you realize the team is spending 30+ hours per campaign cycle doing work that a well-built AI agent could handle in minutes.

This isn't hypothetical. Companies using predictive AI for upsell targeting are seeing 3.2x higher conversion rates than those running manual processes. Personalized upsells convert 2.8x better than generic ones. And yet only 19% of companies feel they're actually achieving personalization at scale.

The gap isn't awareness. It's execution. So let's close it.

The Manual Workflow Today (And Why It's Bleeding You Dry)

Here's what a typical upsell campaign looks like at a mid-market e-commerce or SaaS company in 2026. I've mapped this against real workflows from Shopify merchants, SaaS operators, and the marketers who run them.

Step 1: Data Collection and Segmentation (6–12 hours)

Someone pulls purchase history from Shopify, browsing behavior from GA4 or Mixpanel, RFM scores from a spreadsheet someone built last year, and support ticket data from Zendesk. They manually define segments: "customers who bought Product A but not Product B," "users on the Starter plan for 90+ days," "high-intent cart abandoners from the last 14 days."

Most of this happens in spreadsheets. Data cleaning alone eats 2–4 hours.

Step 2: Offer Strategy and Product Mapping (3–6 hours)

Which products get upsold to which segments? What's the margin on each bundle? Does offering 15% off the premium plan cannibalize full-price conversions? Someone has to think through this, usually with a combination of gut instinct and a pricing spreadsheet that hasn't been updated since Q3.

Step 3: Creative and Copy Development (4–8 hours)

Emails, SMS messages, in-app prompts, checkout page copy. Each segment might need different messaging. Gymshark's "Complete the Look" flows reportedly required 12+ creative variants. Someone writes them. Someone else designs the assets. Someone reviews for brand voice and compliance.

Step 4: Campaign Build and Trigger Configuration (3–6 hours)

Set up the flows in Klaviyo, HubSpot, Intercom, or whatever your stack looks like. Configure triggers, delays, exit conditions. Build the if/then logic: "if customer spent more than $200 and purchased from Category X in the last 60 days, send Offer Z after a 3-day delay."
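Hard-coded, that example rule is only a few lines. Here's a minimal Python sketch of it. The `Customer` fields are hypothetical, and "spent more than $200" is read as lifetime spend, which your platform may define differently (per order, per 90 days, and so on).

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass
class Customer:
    # Hypothetical fields; "spent" is read here as lifetime spend in dollars.
    total_spend: float
    last_category_x_purchase: Optional[date]  # None if never bought from Category X


def schedule_offer_z(customer: Customer, today: date) -> Optional[date]:
    """Return the date Offer Z should send, or None if the rule doesn't match."""
    spent_enough = customer.total_spend > 200
    bought_x_recently = (
        customer.last_category_x_purchase is not None
        and (today - customer.last_category_x_purchase) <= timedelta(days=60)
    )
    if spent_enough and bought_x_recently:
        return today + timedelta(days=3)  # fixed 3-day delay, same for everyone
    return None


# Example: $250 lifetime spend, Category X purchase 31 days ago
print(schedule_offer_z(Customer(250.0, date(2026, 1, 1)), date(2026, 2, 1)))  # 2026-02-04
```

Every threshold in it (the $200, the 60 days, the 3-day delay) stays fixed until someone edits it by hand.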

Step 5: Testing and Approval (2–4 hours)

A/B test subject lines, offers, timing. Route through legal or compliance if you're in a regulated industry. Get sign-off from someone with authority.

Step 6: Monitoring and Iteration (4–8 hours per week, ongoing)

Pull reports. Look at conversion rates, revenue per email, unsubscribe rates. Decide what to change. Change it. Wait another week to see if it worked.

Total: 15–40 hours per campaign setup, plus 4–8 hours per week for optimization.

One mid-market SaaS company reported spending 25 hours per month managing just seven upsell sequences across Intercom and HubSpot. A DTC brand on Shopify said their post-purchase upsell configuration took nearly three weeks to get right.

This is the norm, not the exception.

What Makes This Painful (Beyond the Hours)

The time cost is obvious. The hidden costs are worse.

Poor relevance at scale. Thirty-one percent of consumers say they receive too many upsell emails. When your segmentation is manual, your segments are coarse. You're grouping thousands of people with different intent into the same bucket and hitting them with the same offer. Some will convert. Many will unsubscribe. A few will get annoyed enough to churn.

Data fragmentation. Your CRM doesn't talk to your billing system. Your product analytics don't sync cleanly with your email platform. Your support tickets live in a different universe entirely. Every campaign requires manual stitching of data that should flow automatically.

Optimization lag. Most teams look at campaign performance weekly. That means if an offer is bombing — or a segment is suddenly responsive to a different product — you don't know for days. Real-time opportunities evaporate while you wait for next Monday's dashboard review.

Creative fatigue. Your best copywriter is spending a third of their week rewriting variations of "You might also like..." for the fourteenth time this quarter. That's not a good use of a human brain.

Churn risk from bad timing. One SaaS company reported a 9% increase in churn after deploying aggressive automated upsells. The automation worked exactly as configured — it just shouldn't have been configured that way. A customer who just filed a support ticket doesn't want to hear about upgrading to the premium plan.

The fundamental problem: most upsell systems are automated but not intelligent. They execute rules. They don't reason about context.

What AI Can Handle Right Now

Let's be specific about what's realistic today with an AI agent built on OpenClaw: not science fiction, and not something promised "in a future release."

Predictive segmentation and propensity scoring. An OpenClaw agent can connect to your purchase data, product usage data, and behavioral signals, then run propensity models that score every customer on their likelihood to upgrade, buy a complementary product, or respond to a specific offer. Instead of manually building segments in a spreadsheet, you get dynamic, continuously updating segments based on actual predictive signals.
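To make "propensity model" less abstract, here's a minimal, dependency-free sketch of one common choice: logistic regression fitted with batch gradient descent, then used to rank customers. The feature columns and the six training rows are invented for illustration, and nothing here describes how OpenClaw scores customers internally; with real data you'd use a library such as scikit-learn and far more history.

```python
import math
from typing import List, Sequence


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def propensity(w: Sequence[float], x: Sequence[float]) -> float:
    """Probability the customer converts, given weights w (bias first) and features x."""
    return sigmoid(w[0] + sum(wj * xj for wj, xj in zip(w[1:], x)))


def fit_logistic(X: List[Sequence[float]], y: List[int],
                 lr: float = 0.1, epochs: int = 2000) -> List[float]:
    """Fit logistic-regression weights (bias first) with batch gradient descent."""
    w = [0.0] * (len(X[0]) + 1)
    for _ in range(epochs):
        grad = [0.0] * len(w)
        for xi, yi in zip(X, y):
            err = propensity(w, xi) - yi  # predicted probability minus actual outcome
            grad[0] += err
            for j, xj in enumerate(xi):
                grad[j + 1] += err * xj
        w = [wj - lr * g / len(X) for wj, g in zip(w, grad)]
    return w


# Hypothetical features: [premium-page views (30d), uses feature Y (0/1), open ticket (0/1)]
history_X = [[5, 1, 0], [4, 0, 0], [3, 1, 0], [0, 0, 1], [1, 0, 1], [0, 1, 1]]
history_y = [1, 1, 1, 0, 0, 0]  # 1 = upgraded after a past offer
weights = fit_logistic(history_X, history_y)

customers = {"c1": [4, 1, 0], "c2": [0, 0, 1]}
ranked = sorted(customers, key=lambda c: propensity(weights, customers[c]), reverse=True)
print(ranked)  # highest propensity first
```

The output is a probability per customer, which is what lets segments update continuously: re-score on fresh data and the ranking changes on its own.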

This is the single highest-leverage automation. The difference between "customers who bought Product A" and "customers who bought Product A, browsed Product B twice in the last week, have a high lifetime value, and haven't contacted support recently" can be the difference between a 4% and a 15% conversion rate.
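The richer segment in that comparison translates directly into a predicate. A sketch, assuming a hypothetical `Profile` record; "high lifetime value" is taken as $500 or more and "recently" as the last 30 days, both placeholders you'd tune.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional, Set


@dataclass
class Profile:
    customer_id: str
    purchased: Set[str]                   # product IDs already bought
    product_b_views: List[date]           # dates the customer viewed Product B
    lifetime_value: float                 # dollars
    last_support_contact: Optional[date]  # None if they've never contacted support


def in_segment(p: Profile, today: date,
               ltv_floor: float = 500.0, quiet_days: int = 30) -> bool:
    """Bought A, viewed B 2+ times in 7 days, high LTV, no recent support contact."""
    week_ago = today - timedelta(days=7)
    views_this_week = sum(1 for d in p.product_b_views if week_ago <= d <= today)
    no_recent_ticket = (
        p.last_support_contact is None
        or (today - p.last_support_contact).days > quiet_days
    )
    return ("A" in p.purchased and views_this_week >= 2
            and p.lifetime_value >= ltv_floor and no_recent_ticket)
```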

Dynamic offer selection. Rather than a marketer deciding which product to recommend to which segment, an OpenClaw agent can evaluate margins, purchase history, browsing patterns, and inventory levels to select the optimal offer for each individual customer. Amazon has done this for years with billions in infrastructure investment. OpenClaw lets you build a version of this that works for your catalog without a machine learning team.
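One simple way to frame "optimal offer" is expected margin: the customer's propensity to accept an offer times the margin it earns, restricted to items actually in stock. The sketch below assumes that objective and hypothetical `Offer` fields; a real setup might add relevance rules, inventory targets, or lifetime-value effects.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Offer:
    sku: str
    margin: float   # profit per unit, dollars
    in_stock: int   # units available


def select_offer(propensity: Dict[str, float], offers: List[Offer],
                 min_stock: int = 1) -> Optional[Offer]:
    """Choose the in-stock offer with the highest expected margin for one customer."""
    candidates = [o for o in offers if o.in_stock >= min_stock and o.sku in propensity]
    if not candidates:
        return None
    # Expected margin = P(customer accepts this offer) x margin earned if they do
    return max(candidates, key=lambda o: propensity[o.sku] * o.margin)


offers = [Offer("case", 40.0, 10), Offer("premium_kit", 100.0, 0), Offer("cable", 25.0, 5)]
choice = select_offer({"case": 0.10, "premium_kit": 0.30, "cable": 0.20}, offers)
print(choice.sku if choice else None)  # cable: 0.20 x 25 = 5.0 beats 0.10 x 40 = 4.0
```

Note that `premium_kit` would have won on expected margin (0.30 x 100 = 30.0) but is skipped because it's out of stock.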

Copy generation at scale. With proper brand voice training, an OpenClaw agent can generate email copy, SMS messages, and in-app prompts for each segment — or each individual customer — in seconds. Not generic "You might also like" filler, but contextually relevant messaging that references their specific purchase history and usage patterns.

Trigger optimization. When should the upsell fire? Immediately post-purchase? Three days later? After the fifth login? An OpenClaw agent can test timing variations continuously and converge on optimal send times per segment, per channel. No more guessing that "Tuesday at 10am" is the right time because someone read a blog post about email timing in 2021.
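Converging on send times can start as plain bookkeeping: for each segment and channel, compare conversion rates across the delays you've tried, and only trust a delay once it has enough sends. A minimal sketch with a hypothetical event format and a placeholder 100-send minimum. It only exploits past data, so it still needs the kind of ongoing testing described next to keep exploring.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

# One historical send: (segment, channel, delay in days after trigger, converted?)
Event = Tuple[str, str, int, bool]


def best_delays(events: Iterable[Event], min_sends: int = 100) -> Dict[Tuple[str, str], int]:
    """Best observed delay per (segment, channel), ignoring delays with too few sends."""
    sends: Dict[Tuple[str, str, int], int] = defaultdict(int)
    wins: Dict[Tuple[str, str, int], int] = defaultdict(int)
    for segment, channel, delay, converted in events:
        sends[(segment, channel, delay)] += 1
        wins[(segment, channel, delay)] += int(converted)

    best: Dict[Tuple[str, str], Tuple[float, int]] = {}
    for (segment, channel, delay), n in sends.items():
        if n < min_sends:
            continue  # not enough evidence to trust this delay yet
        rate = wins[(segment, channel, delay)] / n
        key = (segment, channel)
        if key not in best or rate > best[key][0]:
            best[key] = (rate, delay)
    return {key: delay for key, (_, delay) in best.items()}


events = (
    [("starter", "email", 0, i < 5) for i in range(100)]     # 5% conversion
    + [("starter", "email", 3, i < 12) for i in range(100)]  # 12% conversion
    + [("starter", "email", 7, i < 20) for i in range(50)]   # 40%, but only 50 sends
)
print(best_delays(events))  # {('starter', 'email'): 3}
```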

Continuous experimentation. Instead of manually setting up A/B tests, waiting a week, and picking a winner, an OpenClaw agent can run multi-armed bandit tests that automatically shift traffic toward winning variants in real time. Faster learning, less wasted exposure to underperforming offers.
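A multi-armed bandit can be sketched in a few dozen lines. This one uses Thompson sampling with a Beta-Bernoulli model, a standard choice for yes/no outcomes like "converted." It's an illustration of the technique, not OpenClaw's implementation, and the variant names and true conversion rates in the simulation are made up.

```python
import random
from typing import Dict, List


class ThompsonBandit:
    """Beta-Bernoulli Thompson sampling over named offer or copy variants."""

    def __init__(self, variants: List[str], seed: int = 0):
        # Beta(1, 1) prior: a uniform belief about each variant's conversion rate
        self.alpha: Dict[str, float] = {v: 1.0 for v in variants}
        self.beta: Dict[str, float] = {v: 1.0 for v in variants}
        self.rng = random.Random(seed)

    def choose(self) -> str:
        # Draw a plausible conversion rate for each variant; send the best draw
        draws = {v: self.rng.betavariate(self.alpha[v], self.beta[v]) for v in self.alpha}
        return max(draws, key=draws.get)

    def update(self, variant: str, converted: bool) -> None:
        # Conversions raise alpha, non-conversions raise beta
        if converted:
            self.alpha[variant] += 1
        else:
            self.beta[variant] += 1


# Simulation with hypothetical true rates the bandit doesn't get to see
true_rates = {"A": 0.04, "B": 0.12}
bandit = ThompsonBandit(list(true_rates))
outcome_rng = random.Random(1)
picks = {v: 0 for v in true_rates}
for _ in range(5000):
    variant = bandit.choose()
    picks[variant] += 1
    bandit.update(variant, outcome_rng.random() < true_rates[variant])
print(picks)  # most sends should drift toward "B"
```

Each send goes to whichever variant wins a random draw from its posterior, so a weak variant still gets occasional traffic while it's uncertain but loses almost all of it once the evidence piles up.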

Anomaly detection. If upsell acceptance suddenly drops 40% on a specific segment, or unsubscribe rates spike on a particular flow, an OpenClaw agent can flag it immediately — not next Thursday when someone checks the dashboard.
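A basic version of that alert compares today's numbers with a trailing baseline. The sketch below assumes you already compute a daily acceptance rate and unsubscribe rate per flow; the 40% drop threshold mirrors the example above, while treating a doubling of unsubscribes as a spike is an arbitrary placeholder.

```python
from statistics import mean
from typing import List, Optional


def relative_change(history: List[float], current: float) -> Optional[float]:
    """(current - baseline) / baseline, with the mean of recent history as baseline."""
    if not history:
        return None
    baseline = mean(history)
    if baseline == 0:
        return None  # no meaningful baseline to compare against
    return (current - baseline) / baseline


def check_flow(name: str,
               acceptance_history: List[float], acceptance_today: float,
               unsub_history: List[float], unsub_today: float,
               max_drop: float = 0.40, max_spike: float = 1.0) -> List[str]:
    """Return human-readable alerts for one flow; an empty list means all clear."""
    alerts: List[str] = []
    change = relative_change(acceptance_history, acceptance_today)
    if change is not None and change <= -max_drop:
        alerts.append(f"{name}: acceptance down {-change:.0%} vs. baseline")
    change = relative_change(unsub_history, unsub_today)
    if change is not None and change >= max_spike:
        alerts.append(f"{name}: unsubscribes up {change:.0%} vs. baseline")
    return alerts


print(check_flow("post-purchase bundle", [0.10, 0.11, 0.09], 0.05, [0.002, 0.002], 0.0025))
```

On small segments daily rates are noisy, so in practice you'd want a minimum volume or a statistical test before alerting anyone.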

Step by Step: How to Build This with OpenClaw

Here's the practical implementation path. This assumes you have an existing e-commerce or SaaS business with at least a few thousand customers and some historical transaction data.

Phase 1: Connect Your Data Sources

An OpenClaw agent needs to see what your marketers currently see — just faster and more comprehensively. Connect:

- Transaction/purchase data (Shopify, Stripe, your billing system)

- Behavioral data (product usage, browsing history, feature adoption)

- Customer support data (ticket history, satisfaction scores)

- Current campaign performance (email engagement, SMS response rates)

OpenClaw handles the integration layer. You're not building ETL pipelines — you're telling the agent where to look and what matters.

Phase 2: Define Your Upsell Logic and Constraints

This is where human judgment matters most: the decisions you make upfront determine how intelligently the agent can execute.

Specify:

- Product relationships. What products naturally pair? What upgrade paths exist? What bundles make margin sense?

- Business rules. Maximum discount thresholds. Minimum days between upsell attempts. Products excluded from promotion. Customer segments that should never receive automated upsells (recent complainers, enterprise accounts with dedicated reps).

- Brand guidelines. Tone, voice, messaging principles. What you'd never say. What you always emphasize.

- Success metrics. Revenue per campaign? Conversion rate? Customer lifetime value impact? Define what "winning" looks like so the agent optimizes for the right thing.

Think of this as writing the operating manual for a very fast, very tireless junior marketer. Be specific. The more precise your constraints, the better the output.
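Writing those rules down as data, rather than leaving them in someone's head, is what makes them enforceable on every send. A minimal sketch, with every field name and default (a 30% hard discount ceiling, 14 days between attempts, the suppression tags) invented for illustration rather than taken from OpenClaw:

```python
from dataclasses import dataclass
from datetime import date
from typing import FrozenSet, List, Optional


@dataclass(frozen=True)
class BusinessRules:
    max_discount_pct: float = 30.0  # hard ceiling; nothing above this ever ships
    min_days_between_upsells: int = 14
    excluded_skus: FrozenSet[str] = frozenset()
    suppressed_tags: FrozenSet[str] = frozenset({"recent_complaint", "has_dedicated_rep"})


@dataclass
class CandidateSend:
    sku: str
    discount_pct: float
    customer_tags: FrozenSet[str]
    last_upsell_sent: Optional[date]


def rule_violations(c: CandidateSend, rules: BusinessRules, today: date) -> List[str]:
    """List every rule the send breaks; an empty list means it's allowed."""
    problems: List[str] = []
    if c.discount_pct > rules.max_discount_pct:
        problems.append("discount above maximum")
    if (c.last_upsell_sent is not None
            and (today - c.last_upsell_sent).days < rules.min_days_between_upsells):
        problems.append("too soon since last upsell")
    if c.sku in rules.excluded_skus:
        problems.append("product excluded from promotion")
    if c.customer_tags & rules.suppressed_tags:
        problems.append("customer in a never-upsell segment")
    return problems
```

Discounts between your normal limit and this hard ceiling are natural candidates for the human review gates in Phase 4.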

Phase 3: Build the Agent Workflow

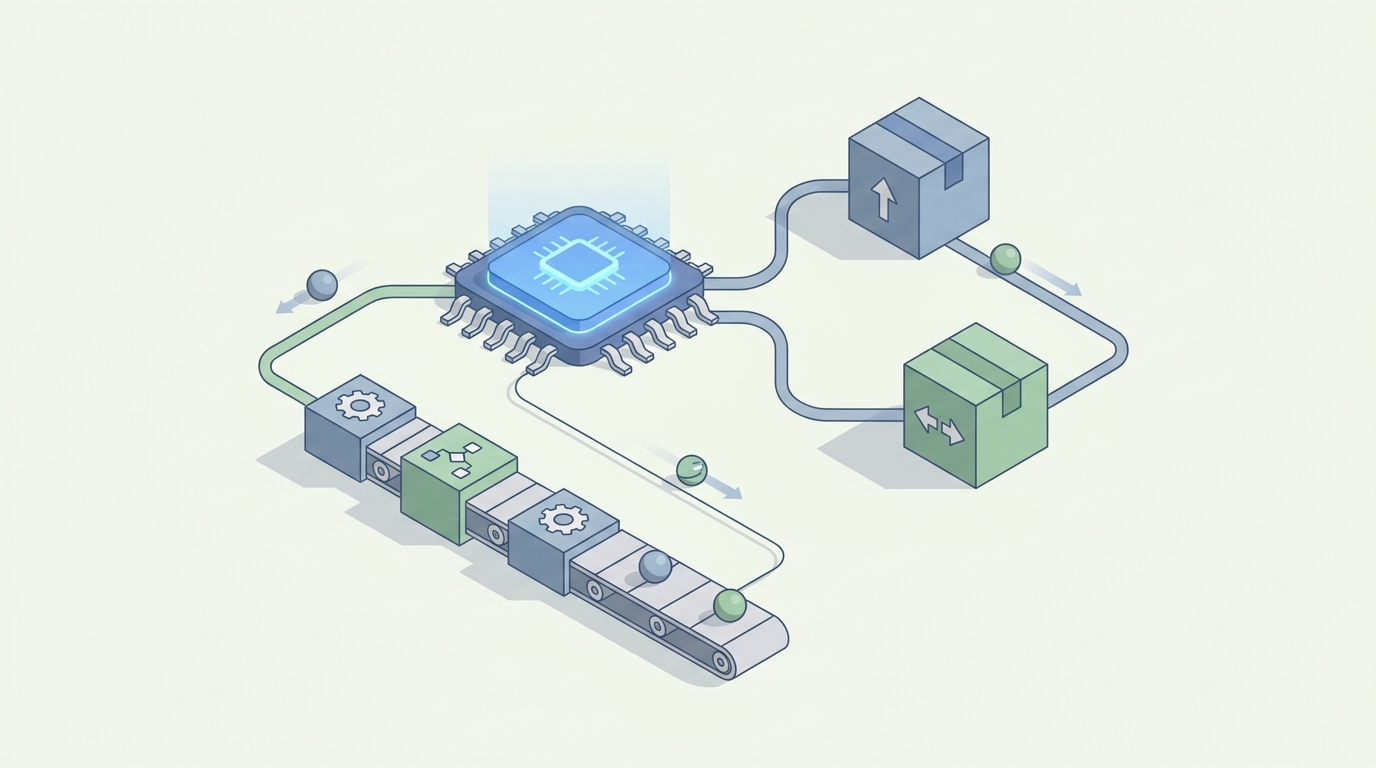

In OpenClaw, you're constructing an agent that follows this loop:

- Score and segment → Continuously evaluate every customer's propensity to convert on each available upsell offer.

- Select offer → For each high-propensity customer, choose the optimal product, bundle, or upgrade based on margin, relevance, and inventory.

- Generate creative → Produce channel-appropriate copy (email, SMS, in-app) tailored to the customer's context.

- Determine timing and channel → Pick the optimal moment and medium for delivery based on historical engagement patterns.

- Execute → Push the campaign to your delivery platform (Klaviyo, HubSpot, Intercom, Attentive, etc.).

- Monitor and adapt → Track performance in real time. Shift toward winning variants. Flag anomalies.

Each of these steps is a component of your OpenClaw agent. You configure them once, set your constraints, and let the agent run the loop continuously.
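Stripped to its skeleton, one pass through that loop looks like the sketch below. Each component is passed in as a plain function so the control flow is visible; these callables are hypothetical stand-ins, not OpenClaw's API. One small liberty: the channel is picked before the copy is written, because channel-appropriate copy needs to know whether it's an email or an SMS.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Iterable, List, Optional


@dataclass
class Send:
    customer_id: str
    offer: str
    channel: str
    message: str


def run_cycle(
    customer_ids: Iterable[str],
    score: Callable[[str], Dict[str, float]],                        # propensity per offer
    select_offer: Callable[[str, Dict[str, float]], Optional[str]],  # best offer, or None
    pick_channel: Callable[[str], str],                              # timing/channel choice
    write_copy: Callable[[str, str, str], str],                      # (customer, offer, channel)
    deliver: Callable[[Send], None],                                 # push to your send platform
    min_propensity: float = 0.5,
) -> List[Send]:
    """One pass of the loop; the returned sends feed the monitoring step."""
    sent: List[Send] = []
    for cid in customer_ids:
        scores = score(cid)
        if not scores or max(scores.values()) < min_propensity:
            continue  # no offer is likely enough to convert; skip this customer
        offer = select_offer(cid, scores)
        if offer is None:
            continue
        channel = pick_channel(cid)
        send = Send(cid, offer, channel, write_copy(cid, offer, channel))
        deliver(send)
        sent.append(send)
    return sent


outbox: List[Send] = []
run_cycle(
    ["c1", "c2"],
    score=lambda c: {"premium_plan": 0.8 if c == "c1" else 0.2},
    select_offer=lambda c, s: max(s, key=s.get),
    pick_channel=lambda c: "email",
    write_copy=lambda c, o, ch: f"[{ch}] {o} suggestion for {c}",
    deliver=outbox.append,
)
print([s.customer_id for s in outbox])  # ['c1']
```

In a running agent, the list returned by `run_cycle` is what the monitoring step would compare against actual conversions.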

Phase 4: Human Review Gates

Not everything should be fully autonomous from day one. Set up review gates for:

- High-value accounts (above a revenue threshold you define)

- New offer types the agent hasn't run before

- Aggressive discounting beyond your normal parameters

- Edge cases flagged by the agent's own confidence scoring

A practical starting point: let the agent run autonomously for your standard segments and standard offers, but require human approval for anything involving your top 5% of customers or discounts above 20%. As you build confidence in the agent's judgment, you can loosen the gates.
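Those gates amount to a checklist the agent runs before every send. A sketch using the thresholds suggested above (top 5% of customers by revenue, discounts over 20%), plus a hypothetical 0.7 cutoff on the agent's own confidence score; `ProposedSend` and its fields are illustrative names.

```python
from dataclasses import dataclass
from typing import List, Set


@dataclass
class ProposedSend:
    customer_revenue: float  # e.g. trailing 12-month revenue in dollars (hypothetical)
    discount_pct: float
    offer_type: str
    agent_confidence: float  # 0..1, the agent's own confidence score


def top_pct_cutoff(revenues: List[float], top_pct: float = 5.0) -> float:
    """Smallest revenue that still lands a customer in the top `top_pct` percent."""
    if not revenues:
        raise ValueError("need at least one customer revenue")
    ranked = sorted(revenues, reverse=True)
    k = max(1, int(len(ranked) * top_pct / 100))
    return ranked[k - 1]


def review_reasons(p: ProposedSend, revenue_cutoff: float, known_offer_types: Set[str],
                   max_auto_discount: float = 20.0, min_confidence: float = 0.7) -> List[str]:
    """Why this send needs a human; an empty list means it may go out automatically."""
    reasons: List[str] = []
    if p.customer_revenue >= revenue_cutoff:
        reasons.append("high-value account")
    if p.offer_type not in known_offer_types:
        reasons.append("new offer type")
    if p.discount_pct > max_auto_discount:
        reasons.append(f"discount above {max_auto_discount:g}%")
    if p.agent_confidence < min_confidence:
        reasons.append("low agent confidence")
    return reasons


cutoff = top_pct_cutoff([float(r) for r in range(1, 101)])  # 96.0 for revenues 1..100
print(review_reasons(ProposedSend(120.0, 25.0, "bundle", 0.9), cutoff, {"bundle"}))
# ['high-value account', 'discount above 20%']
```

Loosening the gates later is then just a matter of changing the arguments, not rewriting the agent.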

Phase 5: Iterate on Strategy, Not Execution

Once the agent is running, your marketing team's job shifts fundamentally. Instead of spending 30 hours building and managing campaigns, they spend their time on:

- Reviewing agent performance and adjusting business rules

- Developing new product strategies and bundle concepts

- Handling high-touch accounts personally

- Exploring new channels or creative approaches

The agent handles the volume. Humans handle the vision.

What Still Needs a Human

AI is good at pattern recognition, personalization at scale, and tireless execution. It's bad at:

Brand strategy and positioning. What upsells align with where your brand is heading in the next two years? That's a human decision.

Emotional intelligence in sensitive contexts. A customer whose order was delayed three times doesn't need an upsell email. An OpenClaw agent can be trained to check for these signals and hold off, but defining what constitutes a sensitive context for your specific business requires human judgment.

Creative vision. An AI can generate a thousand competent email variants. It can't conceive a genuinely original campaign concept or a brand storytelling angle that shifts perception. The creative spark is still human.

Ethics and edge cases. Should you upsell a financial product to a customer who's showing signs of financial distress? Should you push premium pricing to customers in regions with lower purchasing power? These are judgment calls that need human oversight.

Final approval on high-stakes offers. For your biggest accounts, your most aggressive promotions, your most visible campaigns — keep a human in the loop. The cost of getting it wrong is higher than the cost of spending ten minutes reviewing the agent's recommendation.

The pattern is clear: humans set the strategy, define the constraints, handle the exceptions, and make the calls that require ethical or creative judgment. The AI handles everything else — which turns out to be about 70–80% of the total work.

Expected Time and Cost Savings

Let's be concrete, because vague promises of "efficiency gains" are useless.

Campaign setup time: From 15–40 hours to 2–5 hours (mostly spent on Phase 2 strategy and constraints for new campaigns). That's a 75–85% reduction.

Ongoing optimization: From 4–8 hours per week of manual analysis and adjustment to 1–2 hours of reviewing agent recommendations and adjusting strategy. A 70–75% reduction.

Conversion rate improvement: Companies implementing predictive segmentation and dynamic offer selection typically see upsell conversion rates increase from the 4–8% range to 12–20%. The lift comes primarily from better targeting (right customer, right offer) and better timing.

Revenue impact: The general benchmark is a 10–30% lift in upsell and cross-sell revenue from moving to an AI-driven approach. Your mileage depends on your starting point — if you're already sophisticated, the lift is smaller. If you're running basic rule-based automation, expect to be on the higher end.

Creative production: From 4–8 hours per campaign to under an hour for initial generation and review. Your copywriter stops being a production resource and becomes an editor and strategist.

Speed to market: A new upsell campaign that took two to three weeks to build, test, and launch can be live in days. Seasonal adjustments that used to require manual reconfiguration happen automatically.

The mid-market SaaS company I mentioned earlier — the one spending 25 hours per month on seven upsell sequences — cut that to 6 hours with a basic predictive model. With a full OpenClaw agent handling segmentation, copy, timing, and optimization, that number drops further to 2–3 hours of strategic oversight.

The Bottom Line

Upsell and cross-sell campaigns are one of the highest-ROI activities in any business. They're also one of the most tedious to manage manually. The work is repetitive, data-intensive, and time-sensitive — exactly the profile of work that AI agents handle well.

The gap between "automated" and "intelligent" is where most companies are stuck. They have the automation tools. They don't have the intelligence layer that makes those tools actually smart.

OpenClaw is that intelligence layer. Build an agent that scores customers, selects offers, generates creative, optimizes timing, and learns continuously — while your team focuses on the strategic work that actually requires a human brain.

If you want to get started but don't want to build this from scratch, check out Claw Mart for pre-built agent templates and workflows you can customize for your specific upsell use case. Or if you've already got a working upsell agent and want to share it with the community, consider Clawsourcing — publish your agent on Claw Mart and let other businesses benefit from what you've built. The best agents come from practitioners who've solved these problems for real, not from vendors who've only theorized about them.
