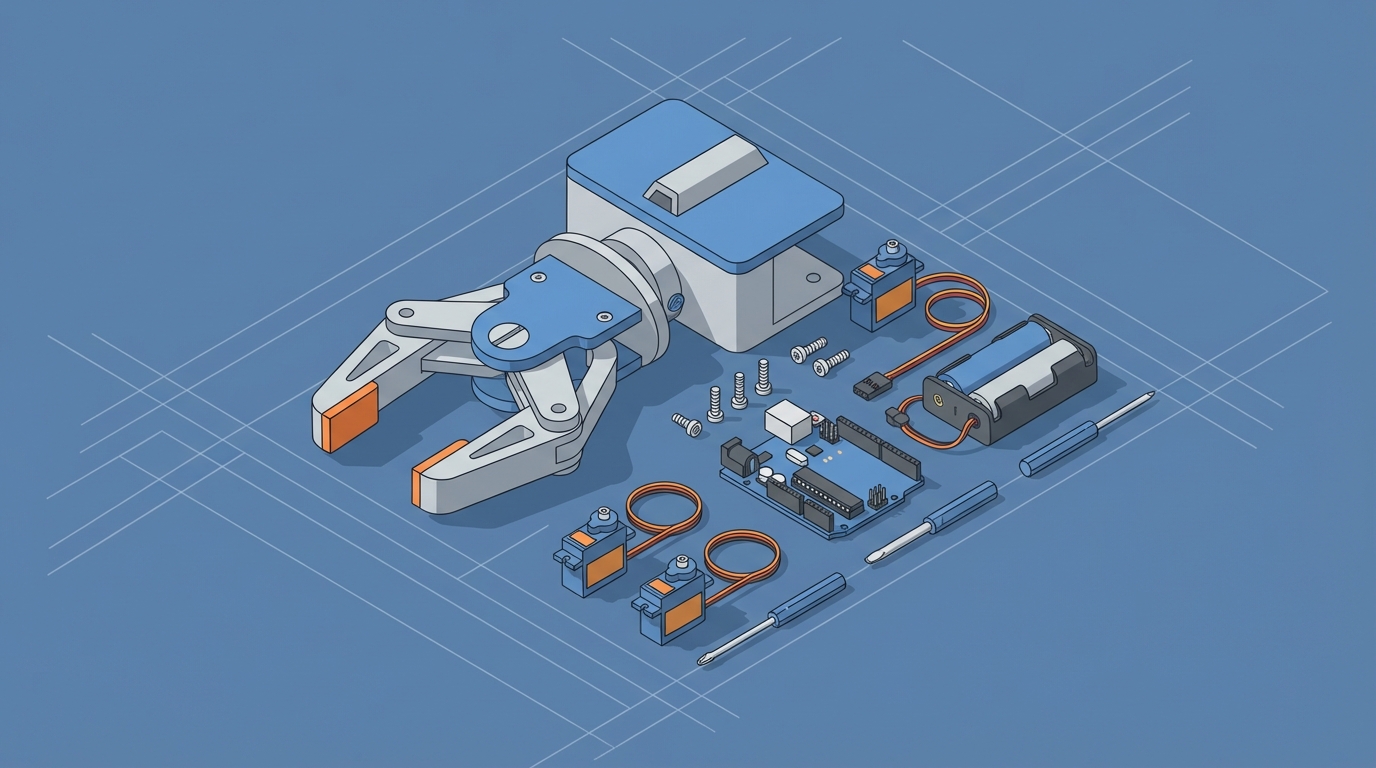

Felix's OpenClaw Starter Pack: Worth $29?

Look, I'll save you 10 minutes of scrolling through Discord threads and Reddit arguments: if you're trying to get started with OpenClaw and you don't want to spend three weekends wrestling with configuration files, Felix's OpenClaw Starter Pack is probably worth the $29. But let me explain why before you click anything, because context matters and I'm not here to just pitch you a product.

The Real Problem With Getting Started in OpenClaw

OpenClaw is powerful. That's both the appeal and the problem.

When you first open it up, you're staring at a platform that can do a lot — build AI agents, chain skills together, automate workflows, connect tools — and the natural instinct is to start from scratch. Build your own skill configurations. Wire up your own agent logic. Set your own guardrails and parameters.

And you absolutely can do that. OpenClaw gives you the flexibility. But here's what actually happens to most people who go the DIY route on day one:

Day 1: "This is amazing, I'm going to build something incredible."

Day 2: "Why does my agent keep looping on the same task? Why did it ignore my max iteration setting? Why is the output just... wrong?"

Day 3: "I've spent six hours debugging a config issue that turned out to be a single missing parameter."

Day 4–7: Radio silence. They're either still debugging or they've moved on to something else entirely.

I've seen this pattern play out dozens of times. Not because OpenClaw is broken — it's not — but because the gap between "I have access to the platform" and "I have a working agent that does something useful" is wider than most people expect. And that gap is almost entirely about configuration, not capability.

Why Configuration Is the Hard Part

Here's the thing people don't tell you about building AI agents on any platform: the actual "AI" part is maybe 20% of the work. The other 80% is:

- Skill configuration — defining what your agent can actually do, with the right parameters, in the right order

- Guardrails — making sure your agent stops when it should, doesn't burn through resources on infinite loops, and handles errors gracefully

- State management — ensuring your agent remembers what it's done, what it still needs to do, and doesn't lose context between steps

- Output structure — getting the agent to return results in a format you can actually use, not some free-form text blob that's different every time

- Tool integration — connecting your agent to external services, APIs, data sources, or whatever it needs to accomplish real tasks

OpenClaw handles a lot of this for you compared to raw framework-level tools. But you still have to set it up. You still have to make decisions about how your skills chain together, what the termination conditions are, how errors get handled, and what the output schema looks like.

And if you've never done this before, every single one of those decisions is a potential landmine.

What a Good Starter Configuration Actually Looks Like

Let me get specific. Say you want to build a basic research agent in OpenClaw — something that takes a topic, gathers information, synthesizes it, and gives you a clean summary. Simple enough, right?

Here's what you need to configure correctly:

1. Skill Definitions

Your agent needs at least three skills working together:

```yaml
skills:
  # Step 1: gather raw information on the topic
  - name: research_gather
    type: information_retrieval
    max_iterations: 5
    timeout_seconds: 30
    retry_on_failure: true
    retry_count: 2
    output_format: structured
  # Step 2: synthesize what the gather step found
  - name: research_synthesize
    type: synthesis
    input_from: research_gather
    require_minimum_sources: 2
    deduplication: true
    output_format: structured
  # Step 3: format the synthesis into a clean summary
  - name: research_format
    type: output_formatting
    input_from: research_synthesize
    template: summary_with_sources
    max_length: 1500
```
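One cheap sanity check before you run a chain like this is to confirm that every input_from points at a skill defined earlier in the list. Here's a minimal sketch in plain Python, assuming you've loaded the config into a list of dicts. The check_skill_chain helper is my own hypothetical name, not something OpenClaw provides.

```python
from typing import Any


def check_skill_chain(skills: list[dict[str, Any]]) -> list[str]:
    """Return a list of problems found in the skill chain (empty if none)."""
    problems: list[str] = []
    seen: set[str] = set()
    for skill in skills:
        name = skill.get("name")
        if not name:
            problems.append("skill without a name")
            continue
        if name in seen:
            problems.append(f"duplicate skill name: {name}")
        source = skill.get("input_from")
        # A skill may only consume output from a skill defined before it.
        if source is not None and source not in seen:
            problems.append(f"{name}: input_from '{source}' is not defined earlier")
        seen.add(name)
    return problems


# Hypothetical in-memory copy of the three skills shown above.
skills = [
    {"name": "research_gather", "type": "information_retrieval"},
    {"name": "research_synthesize", "type": "synthesis", "input_from": "research_gather"},
    {"name": "research_format", "type": "output_formatting", "input_from": "research_synthesize"},
]

print(check_skill_chain(skills))  # []
```

An empty list means the chain is wired in a consistent order. Rename research_gather without updating the next skill's input_from and the problem shows up here instead of halfway through a run.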

2. Agent-Level Guardrails

Without these, your agent will happily run forever or produce garbage:

```yaml
agent:
  name: research_assistant
  max_total_iterations: 15
  cost_ceiling: 0.50
  human_in_the_loop: false
  fallback_behavior: return_partial
  termination_conditions:
    - all_skills_complete
    - cost_ceiling_reached
    - max_iterations_reached
  error_handling:
    on_skill_failure: retry_then_skip
    on_parse_error: restructure_and_retry
    max_consecutive_errors: 3
```
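If it helps to see why these limits matter, here's a rough Python sketch of the kind of loop they imply. This is my own illustration, not how OpenClaw implements it: the Guardrails class, the run_with_guardrails function, and the "max_consecutive_errors_reached" return value are all hypothetical names.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Guardrails:
    max_total_iterations: int = 15
    cost_ceiling: float = 0.50
    max_consecutive_errors: int = 3


def run_with_guardrails(step: Callable[[int], tuple[bool, float]], limits: Guardrails) -> str:
    """Call step(i) until it reports completion or a guardrail trips.

    step returns (done, cost) and may raise on failure.
    Returns the name of the condition that ended the run.
    """
    total_cost = 0.0
    consecutive_errors = 0
    for i in range(limits.max_total_iterations):
        try:
            done, cost = step(i)
        except Exception:
            consecutive_errors += 1
            if consecutive_errors >= limits.max_consecutive_errors:
                return "max_consecutive_errors_reached"
            continue
        consecutive_errors = 0  # a successful step resets the error streak
        total_cost += cost
        if done:
            return "all_skills_complete"
        if total_cost >= limits.cost_ceiling:
            return "cost_ceiling_reached"
    return "max_iterations_reached"


def never_finishes(i: int) -> tuple[bool, float]:
    return (False, 0.01)  # never done, one cent per step


print(run_with_guardrails(never_finishes, Guardrails()))  # max_iterations_reached
```

The point is that every exit path is explicit. A step that never reports "done" still stops after 15 iterations instead of running until you notice.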

3. State Management

This is the one most people forget entirely:

```yaml
state:
  persistence: session
  memory_type: rolling_window
  window_size: 10
  store_intermediate_results: true
  clear_on_completion: false
```
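If "rolling window" is a new term for you, the idea is just a fixed-size buffer that forgets the oldest entry when a new one arrives. Here's a small Python sketch of that concept. RollingMemory is a hypothetical class for illustration, and I'm assuming window_size counts entries; check the OpenClaw docs for what it actually counts.

```python
from collections import deque


class RollingMemory:
    """Keep only the most recent `window_size` entries."""

    def __init__(self, window_size: int = 10) -> None:
        # deque with maxlen silently drops the oldest item once it is full
        self._items = deque(maxlen=window_size)

    def remember(self, entry: str) -> None:
        self._items.append(entry)

    def recall(self) -> list[str]:
        return list(self._items)


memory = RollingMemory(window_size=10)
for step in range(12):
    memory.remember(f"step {step}")

print(memory.recall()[0])    # step 2
print(len(memory.recall()))  # 10
```

After twelve entries, the first two are gone. That's the trade-off to keep in mind when picking a window size: too small and the agent forgets work it already did, too large and you carry context you don't need.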

4. Output Schema

If you don't define this, you'll get different output shapes every single time:

```yaml
output_schema:
  type: object
  properties:
    summary:
      type: string
      max_length: 1500
    key_findings:
      type: array
      items:
        type: string
      min_items: 3
      max_items: 7
    sources:
      type: array
      items:
        type: object
        properties:
          title:
            type: string
          relevance_score:
            type: number
    confidence_score:
      type: number
      minimum: 0
      maximum: 1
```
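Even with a schema in place, it's worth checking results on your side before downstream code touches them. Below is a hand-rolled Python sketch that mirrors the shape above. validate_output is a hypothetical helper, and I'm assuming that all four fields are required and that max_length counts characters, neither of which the schema itself states.

```python
from typing import Any


def validate_output(result: dict[str, Any]) -> list[str]:
    """Check a result dict against the shape described above; return any problems."""
    problems: list[str] = []

    summary = result.get("summary")
    if not isinstance(summary, str):
        problems.append("summary must be a string")
    elif len(summary) > 1500:
        problems.append("summary exceeds 1500 characters")

    findings = result.get("key_findings")
    if not isinstance(findings, list) or not all(isinstance(f, str) for f in findings):
        problems.append("key_findings must be a list of strings")
    elif not 3 <= len(findings) <= 7:
        problems.append("key_findings must have 3 to 7 items")

    sources = result.get("sources")
    if not isinstance(sources, list) or not all(isinstance(s, dict) for s in sources):
        problems.append("sources must be a list of objects")

    score = result.get("confidence_score")
    # bool is a subclass of int in Python, so exclude it explicitly.
    if isinstance(score, bool) or not isinstance(score, (int, float)):
        problems.append("confidence_score must be a number")
    elif not 0 <= score <= 1:
        problems.append("confidence_score must be between 0 and 1")

    return problems


good = {
    "summary": "Short summary.",
    "key_findings": ["a", "b", "c"],
    "sources": [{"title": "Example source", "relevance_score": 0.8}],
    "confidence_score": 0.9,
}
print(validate_output(good))  # []
```

Returning a list of problems rather than raising on the first one makes it easier to see everything that's off in a single run.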

That's a basic research agent. Not even a complex one. And every single parameter in those configs exists because someone, at some point, learned the hard way what happens when you leave it out.

Leave out max_iterations? Infinite loop. Leave out retry_on_failure? One flaky API call kills your entire workflow. Leave out the output schema? You get a different JSON shape every run and your downstream code breaks randomly. Leave out cost_ceiling? You wake up to a bill you weren't expecting.
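To make the retry point concrete, here's roughly what retry_on_failure with retry_count: 2 amounts to, written as a generic Python helper. This is a sketch of the general pattern, not OpenClaw's code; call_with_retry and delay_seconds are names I made up for the example.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def call_with_retry(fn: Callable[[], T], retry_count: int = 2, delay_seconds: float = 0.0) -> T:
    """Call fn, retrying up to retry_count extra times if it raises."""
    attempts = retry_count + 1
    for attempt in range(attempts):
        try:
            return fn()
        except Exception:
            if attempt == attempts - 1:
                raise  # out of retries: surface the last error
            time.sleep(delay_seconds)
    raise AssertionError("unreachable")


calls = {"n": 0}


def flaky() -> str:
    """Fails on the first call, succeeds on the second."""
    calls["n"] += 1
    if calls["n"] < 2:
        raise ConnectionError("temporary failure")
    return "ok"


print(call_with_retry(flaky))  # ok
```

Without the retry, that first ConnectionError would have been the end of the run.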

The Case for Starting With Pre-Configured Skills

This is where I'll be straight with you: figuring all of this out yourself is educational. You'll learn a ton about how OpenClaw works under the hood. If you're the kind of person who enjoys reading documentation for fun and has unlimited weekend hours to debug YAML files, go for it.

But if you're like most people (you want to build something that works, learn by using it, and iterate from a working foundation rather than a blank canvas), starting with pre-built configurations is usually the faster path.

It's the same logic behind using a boilerplate for a web app instead of writing your webpack config from scratch. You're not cheating. You're being practical.

Felix's OpenClaw Starter Pack: What's Actually In It

This is where Felix's OpenClaw Starter Pack comes in, and why I think it's worth the $29 for most people getting started.

The bundle includes pre-configured skills that address the exact pain points I just described. Instead of spending your first week writing YAML configs and debugging infinite loops, you get a set of skills that already have:

- Sensible guardrails baked in — max iterations, cost ceilings, timeout values, and error handling that actually work out of the box

- Proper state management — so your agents don't lose context between steps or forget what they've already done

- Structured output schemas — your agents return consistent, predictable results you can actually build on

- Skill chaining that's already tested — the skills are designed to work together, so you're not debugging integration issues on day one

Think of it like buying a recipe kit versus shopping for every ingredient individually at five different stores. The end result is the same, but one path gets you cooking in 20 minutes and the other has you driving around town for two hours first.

The key thing that makes this particular starter pack useful (versus just reading the docs) is that the skills are built by someone who's clearly run into the same problems everyone else has and solved them. The configurations aren't naive defaults — they include the retry logic, the error recovery, the termination conditions, and the output formatting that you'd only know to add after failing without them.

How to Actually Use It (Practical Steps)

Here's what I'd recommend as your workflow if you pick up the starter pack:

Step 1: Run the pre-built skills as-is. Don't change anything. Just see them work. Get a feel for what a properly configured OpenClaw agent looks like in action. Pay attention to how the skills chain together, where handoffs happen, and what the output looks like.

Step 2: Read the configs. Now that you've seen them work, open up the skill definitions and actually read through them. Every parameter is there for a reason. Try to understand why max_iterations is set to 5 instead of 10, or why retry_count is 2 instead of 5.

Step 3: Modify one thing at a time. Change the output schema to fit your use case. Adjust the cost ceiling. Add a new skill to the chain. Each time you change something, run it again and see what happens. This is how you learn OpenClaw's behavior without the frustration of starting from zero.

Step 4: Build your own skills using the starter configs as templates. Once you understand the pattern, creating new skills from scratch becomes dramatically easier because you know what parameters matter and what sane defaults look like.

Step 5: Eventually, you won't need the starter pack anymore. And that's the point. It's scaffolding, not a crutch. The best starter packs make themselves obsolete.

Common Mistakes to Avoid (Even With a Starter Pack)

A few things I see people get wrong even when they have a solid starting configuration:

Don't remove guardrails to "see what happens." I know it's tempting. You want to let the agent run free and see how far it goes. What actually happens is it runs in circles, burns through resources, and produces increasingly incoherent output. The guardrails aren't training wheels — they're structural.

Don't chain too many skills together at first. Start with chains of two or three skills. Get those working perfectly. Then expand. A 10-skill chain with one misconfigured step will produce baffling failures that are nearly impossible to debug.

Do pay attention to intermediate outputs. OpenClaw lets you inspect what's happening between skills. Use this. If your final output is wrong, the problem is almost always in an intermediate step, not the last one.
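The same habit is easy to practice outside the platform. Here's a toy Python sketch that runs a chain of steps and keeps every intermediate result, so you can see which step first went wrong. The run_chain function and the three lambda "skills" are stand-ins I invented for illustration.

```python
from typing import Any, Callable

Step = tuple[str, Callable[[Any], Any]]


def run_chain(steps: list[Step], initial: Any) -> list[tuple[str, Any]]:
    """Run steps in order, returning every intermediate (name, output) pair."""
    outputs: list[tuple[str, Any]] = []
    value = initial
    for name, fn in steps:
        value = fn(value)  # each step consumes the previous step's output
        outputs.append((name, value))
    return outputs


steps: list[Step] = [
    ("gather", lambda topic: [f"{topic} fact A", f"{topic} fact A", f"{topic} fact B"]),
    ("synthesize", lambda notes: sorted(set(notes))),  # drop duplicates
    ("format", lambda notes: "; ".join(notes)),
]

for name, output in run_chain(steps, "solar"):
    print(name, "->", output)
```

If the final string looked wrong, you'd read the printed outputs top to bottom and stop at the first one that doesn't match what you expected.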

Do set up tracing from day one. Even if it's just logging to a local file, you want to be able to see the full execution path of your agent. When something goes wrong (and it will), tracing is the difference between a 5-minute fix and a 5-hour mystery.

```yaml
tracing:
  enabled: true
  level: detailed
  output: local_file
  path: ./logs/agent_runs/
  include_intermediate_results: true
  include_skill_inputs: true
  include_timing: true
```

Add that to every agent config. Thank me later.
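If you're also calling your own Python code around the agent, you can get the same kind of trail with a few lines. This is a minimal sketch that appends one JSON line per call with the input, result, and timing. The traced function is hypothetical, and the demo writes to a temporary directory; in practice you'd point it at something like ./logs/agent_runs/.

```python
import json
import tempfile
import time
from pathlib import Path
from typing import Any, Callable


def traced(skill_name: str, fn: Callable[[Any], Any], skill_input: Any, log_path: Path) -> Any:
    """Run fn(skill_input) and append one JSON line describing the call."""
    started = time.perf_counter()
    record: dict[str, Any] = {"skill": skill_name, "input": skill_input}
    try:
        result = fn(skill_input)
        record["result"] = result
        return result
    except Exception as exc:
        record["error"] = repr(exc)
        raise
    finally:
        # Runs on both success and failure, so every call leaves a trace.
        record["seconds"] = round(time.perf_counter() - started, 6)
        log_path.parent.mkdir(parents=True, exist_ok=True)
        with log_path.open("a", encoding="utf-8") as handle:
            handle.write(json.dumps(record, default=str) + "\n")


with tempfile.TemporaryDirectory() as tmp:
    log_file = Path(tmp) / "agent_runs" / "run.jsonl"
    traced("research_gather", lambda topic: [f"note about {topic}"], "solar panels", log_file)
    print(log_file.read_text(encoding="utf-8"))
```

One line per call keeps the file easy to grep, and because the write happens in the finally block, failed calls get logged too.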

Is $29 Worth It? Honest Assessment

Here's my honest take: if you value your time at more than about $5/hour, yes. The amount of time you'll save not debugging basic configuration issues is easily worth it. You'll probably save 10-20 hours in your first week alone, and those are frustrating hours — the kind that make people quit before they build anything useful.
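If you want to run the numbers for your own situation, the break-even math is one division. A quick Python sketch follows; the hours-saved figures are just inputs to play with, not measurements.

```python
PRICE_USD = 29.0


def break_even_hourly_rate(hours_saved: float) -> float:
    """Hourly value of your time at which the purchase pays for itself."""
    return PRICE_USD / hours_saved


for hours in (6, 10, 20):
    rate = break_even_hourly_rate(hours)
    print(f"{hours} hours saved -> break-even at ${rate:.2f}/hour")
```

At about six hours saved, the break-even is a little under $5/hour. At 10 to 20 hours, it drops to between $2.90 and $1.45/hour.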

If you're on an extremely tight budget and have plenty of free time, you can absolutely figure this all out from the OpenClaw documentation and community resources. It's all learnable. The starter pack is a shortcut, not a requirement.

But for $29? If you're serious about actually building with OpenClaw and not just tinkering, Felix's OpenClaw Starter Pack is one of the better investments you'll make getting started. It'll get you to "I have a working agent that does something useful" in an afternoon instead of a week.

What to Do Next

- If you're brand new to OpenClaw: Grab the starter pack, run the pre-built skills, and follow the modification workflow I outlined above.

- If you've been dabbling but struggling with reliability: Compare your configs to the starter pack's configs. I'd bet money your issues are in missing guardrails, insufficient error handling, or lack of output schemas.
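For the config comparison, you don't have to eyeball two YAML files side by side. Here's a small Python sketch that lists keys present in a reference config but missing from yours, assuming both are already loaded as nested dicts. missing_keys is a hypothetical helper, and the reference values below are borrowed from the example agent config earlier in this post, not from the starter pack itself.

```python
from typing import Any


def missing_keys(reference: dict[str, Any], candidate: dict[str, Any], prefix: str = "") -> list[str]:
    """List dotted key paths present in reference but absent from candidate."""
    missing: list[str] = []
    for key, ref_value in reference.items():
        path = f"{prefix}{key}"
        if key not in candidate:
            missing.append(path)
        elif isinstance(ref_value, dict) and isinstance(candidate[key], dict):
            # Recurse into nested sections such as error_handling.
            missing.extend(missing_keys(ref_value, candidate[key], prefix=f"{path}."))
    return missing


reference = {
    "agent": {
        "max_total_iterations": 15,
        "cost_ceiling": 0.50,
        "error_handling": {"on_skill_failure": "retry_then_skip", "max_consecutive_errors": 3},
    },
    "output_schema": {"type": "object"},
}
mine = {"agent": {"max_total_iterations": 50, "error_handling": {"on_skill_failure": "retry_then_skip"}}}

print(missing_keys(reference, mine))
# ['agent.cost_ceiling', 'agent.error_handling.max_consecutive_errors', 'output_schema']
```

It only reports missing keys, not differing values, which is usually what you want first: a missing guardrail tends to hurt more than a guardrail set to a different number.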

- If you're already building successfully: You probably don't need it. But it might still be worth looking at to see if there are configuration patterns you haven't considered.

The biggest mistake I see in the AI agent space right now is people spending all their time on setup and none on actually building useful things. Whatever gets you past the configuration phase fastest — whether that's a starter pack, a friend's repo, or sheer stubbornness — is the right choice. For most people, the starter pack is the fastest path from zero to something real.

Now stop reading blog posts and go build something.