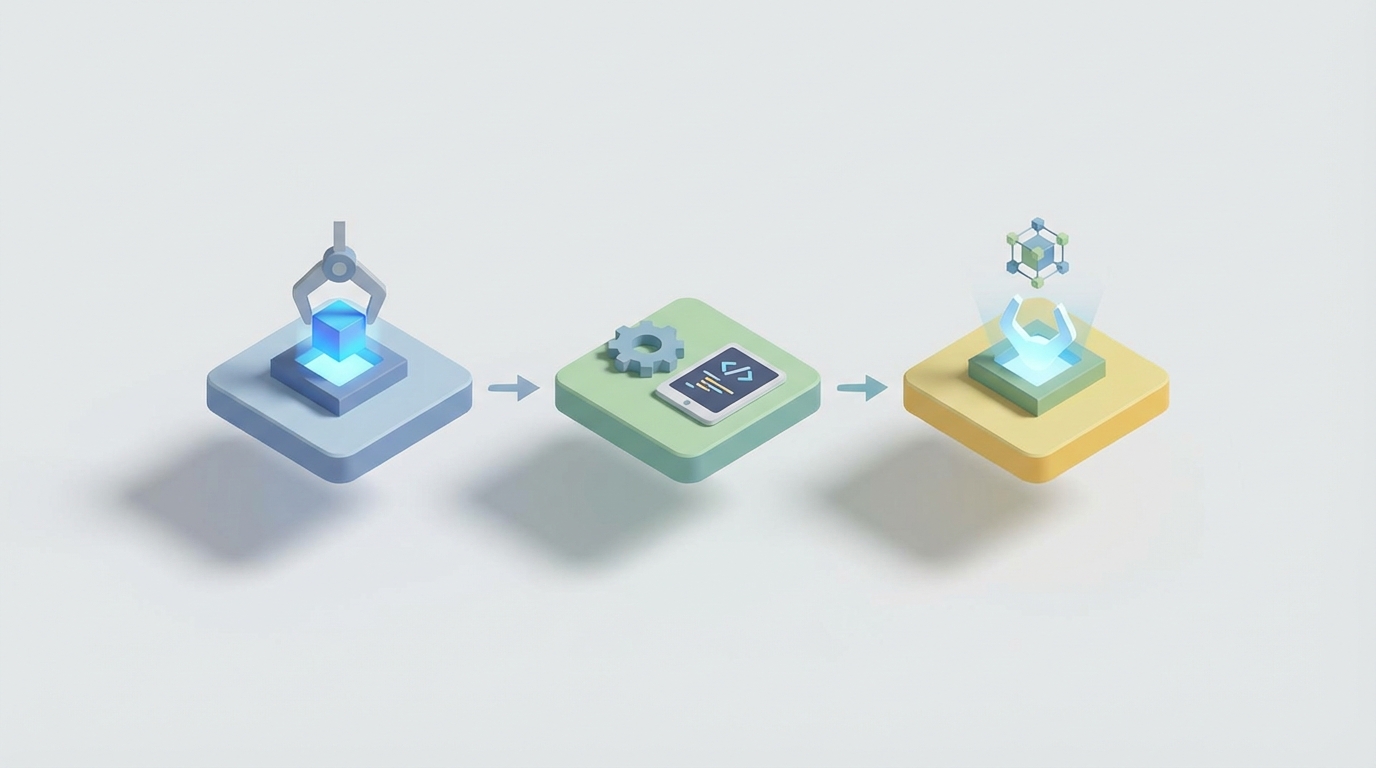

Create Your First OpenClaw Skill: Step-by-Step

Look, I'm going to save you about six hours of frustration.

Creating your first skill in OpenClaw should be straightforward. The documentation gives you the basics, the concept makes sense, and you think you'll have something working in twenty minutes. Then the LLM ignores your skill entirely, or calls it with hallucinated arguments, or gets stuck in a retry loop that burns through your tokens like kindling. You close your laptop, question your career choices, and come back the next day to try again.

I've been there. Most people building with OpenClaw have been there. The good news is that once you understand why skills behave the way they do — and how OpenClaw's architecture actually expects you to build them — the whole thing clicks. You go from fighting the framework to having it work for you.

This post is the guide I wish I had when I created my first OpenClaw skill. We're going to build one from scratch, step by step, and I'm going to explain the decisions along the way so you actually understand what's happening instead of just copying code and praying.

Why Most First Skills Fail

Before we write a single line, let's talk about why your first attempt probably didn't work (or why it won't, if you haven't tried yet).

The number one reason skills fail in any agent framework — OpenClaw included — is that developers treat them like regular functions. You write a Python function, slap a decorator on it, and expect the LLM to figure out when and how to call it. That works in demos. It does not work in production.

Here's what actually happens when an OpenClaw agent encounters your skill:

- The agent reads the skill's schema — the name, description, and parameter definitions.

- Based on the user's request and the available skills, the agent decides whether to invoke yours.

- If it does, it generates arguments based on the schema.

- Your skill executes and returns a result.

- The agent incorporates that result into its next action.

Every single one of those steps is a potential failure point. The agent might not understand your description. It might generate wrong arguments. Your skill might return an error the agent can't recover from. Or the result might be so verbose that the agent loses track of what it was doing.
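
To make those five steps concrete, here's a toy, framework-independent sketch of the loop in plain Python. None of this is OpenClaw's actual API: `ToySkill`, `choose_skill`, and `run_turn` are names I made up for illustration, and the keyword match is only a stand-in for the model's selection step.

```python
import re
from dataclasses import dataclass
from typing import Any, Callable, Optional


@dataclass
class ToySkill:
    """Hypothetical stand-in for a skill: what the model sees, plus a handler."""
    name: str
    description: str
    keywords: set[str]            # placeholder for the model's intent matching
    params: dict[str, type]       # parameter name -> expected type
    handler: Callable[..., dict[str, Any]]


def choose_skill(message: str, skills: list[ToySkill]) -> Optional[ToySkill]:
    # Steps 1-2: look at each skill and decide whether one applies.
    # A real agent asks the LLM; keyword overlap is only a placeholder.
    words = set(re.findall(r"[a-z]+", message.lower()))
    for skill in skills:
        if words & skill.keywords:
            return skill
    return None


def run_turn(message: str, skills: list[ToySkill], proposed_args: dict[str, Any]) -> dict[str, Any]:
    skill = choose_skill(message, skills)
    if skill is None:
        return {"ok": False, "error": "no matching skill"}
    # Step 3: the model proposes arguments; check them against the schema.
    for name, expected in skill.params.items():
        if name not in proposed_args:
            return {"ok": False, "error": f"missing argument: {name}"}
        if not isinstance(proposed_args[name], expected):
            return {"ok": False, "error": f"bad type for argument: {name}"}
    # Step 4: execute with only the declared parameters.
    result = skill.handler(**{name: proposed_args[name] for name in skill.params})
    # Step 5: hand a structured result back for the agent's next action.
    return {"ok": True, "skill": skill.name, "result": result}
```

Each early `return` in `run_turn` corresponds to one of the failure points above, which is why each one hands back a message the agent can act on.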

OpenClaw gives you tools to handle all of this. But you have to actually use them.

Setting Up Your Environment

First things first. Make sure you have OpenClaw installed and a project initialized:

    pip install openclaw
    openclaw init my-first-skill-project
    cd my-first-skill-project

This gives you a project structure that looks like:

    my-first-skill-project/
    ├── skills/
    │   └── __init__.py
    ├── agents/
    │   └── default_agent.py
    ├── openclaw.config.yaml
    └── tests/
        └── __init__.py

The skills/ directory is where your custom skills live. The openclaw.config.yaml file is where you register them and configure how the agent discovers and uses them.

Building the Skill: A Real Example

Let's build something actually useful instead of a toy example. We're going to create a skill that checks the status of a website and returns structured health information. This is the kind of thing you'd want in a monitoring agent or an ops assistant.

Step 1: Define the Schema First (Not the Function)

This is the most important mindset shift. Start with the schema, not the implementation.

The schema is what the LLM sees. It's the contract between your skill and the agent. If the schema is bad, it doesn't matter how brilliant your implementation is.

Create a new file at skills/website_health.py:

    from typing import Optional

    from openclaw.skills import Skill
    from pydantic import BaseModel, Field


    class WebsiteHealthInput(BaseModel):
        """Input parameters for checking website health."""

        url: str = Field(
            description="The full URL to check, including https://. Example: https://example.com"
        )
        # A plain int (not Optional[int]) so that None is rejected: the handler
        # multiplies this value. ge/le enforce the limit stated in the description.
        timeout_seconds: int = Field(
            default=10,
            ge=1,
            le=30,
            description="Maximum seconds to wait for a response. Defaults to 10. Do not set higher than 30."
        )


    class WebsiteHealthOutput(BaseModel):
        """Structured result of a website health check."""

        url: str
        status_code: int
        response_time_ms: float
        is_healthy: bool
        error_message: Optional[str] = None

Notice a few things:

- The input model has explicit, detailed descriptions. Not just "the URL" but "the full URL including https://." This matters enormously. LLMs are literal and lazy. If you say "url," you might get example.com without the protocol, and your HTTP request will fail.

- Default values are set in the schema. Don't make the agent guess what reasonable defaults are. It will guess wrong.

- There's a constraint in the description ("Do not set higher than 30"). OpenClaw's schema enforcement helps here, but adding it to the description provides a belt-and-suspenders approach.

- The output model is structured. Don't return raw strings. Structured output lets the agent parse and reason about the result reliably.
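
Even with a careful description, I like to defend against the bare-host case in code as well. Here's a minimal sketch using only the standard library. `normalize_url` and `clamp_timeout` are hypothetical helpers of my own, not part of OpenClaw, and defaulting to HTTPS is an assumption you may not want.

```python
from typing import Optional
from urllib.parse import urlparse

MAX_TIMEOUT_SECONDS = 30  # mirrors the limit stated in the field description


def normalize_url(raw: str) -> str:
    """Return a URL with an explicit scheme, or raise ValueError if unusable."""
    candidate = raw.strip()
    if "://" not in candidate:
        # The model sent a bare host such as "example.com"; assume HTTPS.
        candidate = "https://" + candidate
    parsed = urlparse(candidate)
    if parsed.scheme not in ("http", "https") or not parsed.netloc:
        raise ValueError(f"not a usable http(s) URL: {raw!r}")
    return candidate


def clamp_timeout(seconds: Optional[int], default: int = 10) -> int:
    """Keep the timeout within 1..MAX_TIMEOUT_SECONDS; None falls back to the default."""
    if seconds is None:
        return default
    return max(1, min(int(seconds), MAX_TIMEOUT_SECONDS))
```

You could call these at the top of a handler, or from a validator on the input model. Either way the skill tolerates the mistakes the model is most likely to make instead of failing on them.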

Step 2: Write the Implementation

Now we write the actual function. This part is straightforward Python:

    import time

    import httpx


    async def check_website_health(params: WebsiteHealthInput) -> WebsiteHealthOutput:
        """
        Checks whether a website is responding and returns health metrics.

        Use this skill when the user asks about website status, uptime,
        or whether a site is down.
        """
        try:
            # monotonic() is immune to system clock adjustments, so it is safe for timing.
            start_time = time.monotonic()
            async with httpx.AsyncClient() as client:
                response = await client.get(
                    params.url,
                    timeout=params.timeout_seconds,
                    follow_redirects=True
                )
                elapsed_ms = (time.monotonic() - start_time) * 1000

            return WebsiteHealthOutput(
                url=params.url,
                status_code=response.status_code,
                response_time_ms=round(elapsed_ms, 2),
                is_healthy=response.status_code < 400,
                error_message=None
            )
        # TimeoutException is a subclass of RequestError, so it must be caught first.
        except httpx.TimeoutException:
            return WebsiteHealthOutput(
                url=params.url,
                status_code=0,  # 0 signals "no HTTP response received"
                response_time_ms=params.timeout_seconds * 1000,
                is_healthy=False,
                error_message=f"Request timed out after {params.timeout_seconds} seconds"
            )
        except httpx.RequestError as e:
            return WebsiteHealthOutput(
                url=params.url,
                status_code=0,
                response_time_ms=0,
                is_healthy=False,
                error_message=f"Connection error: {str(e)}"
            )

Critical detail: the function always returns a valid output, even on errors. This is the single biggest mistake I see people make with OpenClaw skills. If your skill throws an unhandled exception, the agent has to figure out what went wrong from a raw traceback. It can't. It will either hallucinate a response or get stuck in a retry loop.

Always catch errors and return them as structured data. Let the agent decide what to do with an error message; don't force it to parse a stack trace.
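
If you want a safety net for exceptions you didn't anticipate, one option is a small wrapper around the handler. This is a generic sketch in plain Python, not an OpenClaw feature: `SkillResult` and `never_raise` are hypothetical names, and `parse_port` is just a tiny handler to demonstrate with.

```python
import asyncio
import functools
from dataclasses import dataclass
from typing import Any, Awaitable, Callable, Optional


@dataclass
class SkillResult:
    """Hypothetical envelope: either data or an error message, never a traceback."""
    ok: bool
    data: Optional[dict[str, Any]] = None
    error_message: Optional[str] = None


def never_raise(handler: Callable[..., Awaitable[dict[str, Any]]]):
    """Wrap an async handler so that any exception becomes a structured error."""
    @functools.wraps(handler)
    async def wrapper(*args: Any, **kwargs: Any) -> SkillResult:
        try:
            return SkillResult(ok=True, data=await handler(*args, **kwargs))
        except asyncio.CancelledError:
            raise  # let task cancellation propagate untouched
        except Exception as exc:
            # Keep the message short: exception type plus its text, no stack trace.
            return SkillResult(ok=False, error_message=f"{type(exc).__name__}: {exc}")
    return wrapper


@never_raise
async def parse_port(text: str) -> dict[str, Any]:
    # Raises ValueError on non-numeric input; the wrapper turns that into data.
    return {"port": int(text)}
```

Explicit `except` clauses for the failures you expect are still better, because they let you write messages the agent can act on. The wrapper only covers the failures you didn't think of.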

Step 3: Register the Skill

Now wire it all together using OpenClaw's Skill class:

    website_health_skill = Skill(
        name="check_website_health",
        description=(
            "Checks if a website is online and responsive. Returns the HTTP status code, "
            "response time in milliseconds, and whether the site is healthy. "
            "Use this when the user asks if a website is up, down, slow, or having issues."
        ),
        input_model=WebsiteHealthInput,
        output_model=WebsiteHealthOutput,
        handler=check_website_health,
        retry_policy={
            "max_retries": 2,
            "backoff": "exponential",
            "retry_on": ["timeout", "connection_error"]
        }
    )

A few things to call out here:

The description is the most important field. This is what the agent reads to decide whether to use your skill. Write it like you're explaining to a smart but literal colleague when this tool should be used. Include the "when to use" context — "when the user asks if a website is up, down, slow, or having issues" — because it helps the agent match user intent to skill selection.

The retry_policy is built into OpenClaw's skill layer. This is one of the things that makes OpenClaw genuinely better than rolling your own. Instead of the agent deciding to retry (and potentially doing it wrong), the framework handles retries at the skill execution level with sensible policies. Exponential backoff, specific error conditions, configurable max retries. You declare the policy, OpenClaw enforces it.
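
If you've never written retry logic by hand, it helps to see what a policy like this amounts to. The sketch below is my own plain-asyncio approximation, not OpenClaw's implementation. `retry_with_backoff`, its parameters, and the choice of built-in exception types are all assumptions made for illustration.

```python
import asyncio
from typing import Awaitable, Callable, TypeVar

T = TypeVar("T")


async def retry_with_backoff(
    call: Callable[[], Awaitable[T]],
    max_retries: int = 2,
    base_delay: float = 0.5,
    retry_on: tuple[type[BaseException], ...] = (TimeoutError, ConnectionError),
) -> T:
    """Run `call`, retrying up to `max_retries` times on the listed exceptions."""
    attempt = 0
    while True:
        try:
            return await call()
        except retry_on:
            if attempt >= max_retries:
                raise  # retries exhausted: surface the last error
            # The delay doubles on each retry: base, 2 * base, 4 * base, ...
            await asyncio.sleep(base_delay * (2 ** attempt))
            attempt += 1
```

With `max_retries=2` the call runs at most three times. Exceptions that aren't listed in `retry_on` propagate immediately, which is what you want for errors that a retry can't fix.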

Step 4: Add the Skill to Your Agent Config

Update your openclaw.config.yaml:

    agent:
      name: ops-assistant
      model: gpt-4o
      skills:
        - skills.website_health.website_health_skill
      skill_selection:
        strategy: semantic_match
        confidence_threshold: 0.7
      observability:
        log_level: debug
        trace_skill_calls: true

The skill_selection block is worth lingering on. semantic_match with a confidence_threshold of 0.7 means the agent needs to be at least 70% confident that a skill matches the user's intent before it calls it. This prevents the maddening problem of agents calling random skills on ambiguous requests. If confidence is below the threshold, the agent will ask for clarification instead of guessing.
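
The threshold logic itself is simple enough to sketch. The code below only illustrates the gating idea. How the scores are produced is out of scope here, and `select_skill` and `next_action` are hypothetical names, not OpenClaw functions.

```python
from typing import Optional


def select_skill(scores: dict[str, float], threshold: float = 0.7) -> Optional[str]:
    """Return the best-scoring skill name, or None if nothing clears the threshold."""
    if not scores:
        return None
    best_name = max(scores, key=scores.get)
    return best_name if scores[best_name] >= threshold else None


def next_action(scores: dict[str, float], threshold: float = 0.7) -> str:
    """Either call the selected skill or fall back to asking the user."""
    chosen = select_skill(scores, threshold)
    if chosen is None:
        return "ask_for_clarification"
    return f"call:{chosen}"
```

Raising the threshold trades missed calls for fewer wrong calls. If your agent keeps asking for clarification on requests that seem obvious, the description is usually the thing to fix before you lower the number.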

And trace_skill_calls: true means every skill invocation gets logged with the full decision trace — what the agent saw, why it chose the skill, what arguments it generated, and what result it got back. This alone will save you hours of debugging.
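
To give a feel for what a per-call trace record might contain, here's a rough sketch using the standard `logging` and `json` modules. The field names are my guesses rather than OpenClaw's trace format, and `traced_call` is a hypothetical helper. It captures only the execution side, not the model's reasoning for picking the skill.

```python
import json
import logging
import time
from typing import Any, Callable

logger = logging.getLogger("skill_trace")


def traced_call(skill_name: str, handler: Callable[..., dict[str, Any]], **arguments: Any) -> dict[str, Any]:
    """Run a handler and log one JSON record describing the call."""
    started = time.monotonic()
    result = handler(**arguments)
    record = {
        "skill": skill_name,
        "arguments": arguments,
        "result": result,
        "duration_ms": round((time.monotonic() - started) * 1000, 2),
    }
    # default=str keeps logging from failing on values JSON cannot encode.
    logger.debug(json.dumps(record, default=str))
    return record
```

One JSON object per call is easy to grep and easy to ship to whatever log tooling you already use.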

Step 5: Test Before You Deploy

OpenClaw includes a testing harness specifically for skills. Use it:

    # tests/test_website_health.py
    from openclaw.testing import SkillTestHarness

    from skills.website_health import website_health_skill


    def test_healthy_site():
        harness = SkillTestHarness(website_health_skill)
        result = harness.simulate_call(
            user_message="Is google.com working right now?",
            expected_params={"url": "https://google.com", "timeout_seconds": 10}
        )
        assert result.skill_was_selected
        assert result.parsed_params["url"] == "https://google.com"


    def test_ambiguous_request():
        harness = SkillTestHarness(website_health_skill)
        result = harness.simulate_call(
            user_message="What's the weather like?"
        )
        assert not result.skill_was_selected


    def test_error_handling():
        harness = SkillTestHarness(website_health_skill)
        result = harness.invoke_directly(
            params={"url": "https://definitely-not-a-real-site-12345.com", "timeout_seconds": 5}
        )
        assert not result.output.is_healthy
        assert result.output.error_message is not None

The simulate_call method is gold. It simulates what happens when a user sends a message and checks whether the agent would select your skill and what parameters it would extract. You can test skill selection logic without burning API credits on full agent runs.

Run the tests:

    openclaw test

Common Mistakes (and How to Avoid Them)

After building a bunch of skills, here are the patterns that consistently cause problems:

Too many skills at once. In my experience, once an agent has more than 8-10 skills, selection accuracy drops noticeably. OpenClaw's skill grouping feature lets you organize skills into logical toolkits so the agent first picks a category, then a specific skill. Use it.

    skill_groups:
      - name: monitoring
        description: "Tools for checking system and website health"
        skills:
          - skills.website_health.website_health_skill
          - skills.server_status.server_status_skill
      - name: communication
        description: "Tools for sending messages and notifications"
        skills:
          - skills.send_email.send_email_skill
          - skills.send_slack.send_slack_skill
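
The "category first, then skill" idea can be sketched as two-stage routing. This is a toy illustration only. Keyword overlap stands in for whatever matching the framework really does, and `GROUPS`, `overlap`, and `route` are hypothetical names.

```python
import re
from typing import Optional

# Toy registry: each group and each skill gets a few keywords instead of a description.
GROUPS = {
    "monitoring": {
        "keywords": {"website", "site", "server", "down", "up", "health"},
        "skills": {
            "website_health_skill": {"website", "site", "url"},
            "server_status_skill": {"server", "cpu", "memory"},
        },
    },
    "communication": {
        "keywords": {"send", "email", "slack", "message", "notify"},
        "skills": {
            "send_email_skill": {"email", "mail"},
            "send_slack_skill": {"slack", "channel"},
        },
    },
}


def overlap(query: str, keywords: set[str]) -> int:
    """Count how many keywords appear as whole words in the query."""
    return len(set(re.findall(r"[a-z]+", query.lower())) & keywords)


def route(query: str) -> Optional[tuple[str, str]]:
    """Stage 1 picks a group; stage 2 picks a skill inside that group only."""
    group = max(GROUPS, key=lambda g: overlap(query, GROUPS[g]["keywords"]))
    if overlap(query, GROUPS[group]["keywords"]) == 0:
        return None
    skills = GROUPS[group]["skills"]
    skill = max(skills, key=lambda s: overlap(query, skills[s]))
    if overlap(query, skills[skill]) == 0:
        return None
    return group, skill
```

The point is that stage 2 compares two candidates instead of every skill in the project, so the choice at each stage stays small as the skill count grows.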

Vague descriptions. "Checks a website" is bad. "Checks if a website is online and responsive by making an HTTP GET request. Returns status code, response time, and health status. Use when user asks about uptime, availability, or site performance" is good. More specific descriptions mean more accurate skill selection.

Returning too much data. If your skill returns a 50KB JSON blob, the agent's context window fills up fast, and it loses track of the original task. Return only what the agent needs to make a decision or report to the user.
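
One simple way to enforce this is to whitelist fields and truncate long strings before returning. Here's a minimal sketch. `compact_result` is a hypothetical helper, and the 200-character limit is an arbitrary number picked for the example.

```python
from typing import Any


def compact_result(payload: dict[str, Any], keep: list[str], max_chars: int = 200) -> dict[str, Any]:
    """Keep only the listed fields and truncate long string values."""
    compact: dict[str, Any] = {}
    for key in keep:
        if key not in payload:
            continue
        value = payload[key]
        if isinstance(value, str) and len(value) > max_chars:
            # Mark the cut so the agent knows the value is incomplete.
            value = value[:max_chars] + "...[truncated]"
        compact[key] = value
    return compact
```

For the health check, that might mean keeping `status_code` and `error_message` and dropping the response body and headers entirely.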

Not handling auth properly. If your skill needs API keys or credentials, use OpenClaw's secrets manager instead of hardcoding them or passing them as parameters:

    from openclaw.secrets import get_secret

    api_key = get_secret("MONITORING_API_KEY")

This keeps secrets out of the prompt, out of logs, and out of the agent's reasoning chain.
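
If you're prototyping outside the framework, the same principle works with environment variables. The sketch below is a stand-in of my own, not how OpenClaw's secrets manager works internally. `load_secret`, `mask`, and `MissingSecretError` are hypothetical names.

```python
import os


class MissingSecretError(RuntimeError):
    """Raised when a required secret is not configured."""


def load_secret(name: str) -> str:
    """Read a secret from the environment and fail loudly if it is absent."""
    value = os.environ.get(name, "")
    if not value:
        # Name the variable but never echo a value in the error message.
        raise MissingSecretError(f"secret {name} is not set")
    return value


def mask(secret: str, visible: int = 4) -> str:
    """Return a log-safe form that shows only the last few characters."""
    if len(secret) <= visible:
        return "*" * len(secret)
    return "*" * (len(secret) - visible) + secret[-visible:]
```

The handler reads the secret at call time, so it never has to appear in the skill's input schema where the model could see it or try to fill it in.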

Skip the Setup: Felix's OpenClaw Starter Pack

I want to be real with you — building skills from scratch is the best way to learn how OpenClaw works. But if you're trying to ship something this week and you don't want to spend three days writing schemas, debugging selection logic, and configuring retry policies, there's a shortcut.

Felix's OpenClaw Starter Pack on Claw Mart is a $29 bundle that includes pre-configured skills for the most common use cases — web requests, API integrations, data parsing, file operations, and a few others. Everything comes with tested schemas, proper error handling, retry policies, and the kind of detailed descriptions that actually make skill selection work reliably.

I'm not saying this to be salesy. I'm saying this because I spent about two full weekends getting my first set of skills production-ready, and the majority of that time was spent on the boring but critical stuff: getting descriptions right, handling edge cases in error responses, tuning retry logic. The starter pack has all of that done already. You can use the included skills as-is, or — and this is what I'd actually recommend — crack them open and study how they're built, then modify them for your specific needs. It's the fastest way to learn OpenClaw's patterns by example.

Where to Go from Here

Once you have a working skill, the next steps are:

- Build a second skill that complements the first. An agent with two well-designed skills that work together is more useful than one with ten half-baked skills.

- Add skill composition. OpenClaw lets skills call other skills in a defined chain. Your check_website_health skill could trigger a send_alert skill when a site is down, without the agent having to figure out that chain on its own.

- Set up observability dashboards. OpenClaw's tracing data can be exported to standard observability tools. Once you're running agents in production, you need to see which skills are being called, how often they fail, and where the latency is.

- Write more tests. Specifically, write tests for adversarial inputs: what happens when the user says something ambiguous? What happens when two skills could plausibly handle the same request? Edge case testing catches the problems that demo-driven development misses.

The whole point of OpenClaw is that it gives you structured, reliable control over how agents use tools. But that structure only works if you build skills that take advantage of it. Detailed schemas, structured error handling, explicit retry policies, proper testing — these aren't optional nice-to-haves. They're the difference between a skill that works in your notebook and one that works when real users are depending on it.

Start with one skill. Get it rock solid. Then build from there.