Automate Meta Ads Creative Testing and Winner Selection with AI

Most media buyers spend their weeks doing the same thing: launching creatives, watching dashboards, killing losers, duplicating winners, and repeating the whole cycle again five days later. It's tedious, it's expensive, and the worst part is that the actual thinking — the strategic work that moves the needle — gets buried under hours of mechanical drudgery inside Ads Manager.

The good news: most of that mechanical work can be automated now. Not with some vaporware "AI marketing suite" that overpromises and underdelivers, but with a practical AI agent you build yourself on OpenClaw, wired directly into the Meta Ads API, your analytics stack, and your creative pipeline.

This post walks through exactly how to do it. No hype. Just the workflow, the architecture, and the specific steps to get from "I'm doing everything manually" to "an agent handles 80% of this while I focus on strategy."

The Manual Workflow (And Why It's Crushing Your Team)

Let's be honest about what creative testing actually looks like in 2026 for a DTC brand or agency doing it properly. Here's the real workflow, with real time estimates:

Step 1: Research and Ideation — 2 to 8 hours

You're browsing the Facebook Ad Library, maybe running through PiPiADS or Minea, studying what competitors are doing, and cross-referencing that with your own historical data. What hooks worked last month? Which formats are trending? What angles haven't you tried yet?

Step 2: Asset Creation — 10 to 40+ hours per testing round

Designers and copywriters produce 10 to 50 variations. Different hooks, thumbnails, text overlays, CTAs, color treatments. If you're running UGC, you're editing multiple cuts of the same video with different opening frames. You're writing three to five versions of primary text, headlines, and descriptions for each concept.

Step 3: Test Setup in Ads Manager — 4 to 12 hours

Creating campaigns, structuring ad sets (usually ABO for cleaner reads), duplicating ads, configuring pixels, UTM parameters, and attribution windows. Many teams avoid Advantage+ for testing because they want cleaner isolation of variables, which means more manual setup work.

Step 4: Monitoring — 3 to 14 days of daily check-ins

You're logging into Ads Manager every day, sometimes twice. Checking CTR, CPA, ROAS, CPM, and frequency. Pausing obvious losers manually. Waiting for enough data to make confident calls. Minimum viable spend per creative is usually $500 to $2,000 depending on your audience size.

Step 5: Analysis and Decision Making — 4 to 10 hours per round

Exporting to Google Sheets. Calculating statistical significance (often with a janky template someone found on Reddit). Trying to figure out why something won — was it the hook, the visual, the offer framing, or just random variance? Then writing up learnings, briefing the next round of creative, and feeding winners into scaling campaigns.

Step 6: Scale and Repeat

Duplicate winning ad sets. Increase budgets. Broaden audiences. Start the whole cycle again.

Add it all up and you're looking at 30 to 70 hours of human work per testing cycle. Serious DTC brands run this every one to two weeks. Agencies managing multiple accounts multiply that across every client.

And here's the kicker from Meta's own data: accounts testing 50+ creative variations per campaign see 30 to 70% higher ROAS than low-volume testers. The game rewards volume. But volume, done manually, is brutally expensive.

What Makes This Painful (Beyond the Hours)

The time cost is obvious. The hidden costs are worse:

Wasted ad spend is the silent killer. Industry consensus is that 60 to 80% of test budget goes to losers. That's by design — you need losers to find winners. But slow reaction times mean you're spending $200 on a creative that was clearly dead after $50.

Creative fatigue is accelerating. Winning ads used to have a shelf life of a few months. Now it's 2 to 6 weeks before performance degrades. That means your testing velocity has to keep pace, or you're constantly running stale creative.

Analysis paralysis is real. When you're testing 30 variations across multiple audiences, isolating what actually drove results gets complicated fast. Was it the hook? The thumbnail? The background color? The offer? Most teams make gut calls and move on, which means they're leaving learnings on the table.

Statistical significance is often faked. Small brands can't afford to spend enough to reach true significance quickly. So they call tests early, make decisions based on noisy data, and end up scaling creatives that were never actually proven winners.

Speed compounds everything. A competitor can copy your winning creative within days. Every week you spend in a slow testing cycle is a week your best-performing concepts are getting replicated without you having the next thing ready.

The fundamental problem: creative testing rewards speed and volume, but the manual workflow is slow and resource-intensive. Something has to give.

What AI Can Actually Handle Right Now

Let's be specific about what's automatable today — not in theory, but in practice with current tools and APIs.

High-automation, high-ROI tasks:

- Creative variant generation. Given a winning ad or a product image, generating 20 to 100 variations with different hooks, text overlays, CTAs, and format treatments. This is what tools like AdCreative.ai and Pencil do, and it's straightforward to orchestrate with an OpenClaw agent that calls image and video generation APIs.

- Test setup and launch. The Meta Marketing API supports programmatic campaign creation, ad set configuration, and ad upload. An agent can take a batch of approved creatives and spin up a properly structured test campaign in minutes instead of hours.

- Performance monitoring and auto-rules. Checking metrics every hour, pausing creatives that hit a CPA ceiling or fall below a CTR floor, and reallocating budget to performers. Revealbot does this as a standalone tool, but building it into your agent means the logic is unified with everything else.

- Early winner prediction. After 200 to 500 impressions, pattern recognition can flag likely winners and losers with surprising accuracy. This is where having an AI agent that correlates creative features with performance data starts to pay off.

- Element-level analysis. Breaking down which components of a winning creative drove results (the hook style, the color palette, the CTA language, the video pacing) and feeding that back into the next generation cycle.

- Reporting and insight synthesis. Instead of exporting CSVs and building pivot tables, the agent pulls data, runs the analysis, and delivers a summary: "Here are your 3 winners, here's why they won, here's what to test next."
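To make the "early winner prediction" idea concrete, here is a minimal sketch of one simple way to flag ads early: put a Wilson score interval around each ad's CTR and compare it to a CTR floor. This is a swapped-in statistical technique for illustration, not a description of how any particular tool does it, and the function names and the 2% floor are assumptions.

```python
import math


def wilson_interval(successes: int, trials: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a binomial proportion (z=1.96 is roughly 95%)."""
    if trials == 0:
        return (0.0, 1.0)  # no data yet, so the rate could be anything
    p = successes / trials
    denom = 1 + z**2 / trials
    center = (p + z**2 / (2 * trials)) / denom
    margin = z * math.sqrt(p * (1 - p) / trials + z**2 / (4 * trials**2)) / denom
    return (max(0.0, center - margin), min(1.0, center + margin))


def early_flag(clicks: int, impressions: int, ctr_floor: float) -> str:
    """Flag an ad only when the whole interval sits on one side of the floor."""
    low, high = wilson_interval(clicks, impressions)
    if high < ctr_floor:
        return "likely_loser"
    if low > ctr_floor:
        return "likely_winner"
    return "undecided"


# Hypothetical numbers: three ads measured against an assumed 2% CTR floor
print(early_flag(1, 500, 0.02))   # interval entirely below the floor
print(early_flag(30, 500, 0.02))  # interval entirely above the floor
print(early_flag(8, 400, 0.02))   # interval straddles the floor
```

At a few hundred impressions the intervals are wide, so most ads will come back "undecided". That is the point: the check only speaks up when the data is lopsided. It also looks at CTR alone, so treat it as a first filter rather than a verdict on CPA or ROAS.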

What still needs a human (we'll cover this in detail later):

- Strategic direction and positioning

- Brand voice and creative quality control

- Legal and compliance review

- Final approval before launch

- Business context decisions

Step by Step: Building the Automation on OpenClaw

Here's how to architect this as an AI agent on OpenClaw. I'll break it down into modules so you can implement incrementally rather than trying to build the whole thing at once.

Module 1: Data Ingestion and Performance Monitoring

Your agent needs to pull performance data from Meta on a regular cadence. OpenClaw lets you set up scheduled tasks that call the Meta Marketing API and store results.

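```python
# OpenClaw agent module: Meta Ads performance pull
# Runs every 4 hours during active test periods
# NOTE: meta_api and parse_ad_data are placeholders for your own Meta Marketing
# API wrapper and response parser. They are not calls from an official SDK.

def pull_ad_performance(campaign_ids, date_range="last_7d"):
    """
    Pulls ad-level performance data from Meta Marketing API.
    Returns structured data: ad_id, adset_id, spend, impressions, clicks,
    ctr, cpc, cpa, roas, conversions, frequency
    """
    metrics = [
        "ad_id", "adset_id",
        "spend", "impressions", "clicks", "ctr", "cpc",
        "cost_per_action_type", "purchase_roas", "frequency"
    ]
    results = []
    for campaign_id in campaign_ids:
        ads = meta_api.get_ads(
            campaign_id=campaign_id,
            fields=metrics,
            date_preset=date_range,
            level="ad"
        )
        results.extend(parse_ad_data(ads))
    return results


def evaluate_ad_performance(ad_data, rules):
    """
    Applies performance rules to each ad.
    Returns actions: pause, scale, or monitor
    (a "graduate" action is not implemented in this sketch).
    Ads at or below the minimum spend get no action yet.
    """
    actions = []
    for ad in ad_data:
        if ad["spend"] <= rules["min_spend_for_decision"]:
            continue  # too little data to judge this ad
        # Carry adset_id along: budgets are changed on the ad set, not the ad
        action = {"ad_id": ad["id"], "adset_id": ad["adset_id"]}
        cpa = ad.get("cpa")  # None when the ad has spend but no conversions
        if cpa is None or cpa > rules["max_cpa"]:
            action.update(action="pause", reason="cpa_above_max")
        elif ad["roas"] > rules["min_roas_to_scale"]:
            action.update(action="scale", reason="roas_above_target")
        else:
            action.update(action="monitor", reason="within_thresholds")
        actions.append(action)
    return actions
```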
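The code above leans on a `parse_ad_data` helper that isn't shown. Here is a rough sketch of what it might look like, assuming the insights rows arrive as dicts with numbers encoded as strings and per-action metrics as lists of `action_type`/`value` pairs. That response shape, and the `purchase` and `omni_purchase` action types, are assumptions you should check against the API version and pixel setup you actually use.

```python
def _first_value(entries, action_type):
    """Return the float value for one action_type, or None if it is absent."""
    for entry in entries or []:
        if entry.get("action_type") == action_type:
            return float(entry["value"])
    return None


def parse_ad_data(rows, cpa_action="purchase", roas_action="omni_purchase"):
    """Flatten raw insights rows into the dicts the evaluation step expects."""
    parsed = []
    for row in rows:
        parsed.append({
            "id": row["ad_id"],
            "adset_id": row.get("adset_id"),
            "spend": float(row.get("spend", 0)),
            "impressions": int(row.get("impressions", 0)),
            "clicks": int(row.get("clicks", 0)),
            "ctr": float(row.get("ctr", 0)),
            "cpc": float(row.get("cpc", 0)),
            "frequency": float(row.get("frequency", 0)),
            # CPA stays None when there are no conversions: it is undefined, not zero
            "cpa": _first_value(row.get("cost_per_action_type"), cpa_action),
            # ROAS falls back to 0.0 because no purchases means no revenue
            "roas": _first_value(row.get("purchase_roas"), roas_action) or 0.0,
        })
    return parsed
```

Keeping "no conversions" as `None` rather than `0` matters downstream: a CPA of zero would look like the best ad in the account, when it is really an ad that has spent money and sold nothing.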

The key here is defining your rules upfront. Your agent should have configurable thresholds: maximum CPA before auto-pause, minimum ROAS before auto-scale, minimum spend before any decision is made, and a lookback window for statistical confidence.

On OpenClaw, you configure these as agent parameters, which means you can adjust them without rewriting logic — just update the configuration and the agent adapts.
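As a sketch of what "thresholds as configuration" can look like in plain Python, the snippet below keeps the four rules in one validated object and lets a JSON override change them without touching the decision logic. The `RuleConfig` name and every default value are illustrative assumptions, not OpenClaw's parameter format.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class RuleConfig:
    """Illustrative thresholds; tune every number to your own account."""
    max_cpa: float = 40.0                 # pause above this cost per acquisition
    min_roas_to_scale: float = 2.5        # scale above this return on ad spend
    min_spend_for_decision: float = 50.0  # no decisions before this much spend
    lookback_days: int = 7                # window the metrics are read over

    def __post_init__(self):
        if self.max_cpa <= 0 or self.min_roas_to_scale <= 0:
            raise ValueError("max_cpa and min_roas_to_scale must be positive")
        if self.min_spend_for_decision < 0 or self.lookback_days < 1:
            raise ValueError("min_spend_for_decision >= 0 and lookback_days >= 1 required")


def load_rules(raw_json: str) -> dict:
    """Merge JSON overrides onto the defaults and return a plain rules dict."""
    overrides = json.loads(raw_json)
    return asdict(RuleConfig(**overrides))  # unknown keys raise TypeError


rules = load_rules('{"max_cpa": 35, "min_spend_for_decision": 75}')
print(rules)
```

Validating at load time means a typo like a negative CPA ceiling fails loudly when the config changes, rather than quietly pausing every ad on the next run.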

Module 2: Auto-Pause and Budget Reallocation

Once the monitoring module flags losers, the agent takes action:

```python
# NOTE: meta_api and log_action are placeholders for your own API wrapper and logger.

def execute_actions(actions, scaling_increment=1.2):
    """
    Executes pause/scale decisions via Meta Marketing API.
    Logs all actions for audit trail.
    """
    for action in actions:
        if action["action"] == "pause":
            meta_api.update_ad(
                ad_id=action["ad_id"],
                status="PAUSED"
            )
            log_action(action["ad_id"], "paused", reason=action.get("reason"))
        elif action["action"] == "scale":
            # Each scale action must carry an "adset_id": budgets live on the ad set
            current_budget = meta_api.get_adset_budget(action["adset_id"])
            # Budgets are whole numbers in the currency's minor unit (e.g. cents),
            # so round instead of sending a float
            new_budget = int(round(current_budget * scaling_increment))
            meta_api.update_adset(
                adset_id=action["adset_id"],
                daily_budget=new_budget
            )
            log_action(action["ad_id"], "scaled",
                       old_budget=current_budget, new_budget=new_budget)
```

This alone — automated pausing and scaling — saves most teams 5 to 10 hours per week and reduces wasted spend significantly. The agent catches losers at 2 AM instead of waiting for your media buyer to check the dashboard at 10 AM.
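One thing to watch with the scaling step: if the agent evaluates every 4 hours and an ad keeps clearing the ROAS bar, a 1.2x increment can fire six times a day, which compounds to roughly 3x the budget in 24 hours. A minimal sketch of a guard, assuming one scale per ad set per cooldown window plus a hard ceiling, might look like this. The `ScaleGuard` class and its defaults are hypothetical.

```python
from datetime import datetime, timedelta


class ScaleGuard:
    """Limits how often and how far an ad set's daily budget may be raised."""

    def __init__(self, cooldown=timedelta(hours=24), max_daily_budget=50_000):
        self.cooldown = cooldown
        self.max_daily_budget = max_daily_budget  # minor units, e.g. cents
        self._last_scaled = {}                    # adset_id -> time of last increase

    def next_budget(self, adset_id, current_budget, increment, now):
        """Return the new budget, or None if the increase should be skipped."""
        last = self._last_scaled.get(adset_id)
        if last is not None and now - last < self.cooldown:
            return None  # already scaled inside the cooldown window
        proposed = min(int(round(current_budget * increment)), self.max_daily_budget)
        if proposed <= current_budget:
            return None  # already at the ceiling
        self._last_scaled[adset_id] = now
        return proposed


guard = ScaleGuard()
start = datetime(2026, 1, 1, 0, 0)
print(guard.next_budget("adset_1", 10_000, 1.2, start))                       # first increase goes through
print(guard.next_budget("adset_1", 12_000, 1.2, start + timedelta(hours=4)))  # blocked by cooldown
```

In this sketch the guard lives in memory, so a restart forgets the cooldowns. A real agent would persist the last-scaled timestamps alongside the audit log.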

Module 3: Creative Analysis and Learning Extraction

This is where OpenClaw's AI capabilities become essential. After each testing round completes, the agent analyzes results at the element level:

```python
# NOTE: openclaw_agent, format_creative_details, and store_insights are
# placeholders for your agent client and your own helpers.

def analyze_creative_elements(completed_test_data, test_round_id):
    """
    Uses OpenClaw's AI to analyze winning vs losing creatives
    and extract element-level insights.
    """
    winners = [ad for ad in completed_test_data if ad["status"] == "winner"]
    losers = [ad for ad in completed_test_data if ad["status"] == "loser"]

    analysis_prompt = f"""
    Analyze these Meta ad test results and identify patterns:

    WINNERS (top performers by ROAS):
    {format_creative_details(winners)}

    LOSERS (paused for poor performance):
    {format_creative_details(losers)}

    For each winning creative, identify:
    1. Hook style (question, statistic, pain point, etc.)
    2. Visual treatment (UGC, product-focused, lifestyle, etc.)
    3. CTA language and placement
    4. Primary text structure and length
    5. Any patterns that distinguish winners from losers

    Output structured recommendations for next test round.
    """

    insights = openclaw_agent.analyze(analysis_prompt)
    # test_round_id is passed in so insights can be stored per round
    store_insights(insights, test_round_id)
    return insights
```

The agent tags each creative with metadata — hook type, visual style, CTA variant, copy length, offer type — and correlates that against performance data over time. After a few test cycles, you start getting genuinely useful pattern recognition: "Problem-solution hooks with UGC-style video and urgency CTAs outperform lifestyle imagery by 2.3x for this audience."
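The correlation step doesn't have to start with anything fancy. As a minimal sketch, assuming each ad record carries a `tags` dict plus `spend` and `revenue` (field names invented for this example, with made-up numbers), you can roll up spend-weighted ROAS per tag value like this:

```python
from collections import defaultdict


def roas_by_tag(ads, tag_key):
    """Aggregate spend-weighted ROAS for each value of one metadata tag."""
    totals = defaultdict(lambda: {"spend": 0.0, "revenue": 0.0, "ads": 0})
    for ad in ads:
        bucket = totals[ad["tags"][tag_key]]
        bucket["spend"] += ad["spend"]
        bucket["revenue"] += ad["revenue"]
        bucket["ads"] += 1
    return {
        tag: {
            "ads": b["ads"],
            "spend": b["spend"],
            # Total revenue over total spend, so big spenders count for more
            "roas": round(b["revenue"] / b["spend"], 2) if b["spend"] else None,
        }
        for tag, b in totals.items()
    }


# Made-up sample data, purely to show the shape of the input
sample_ads = [
    {"tags": {"hook": "problem_solution"}, "spend": 100.0, "revenue": 400.0},
    {"tags": {"hook": "problem_solution"}, "spend": 200.0, "revenue": 500.0},
    {"tags": {"hook": "lifestyle"}, "spend": 100.0, "revenue": 130.0},
]
print(roas_by_tag(sample_ads, "hook"))
```

Dividing total revenue by total spend, rather than averaging each ad's ROAS, keeps a $20 fluke from outweighing a $2,000 workhorse. The ad count per tag is worth reporting too, since a pattern backed by two ads is a hunch, not a finding.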

Module 4: Creative Brief Generation

Based on the analysis, the agent generates briefs for the next round of creative:

```python
def generate_next_test_brief(historical_insights, current_winners):
    """
    Creates a structured creative brief for the next testing round
    based on accumulated learnings.
    """
    brief_prompt = f"""
    Based on these accumulated test insights:
    {historical_insights}

    And these current top performers:
    {current_winners}

    Generate a creative testing brief with:
    - 3 new hook variations to test (different from previous rounds)
    - 2 new visual concepts
    - Recommended copy structures
    - Specific elements to iterate on from current winners
    - 1 wildcard concept that breaks from current patterns

    Format as an actionable brief a designer/copywriter can execute.
    """

    brief = openclaw_agent.generate(brief_prompt)
    return brief
```

This doesn't replace your creative team — it accelerates them. Instead of spending hours figuring out what to test next, they get a data-informed brief that tells them exactly which elements to iterate on and which new directions to explore.

Module 5: Test Campaign Setup

For approved creatives, the agent handles the entire Ads Manager setup:

```python
# NOTE: meta_api is a placeholder wrapper around the Meta Marketing API, and
# generate_utms is your own helper. Check parameter names against the API
# version you target before relying on them.

def create_test_campaign(creatives, test_config):
    """
    Programmatically creates a structured test campaign in Meta.
    Uses ABO structure for clean isolation.
    """
    campaign = meta_api.create_campaign(
        name=f"Creative Test - {test_config['round_name']}",
        objective="OUTCOME_SALES",
        buying_type="AUCTION",
        special_ad_categories=test_config.get("special_categories", [])
    )

    # One ad set per creative, each with its own budget (ABO)
    for creative in creatives:
        adset = meta_api.create_adset(
            campaign_id=campaign["id"],
            name=f"Test - {creative['name']}",
            daily_budget=test_config["budget_per_creative"],
            targeting=test_config["targeting"],
            optimization_goal="OFFSITE_CONVERSIONS",
            billing_event="IMPRESSIONS",
            bid_strategy="LOWEST_COST_WITHOUT_CAP"
        )
        ad = meta_api.create_ad(
            adset_id=adset["id"],
            name=creative["name"],
            creative_id=creative["meta_creative_id"],
            tracking_specs=test_config["tracking"],
            utm_parameters=generate_utms(creative, test_config)
        )

    return campaign
```

What used to take 4 to 12 hours of clicking around in Ads Manager now takes a few minutes of automated setup. The agent handles UTM parameters, naming conventions, budget allocation, and targeting configuration — all consistently, every time.
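The setup code calls a `generate_utms` helper without showing it. Here is one possible sketch, assuming a naming convention where the test round becomes `utm_campaign` and the creative name becomes `utm_content`. The convention and the `meta` / `paid_social` values are assumptions, so swap in whatever your analytics stack already expects.

```python
import re
from urllib.parse import urlencode


def slugify(text: str) -> str:
    """Lowercase the text and collapse anything non-alphanumeric into single hyphens."""
    return re.sub(r"[^a-z0-9]+", "-", text.lower()).strip("-")


def generate_utms(creative: dict, test_config: dict) -> str:
    """Build a consistent UTM query string for one creative in one test round."""
    params = {
        "utm_source": "meta",
        "utm_medium": "paid_social",
        "utm_campaign": slugify(test_config["round_name"]),
        "utm_content": slugify(creative["name"]),
    }
    return urlencode(params)


print(generate_utms({"name": "UGC Hook A / v2"}, {"round_name": "Round 12"}))
# utm_source=meta&utm_medium=paid_social&utm_campaign=round-12&utm_content=ugc-hook-a-v2
```

Slugging the names in one place is what makes the "consistently, every time" part true: the same creative always maps to the same `utm_content`, so analytics rows join cleanly against ad names later.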

Putting It All Together

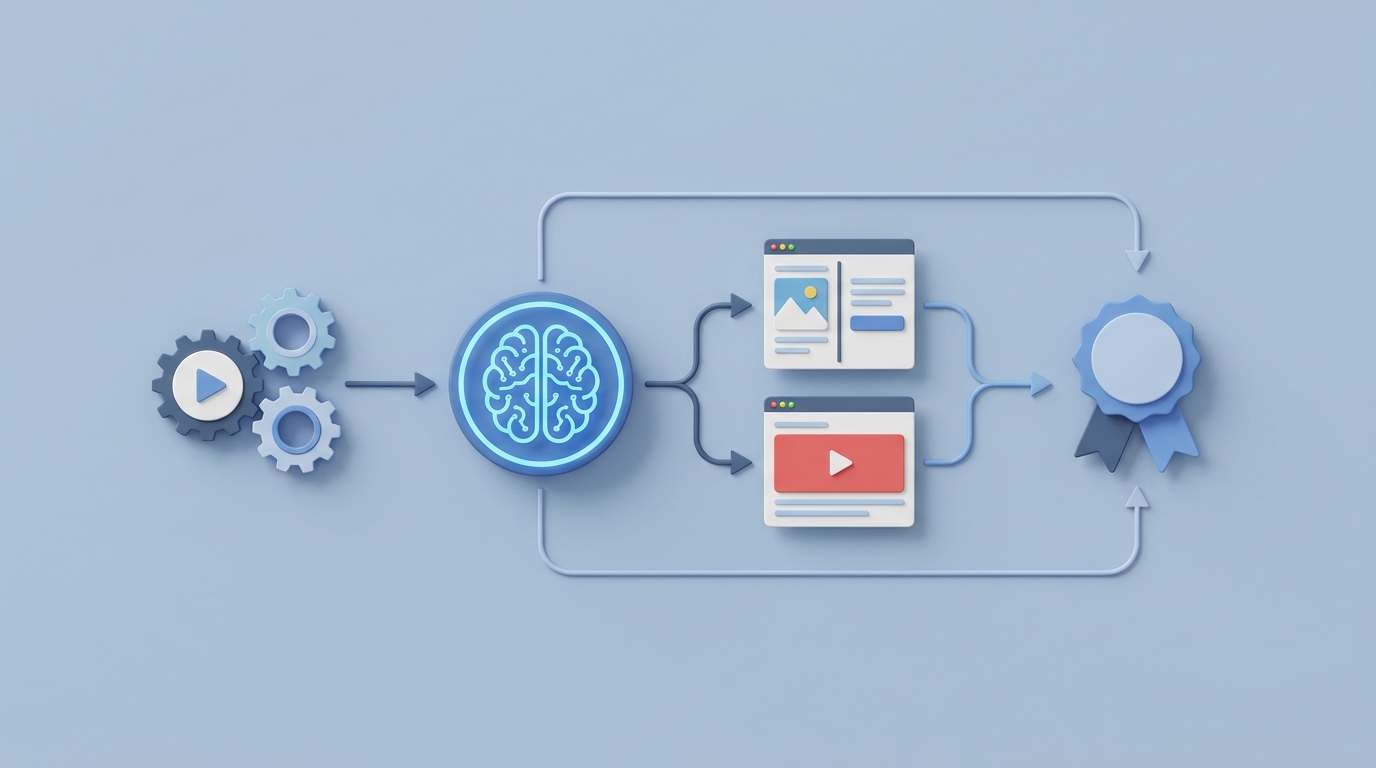

On OpenClaw, you wire these modules into a single agent workflow:

- Every 4 hours: Pull performance data, evaluate against rules, pause losers, scale winners.

- End of test period (day 7 or when significance is reached): Run creative element analysis, extract learnings, update the insights database.

- Automatically: Generate the next round's creative brief based on accumulated data.

- On approval: Set up the next test campaign programmatically.

The agent runs continuously. You interact with it when it needs decisions — reviewing briefs, approving creatives, adjusting strategy — not when it needs button-clicking.
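"When significance is reached" needs an actual test behind it. One common option, sketched here as an assumption rather than the only valid choice, is a two-proportion z-test on conversion rates between two creatives. The function names and the 0.05 threshold are illustrative.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_p_value(conv_a: int, n_a: int, conv_b: int, n_b: int) -> float:
    """Two-sided p-value for a difference in conversion rate between two ads."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 1.0  # identical all-or-nothing rates, so no evidence of a difference
    z = (p_a - p_b) / se
    return 2 * (1 - NormalDist().cdf(abs(z)))


def significance_reached(conv_a, n_a, conv_b, n_b, alpha=0.05) -> bool:
    """True when the observed gap is unlikely to be noise at the chosen alpha."""
    return two_proportion_p_value(conv_a, n_a, conv_b, n_b) < alpha


# Hypothetical counts: conversions out of clicks for two creatives
print(significance_reached(40, 1000, 20, 1000))  # 4% vs 2% on 1,000 clicks each
print(significance_reached(12, 300, 9, 300))     # 4% vs 3% on 300 clicks each
```

One caution: if the agent runs this check every 4 hours and stops the first time it passes, false positives go up, because repeated peeking gives noise more chances to cross the line. Pairing the test with a minimum spend or a minimum sample size per creative keeps that in check.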

What Still Needs a Human

I want to be clear about this because over-automation is just as dangerous as under-automation.

Strategic direction. The agent can tell you that problem-solution hooks outperform lifestyle content. It cannot tell you that your brand should pivot from aspirational messaging to pain-point messaging because the competitive landscape shifted. That's a human call.

Creative quality control. AI-generated creative variants still need a human eye. Every creative should be reviewed before launch. The agent can prepare everything, but a person needs to confirm it's on-brand, not cringey, and legally compliant.

Brand voice and taste. AI trends toward the average. The creatives that break through — the ones that feel genuinely fresh or emotionally resonant — almost always have a human touch. Use AI for volume and iteration. Use humans for the breakthrough concepts.

Compliance. If you're in health, finance, beauty, or any regulated category, human review isn't optional. The agent can flag potential issues, but final compliance calls need a person.

Business context. Seasonality, inventory levels, competitive moves, PR situations — the agent doesn't know about any of this unless you tell it. Strategic budget allocation and timing decisions remain firmly human.

The ideal split: the agent handles 70 to 80% of the mechanical work. Humans handle strategy, quality, and final decisions. Your media buyer becomes a strategist who reviews agent recommendations rather than a button-clicker who lives inside Ads Manager.

Expected Time and Cost Savings

Based on real numbers from teams that have implemented similar automation:

| Task | Manual Time | With OpenClaw Agent | Savings |
|---|---|---|---|
| Performance monitoring | 7–10 hrs/week | ~30 min review | 85–90% |
| Pausing/scaling decisions | 3–5 hrs/week | Automated | 95%+ |
| Data analysis per round | 4–10 hrs | 30 min review | 85–90% |
| Test campaign setup | 4–12 hrs | 15 min approval | 90%+ |
| Creative briefing | 2–4 hrs | 30 min review/edit | 75–80% |
| Total per cycle | 30–70 hrs | 5–10 hrs | ~80% |

Take a DTC brand spending $50K/month on Meta ads, with $15K of that going to tests and a 70% waste rate on test spend. Faster pausing alone could realistically bring waste down to 40–50%. That 20 to 30 point drop on $15K of monthly test budget works out to $3,000 to $4,500 in recovered spend, every month.
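The arithmetic behind that range is simple enough to rerun with your own numbers. This sketch uses the figures from the example above ($15K test budget, waste falling from 70% to somewhere between 40% and 50%); the function name is made up.

```python
def recovered_spend(test_budget: float, waste_before: float, waste_after: float) -> float:
    """Monthly spend recovered when the share of wasted test budget drops."""
    return test_budget * (waste_before - waste_after)


low = recovered_spend(15_000, 0.70, 0.50)   # waste falls by 20 points
high = recovered_spend(15_000, 0.70, 0.40)  # waste falls by 30 points
print(f"${low:,.0f} to ${high:,.0f} per month")
# $3,000 to $4,500 per month
```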

A small agency managing 5 accounts could reallocate 60+ hours per week from mechanical tasks to strategy and client growth.

The compound effect matters too. Faster testing cycles mean more learnings per month, which means better creatives, which means better ROAS, which means more budget for testing. It's a flywheel, and automation is what makes it spin fast enough to matter.

Getting Started

You don't have to build all five modules at once. Start with Module 1 and Module 2 — performance monitoring and auto-pause rules. That alone will save you significant time and money in the first week.

Then layer in the analysis module. Then the brief generation. Then the campaign setup automation. Each layer compounds the value of the previous ones.

If you want to skip the build-from-scratch phase, browse Claw Mart for pre-built Meta Ads automation agents and templates that you can customize for your account. Many of the patterns described here are already available as starting points.

For teams that want a fully custom implementation — a creative testing agent tuned to your specific brand, metrics, and workflow — Clawsource it. Post your project on Claw Mart and connect with OpenClaw builders who've already done this for other brands. You'll get a working system in days instead of weeks, built by someone who's already solved the edge cases you haven't thought of yet.

The brands that win on Meta in 2026 aren't the ones with the biggest budgets. They're the ones with the fastest creative feedback loops. Automation is how you build that loop.