Automate Chatbot Training: Build an AI Agent That Improves Itself from Tickets

Most teams building chatbots spend the majority of their time on the most boring part: training. Not the interesting architectural decisions. Not the conversation design. The grinding, repetitive work of pulling ticket data, labeling intents, generating utterance variations, updating the knowledge base, and then doing it all again in six weeks when the model starts drifting.

I've watched companies burn three to seven months getting a customer support bot to production, then dedicate one or two full-time people just to keep it accurate. The dirty secret of conversational AI is that the maintenance never ends, and it's almost entirely manual.

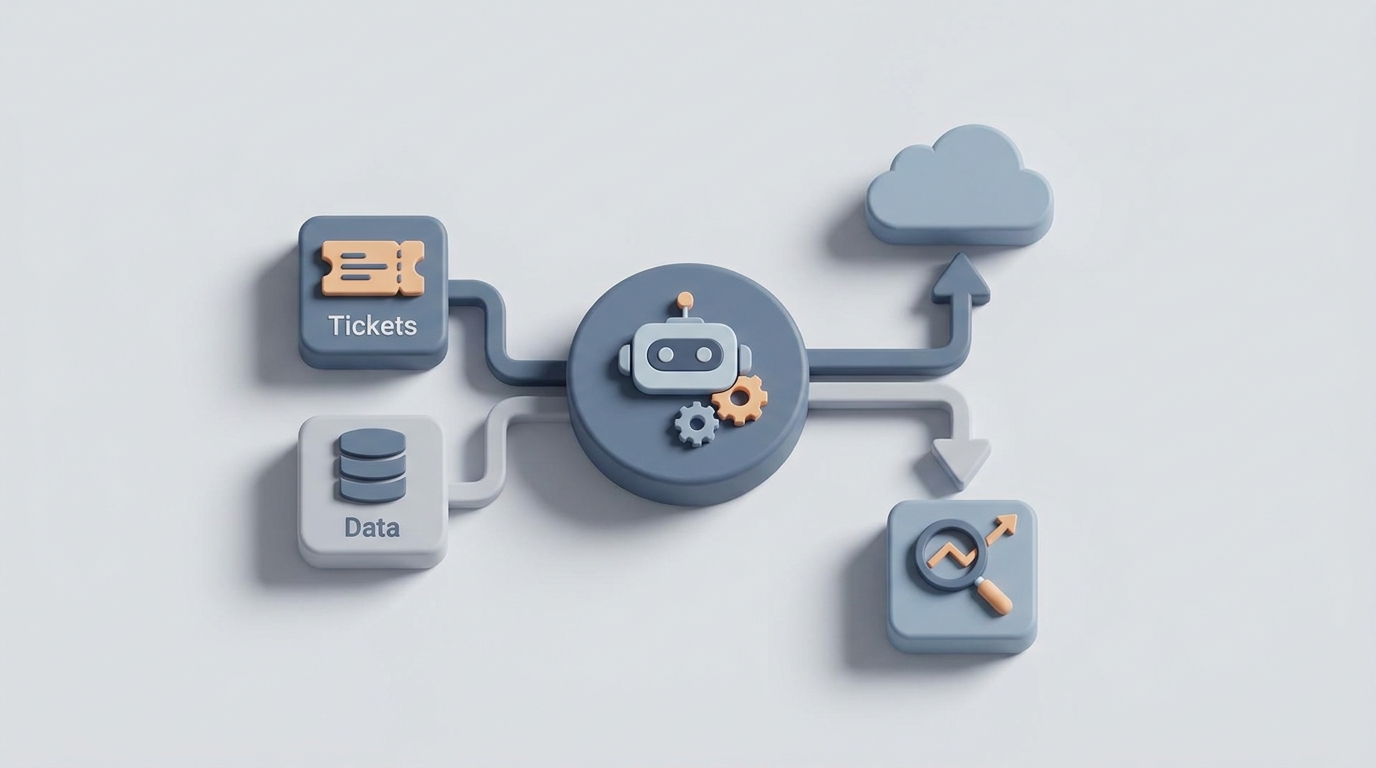

But here's what's changed: you can now build an AI agent that handles the bulk of this training workflow autonomously. Not a chatbot that talks to customers, but an agent that trains the chatbot. It reads your tickets, identifies new intents, generates training data, updates your knowledge base, and flags the items that actually need a human to weigh in.

This post walks through exactly how to build that agent on OpenClaw, step by step.

The Manual Workflow (And Why It's Crushing Your Team)

Let's be honest about what chatbot training actually looks like in most organizations. Here's the typical loop:

Step 1: Intent and Scope Definition (1–3 weeks). Product and UX teams sit in rooms mapping business goals to intents. "cancel_order", "track_shipment", "billing_dispute", "escalate_to_human." This requires deep domain knowledge, and it's usually incomplete on the first pass. You discover new intents for months after launch.

Step 2: Utterance Collection and Variation Generation (2–4 weeks). Someone exports thousands of tickets from Zendesk or Intercom, reads through them, and groups them by intent. Then they brainstorm or manually write variations of how customers phrase each request. "Where's my order?" vs. "I haven't received my package" vs. "tracking says delivered but I don't have it." This is mind-numbing work.

Step 3: Data Labeling and Annotation (3–8 weeks). Humans tag every utterance with its intent, extract entities (order numbers, product SKUs, dates), and mark tone and escalation triggers. This single step consumes 70–80% of total project time according to Scale AI and Labelbox reports. At $0.08–$0.35 per utterance with specialized annotators, a 10,000-utterance dataset costs real money and real calendar time.

Step 4: Knowledge Base Curation (2–4 weeks, then ongoing). Select, rewrite, and chunk internal docs, FAQs, and policy PDFs so the chatbot can retrieve accurate answers. Someone has to verify every chunk is current and correct. This never stops because policies change, products launch, and pricing updates.

Step 5: Testing and Red-Teaming (1–2 weeks per cycle). Manual test suites, adversarial prompts to check for hallucinations, shadow testing against live traffic. GPT-4-class models still hallucinate 8–27% of the time on knowledge-intensive queries without strong retrieval and guardrails (Stanford and Anthropic, 2026).

Step 6: Monitoring and Retraining (ongoing, forever). Weekly review of conversation logs. Relabeling failures. Adding new intents when customer behavior drifts. Companies report retraining every 4–12 weeks. Forrester found that enterprises spend $200K–$1.2M per year on chatbot maintenance across their portfolio.

Step 7: Human-in-the-Loop Approval. For high-stakes responses (pricing, refunds, medical advice, anything with legal exposure), a human reviews before the training data or response template goes live.

Total timeline for an enterprise support bot with 50–100 intents: three to seven months to production. Then 15–40% of a full-time person's time indefinitely.

That's the reality. Now let's talk about what we can automate.

What Makes This Painful

Three things make chatbot training disproportionately expensive:

The labeling bottleneck. Seventy to eighty percent of project time is spent on data labeling and annotation. This is largely mechanical work — a human reads a ticket, decides the intent, tags the entities — but it requires enough domain knowledge that you can't just throw it at the cheapest crowd-sourced labor and expect accuracy.

Drift is constant. Your customers don't ask the same questions the same way forever. New products launch. Policies change. Seasonal patterns shift. A chatbot trained in January is noticeably degraded by April. This means the labeling bottleneck isn't a one-time cost; it's a recurring tax.

Errors compound silently. A mislabeled intent doesn't throw an error. It just makes the chatbot slightly worse in ways that are hard to detect until a customer complains or an analyst happens to catch it in a log review. By the time you find the problem, it's been live for weeks.

Gartner's 2026 data says 62% of conversational AI projects never reach production. The primary reason isn't bad technology — it's that accuracy stalls below business thresholds (typically 85% intent accuracy on real traffic) because the training data isn't good enough, diverse enough, or fresh enough.

What AI Can Handle Now

The good news: the most time-consuming parts of chatbot training are exactly the parts that modern AI agents handle well. Here's what you can automate today:

Intent discovery from raw tickets. Instead of humans reading thousands of tickets and manually clustering them, an AI agent can embed ticket text, cluster with algorithms like HDBSCAN, and use LLM summarization to name and describe discovered intents. This turns weeks of work into hours.

Synthetic utterance generation. Once you have an intent defined, an LLM can generate hundreds of realistic variations — including edge cases, typos, slang, and industry jargon — far faster than a human brainstorming session.

Auto-labeling with confidence scoring. The agent labels incoming tickets with intent and entity tags, assigns a confidence score, and only routes low-confidence items to humans. Active learning loops like this reduce manual labeling volume by 50–90% (documented in Snorkel and Scale AI case studies).

Knowledge base extraction and chunking. The agent can ingest new policy documents, extract relevant Q&A pairs, chunk them appropriately for retrieval, and flag conflicts with existing knowledge base entries.

Regression test generation. Automatically generate test cases from real conversation logs and flag regressions when model updates cause previously-correct responses to break.

Conversation log summarization. Instead of analysts reading hundreds of transcripts weekly, the agent produces structured summaries: new failure patterns, emerging intents, drift metrics.
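To make the intent discovery idea above more concrete, here is a minimal sketch of the "embed, then cluster" step. It is deliberately a stand-in, not the real thing: word-count vectors replace an actual embedding model, and a greedy similarity threshold replaces HDBSCAN, so the example runs with only the Python standard library. The function names and the 0.5 threshold are assumptions chosen for illustration.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: lowercase word counts (stand-in for a real embedding model)."""
    return Counter(re.findall(r"[a-z0-9']+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[w] * b[w] for w in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def cluster_tickets(tickets: list[str], threshold: float = 0.5) -> list[list[str]]:
    """Greedy clustering: a ticket joins the first cluster whose seed is similar enough."""
    clusters: list[tuple[Counter, list[str]]] = []
    for text in tickets:
        vec = embed(text)
        for seed_vec, members in clusters:
            if cosine(vec, seed_vec) >= threshold:
                members.append(text)
                break
        else:
            # No existing cluster matched, so this ticket seeds a new one.
            clusters.append((vec, [text]))
    return [members for _, members in clusters]


tickets = [
    "where is my order",
    "where is my order it has not arrived",
    "please cancel my subscription",
    "cancel my subscription today please",
]
groups = cluster_tickets(tickets)
for group in groups:
    print(len(group), "tickets, e.g.:", group[0])
```

Each resulting group would then be handed to an LLM to name and describe. A density-based algorithm such as HDBSCAN has the advantage of not needing a hand-picked threshold and of leaving outliers unclustered, which is why it is the better choice on real ticket volumes.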

Step-by-Step: Building the Self-Improving Training Agent on OpenClaw

Here's how to build this end to end. We're creating an agent that ingests your support tickets, maintains your chatbot's training data, and continuously improves accuracy with minimal human involvement.

Step 1: Set Up Your Ticket Ingestion Pipeline

Connect your ticket source (Zendesk, Intercom, Freshdesk, or a custom database) to OpenClaw. You want a scheduled pull — daily or twice daily depending on ticket volume.

In OpenClaw, you'll configure this as an input source for your agent. The agent needs access to:

- Ticket text (customer message + agent response)

- Resolution status

- Any existing tags or categories

- Customer satisfaction scores if available

The agent's first task is straightforward: for each new batch of tickets, embed the text and store it in a vector index. OpenClaw handles the embedding and storage — you configure the chunking strategy (for support tickets, whole-ticket embedding usually works better than splitting into paragraphs).
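As a rough illustration of what one ingested record might look like before it is embedded, the sketch below models a ticket with the four inputs listed above and joins the customer message and agent response into a single whole-ticket document. The `Ticket` class, its field names, and `select_new` are hypothetical; they are not OpenClaw's API, which this post does not specify.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Ticket:
    """One support ticket with the fields the agent needs (names are illustrative)."""
    ticket_id: str
    customer_message: str
    agent_response: str
    resolution_status: str
    tags: list[str] = field(default_factory=list)
    csat: Optional[int] = None  # satisfaction score, if the help desk provides one

    def to_document(self) -> str:
        """Join the whole ticket into one string so it is embedded as a single unit."""
        return (
            f"Customer: {self.customer_message.strip()}\n"
            f"Agent: {self.agent_response.strip()}"
        )


def select_new(batch: list[Ticket], seen_ids: set[str]) -> list[Ticket]:
    """Keep only tickets that an earlier scheduled pull has not already ingested."""
    fresh = [t for t in batch if t.ticket_id not in seen_ids]
    seen_ids.update(t.ticket_id for t in fresh)
    return fresh


seen: set[str] = {"t-1"}
batch = [
    Ticket("t-1", "Where is my order?", "It ships Monday.", "solved"),
    Ticket("t-2", " Cancel my plan ", "Done.", "solved", tags=["billing"], csat=5),
]
fresh = select_new(batch, seen)
print([t.ticket_id for t in fresh])
print(fresh[0].to_document())
```

Tracking already-seen IDs keeps a daily or twice-daily pull from embedding the same ticket twice.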

Step 2: Build the Intent Discovery Module

This is where the agent starts earning its keep. Configure it with a system prompt along these lines:

You are an intent discovery agent for a customer support chatbot.

Your job:

1. Analyze clusters of similar support tickets

2. Identify distinct customer intents

3. For each intent, provide: a canonical name (snake_case), a plain-English description, 3-5 representative example utterances from real tickets, and suggested entity slots (order_id, product_name, date, etc.)

4. Flag intents that overlap significantly and recommend whether to merge or split them

5. Compare discovered intents against the existing intent taxonomy and flag: new intents not yet covered, existing intents with shifting language patterns, intents that appear to be declining in frequency

Current intent taxonomy:

{existing_intents}

New ticket batch:

{ticket_batch}

On OpenClaw, you set this agent to run after each ticket ingestion. It outputs a structured JSON of intent updates — new intents to add, existing intents to update, and suggested merges or splits.
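Because downstream agents consume this JSON, it is worth validating it before acting on it. The sketch below shows one way that check could look. The key names (`new_intents`, `updated_intents`, `merge_or_split`) and the per-intent fields are assumptions for illustration, since the exact output schema depends on how you word the prompt.

```python
import json
import re

SNAKE_CASE = re.compile(r"^[a-z][a-z0-9]*(_[a-z0-9]+)*$")
EXPECTED_KEYS = ("new_intents", "updated_intents", "merge_or_split")  # assumed key names


def parse_intent_updates(raw: str) -> dict:
    """Parse the agent's JSON reply and reject malformed intent proposals."""
    data = json.loads(raw)
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object at the top level")
    for key in EXPECTED_KEYS:
        if not isinstance(data.get(key), list):
            raise ValueError(f"missing or non-list key: {key}")
    for intent in data["new_intents"]:
        name = intent.get("name", "")
        if not SNAKE_CASE.match(name):
            raise ValueError(f"intent name is not snake_case: {name!r}")
        examples = intent.get("examples", [])
        # The prompt asks for 3-5 representative utterances per intent.
        if not 3 <= len(examples) <= 5:
            raise ValueError(f"{name}: expected 3-5 examples, got {len(examples)}")
    return data


reply = json.dumps({
    "new_intents": [{
        "name": "change_delivery_address",
        "description": "Customer wants to change where an order is delivered.",
        "examples": ["can i change my address", "wrong address on order", "ship it elsewhere"],
        "entity_slots": ["order_id"],
    }],
    "updated_intents": [],
    "merge_or_split": [],
})
updates = parse_intent_updates(reply)
print(updates["new_intents"][0]["name"])
```

Rejecting a malformed reply early is cheaper than discovering a badly named or under-evidenced intent after training data has been generated for it.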

Step 3: Automate Utterance Generation and Labeling

Once the intent discovery module identifies intents, the next agent in the chain generates training utterances. Here's the key: you don't just generate random paraphrases. You generate realistic variations based on actual ticket language.

Configure the utterance generation agent:

You are a training data generator for a customer support chatbot.

Given an intent definition and real example tickets, generate training utterances that:

1. Cover diverse phrasings (formal, casual, frustrated, brief, verbose)

2. Include realistic typos and abbreviations (2-3% of outputs)

3. Include entity variations (different product names, order number formats, date formats)

4. Include negative examples — utterances that look similar but belong to a different intent

5. Assign a confidence score (0.0-1.0) to each label

For utterances with confidence below 0.85, mark them for human review.

Intent: {intent_definition}

Real examples: {real_ticket_examples}

Existing training data for this intent: {current_training_data}

Generate 50 new training utterances.

The auto-labeling component works similarly. New incoming tickets get classified against existing intents. High-confidence classifications (above your threshold, typically 0.90+) go straight into the training set. Low-confidence ones get queued for human review.

This is the single biggest time saver. Instead of labeling 10,000 utterances manually, your team reviews maybe 500–1,500 edge cases. The agent handles the rest.
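The routing rule itself is small. Here is a minimal sketch, assuming each classification arrives with a confidence between 0.0 and 1.0; the `Prediction` class and `route` function are hypothetical names, and the 0.90 default mirrors the typical threshold mentioned above.

```python
from dataclasses import dataclass


@dataclass
class Prediction:
    text: str
    intent: str
    confidence: float  # 0.0-1.0, as reported by the labeling agent


def route(
    predictions: list[Prediction], threshold: float = 0.90
) -> tuple[list[Prediction], list[Prediction]]:
    """Split predictions into auto-accepted labels and a human review queue."""
    accepted = [p for p in predictions if p.confidence >= threshold]
    review = [p for p in predictions if p.confidence < threshold]
    return accepted, review


predictions = [
    Prediction("where's my package", "track_shipment", 0.97),
    Prediction("i was charged twice", "billing_dispute", 0.93),
    Prediction("this is not what i expected", "cancel_order", 0.61),
]
accepted, review = route(predictions)
print(len(accepted), "auto-labeled;", len(review), "sent to human review")
```

Keeping the threshold as a parameter makes it easy to start strict and relax it once spot checks confirm the auto-accepted labels are accurate.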

Step 4: Knowledge Base Maintenance Agent

This agent monitors for knowledge base staleness and gaps. It compares:

- Questions customers are asking (from ticket analysis) against answers currently in the knowledge base

- New internal documents (policy updates, product launches) against existing KB entries

- Chatbot responses that led to escalation or negative CSAT against the KB content that was retrieved

Configure it to output a weekly KB health report:

You are a knowledge base maintenance agent.

Analyze the following data and produce a structured report:

1. GAPS: Customer questions with no matching KB article (include frequency counts)

2. STALENESS: KB articles that contradict information in recent tickets or updated policy documents

3. CONFLICTS: KB articles that contradict each other

4. SUGGESTED UPDATES: For each gap or stale article, draft an updated or new KB entry

5. RETRIEVAL FAILURES: Questions where the correct KB article exists but wasn't retrieved (suggest re-chunking or re-indexing)

Customer tickets (last 7 days): {recent_tickets}

Current knowledge base: {kb_contents}

Updated policy documents: {new_policies}

Escalation logs: {escalation_data}

This is where OpenClaw's ability to chain agents together becomes particularly useful. The KB maintenance agent's output feeds into the intent discovery agent (new KB articles might mean new intents) and the utterance generator (new topics need training data).

Step 5: Regression Testing and Drift Detection

Build a testing agent that runs after every training data update:

You are a QA agent for a customer support chatbot.

Given the updated training data and knowledge base, generate test cases that:

1. Cover every intent with at least 5 test utterances

2. Include adversarial examples (ambiguous queries, out-of-scope requests, prompt injection attempts)

3. Test entity extraction accuracy

4. Verify that previously-passing test cases still pass

5. Flag any regressions with severity (critical/high/medium/low)

Previous test results: {last_test_run}

Updated training data: {new_training_data}

Updated knowledge base: {new_kb}

Run this automatically every time the training pipeline produces new data. Regressions get flagged to your team immediately. Non-regressions proceed automatically.
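The regression check reduces to comparing two test runs. A minimal sketch, assuming each run is stored as a mapping from a test ID to a pass/fail flag (the storage format and the test IDs shown are illustrative):

```python
def find_regressions(previous: dict[str, bool], current: dict[str, bool]) -> list[str]:
    """Return test IDs that passed in the previous run but fail (or are missing) now."""
    return sorted(
        test_id
        for test_id, passed in previous.items()
        if passed and not current.get(test_id, False)
    )


previous_run = {
    "track_shipment_01": True,
    "cancel_order_03": True,
    "billing_dispute_02": False,
}
current_run = {
    "track_shipment_01": True,
    "cancel_order_03": False,   # used to pass, now fails: a regression
    "billing_dispute_02": True,  # newly fixed, not a regression
}

regressions = find_regressions(previous_run, current_run)
if regressions:
    print("Blocked, needs review:", regressions)
else:
    print("No regressions, update can proceed")
```

Treating a missing test as a failure is a conservative choice: a test that silently disappears from the suite should be noticed, not ignored.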

Step 6: The Human Review Queue

Everything above generates a focused queue of items that actually need human judgment. On OpenClaw, you configure this as a dashboard or notification system that surfaces:

- Low-confidence labels (typically 5–15% of total volume)

- New intent proposals (the agent discovered something new — does the business want to support it?)

- KB updates that affect pricing, refunds, legal language, or compliance

- Regression alerts

- Suggested intent merges or splits

This is the critical design decision: the agent does the volume work, humans make the judgment calls. Your conversation designers and domain experts spend their time on decisions that matter instead of reading tickets and typing labels.

What Still Needs a Human

Let me be direct about the boundaries. AI agents are bad at:

Defining business strategy. Which intents are worth building versus routing to a human? What's your escalation philosophy? What tone of voice represents your brand? These are business decisions, not data problems.

Compliance and legal accuracy. If your chatbot discusses pricing, medical information, financial advice, or anything with legal exposure, a human must validate the training data and KB entries. LLMs cannot be trusted to self-validate company policy. Period.

Novel edge cases. The first time a genuinely new scenario appears — a product recall, a PR crisis, a regulatory change — a human needs to define the response strategy before the agent can incorporate it.

Emotional judgment. Deciding when a frustrated customer needs a human agent versus an automated response requires contextual and emotional intelligence that current AI handles poorly.

The final quality gate. Before any training update goes to production, someone on your team should review the agent's summary of changes. This takes minutes, not days, but it should happen.

Expected Time and Cost Savings

Based on the benchmarks cited above and what teams are seeing with agent-automated workflows:

| Phase | Manual Timeline | With OpenClaw Agent | Reduction |
|---|---|---|---|
| Intent Discovery | 2–3 weeks | 1–2 days | ~85% |
| Utterance Generation | 2–4 weeks | Hours (+ human review) | ~90% |
| Data Labeling | 3–8 weeks | 3–5 days (edge cases only) | ~80% |
| KB Curation | 2–4 weeks initial, ongoing | Ongoing with weekly reviews | ~60% |
| Testing | 1–2 weeks per cycle | Automated + alert review | ~75% |
| Monitoring & Retraining | 15–40% FTE ongoing | 5–10% FTE (review queue only) | ~65% |

For the overall initial build, teams are cutting from 3–7 months down to 3–6 weeks. For ongoing maintenance, from one to two dedicated people down to a few hours per week of focused human review.

In dollar terms: if you're spending $200K–$500K annually on chatbot maintenance (which is typical for mid-to-large enterprises running multiple bots), you can reasonably expect to cut that by 50–70%. The agent handles the volume; your expensive human experts handle the judgment.
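To turn those percentages into a dollar range, the arithmetic can be sketched as follows. The function name is hypothetical, and pairing the low spend with the low cut and the high spend with the high cut is an assumption that gives the widest plausible savings range.

```python
def savings_range(
    spend_low: int, spend_high: int, cut_low_pct: int, cut_high_pct: int
) -> tuple[int, int]:
    """Smallest and largest annual savings implied by a spend range and a cut range."""
    return spend_low * cut_low_pct // 100, spend_high * cut_high_pct // 100


low, high = savings_range(200_000, 500_000, 50, 70)
print(f"${low:,} to ${high:,} saved per year")  # $100,000 to $350,000 saved per year
```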

Getting Started

The fastest path is this:

- Start with your ticket data. Export your last 90 days of resolved tickets. This is your training corpus.

- Build the intent discovery agent first on OpenClaw. Even before you automate anything else, having an agent that clusters and names your intents from real data saves weeks of workshop time.

- Add auto-labeling with human review as your second module. Set the confidence threshold high (0.92+) initially and lower it as you validate accuracy.

- Layer in KB maintenance and regression testing once the core loop is working.

- Measure everything. Track intent accuracy, human review volume, time-to-update, and CSAT. These numbers justify expanding the automation.
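Two of those metrics are simple ratios you can compute from a human-verified sample. A minimal sketch, with hypothetical function names and made-up sample values:

```python
def intent_accuracy(predicted: list[str], actual: list[str]) -> float:
    """Share of utterances whose predicted intent matches the human-verified intent."""
    if len(predicted) != len(actual):
        raise ValueError("predicted and actual must have the same length")
    if not actual:
        return 0.0
    return sum(p == a for p, a in zip(predicted, actual)) / len(actual)


def review_rate(sent_to_review: int, total: int) -> float:
    """Share of all labeled items that were routed to the human review queue."""
    return sent_to_review / total if total else 0.0


predicted = ["track_shipment", "cancel_order", "billing_dispute", "cancel_order"]
actual = ["track_shipment", "cancel_order", "billing_dispute", "track_shipment"]

accuracy = intent_accuracy(predicted, actual)
rate = review_rate(1_200, 10_000)
print(f"intent accuracy: {accuracy:.0%}, human review rate: {rate:.0%}")
```

Tracked week over week, a falling review rate with stable accuracy is the signal that it is safe to lower the confidence threshold.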

You don't need to build all six components at once. Each one delivers standalone value. But together, they create a training pipeline that genuinely improves itself — your chatbot gets better every week with minimal human intervention.

If you want to skip the build-from-scratch phase, the Claw Mart marketplace has pre-built agent templates for support ticket analysis and training data generation that you can customize for your stack. And if you'd rather have someone build and manage the whole pipeline, Clawsource it — post your project and let a specialized builder handle the implementation while you focus on the business decisions that actually require your expertise.
